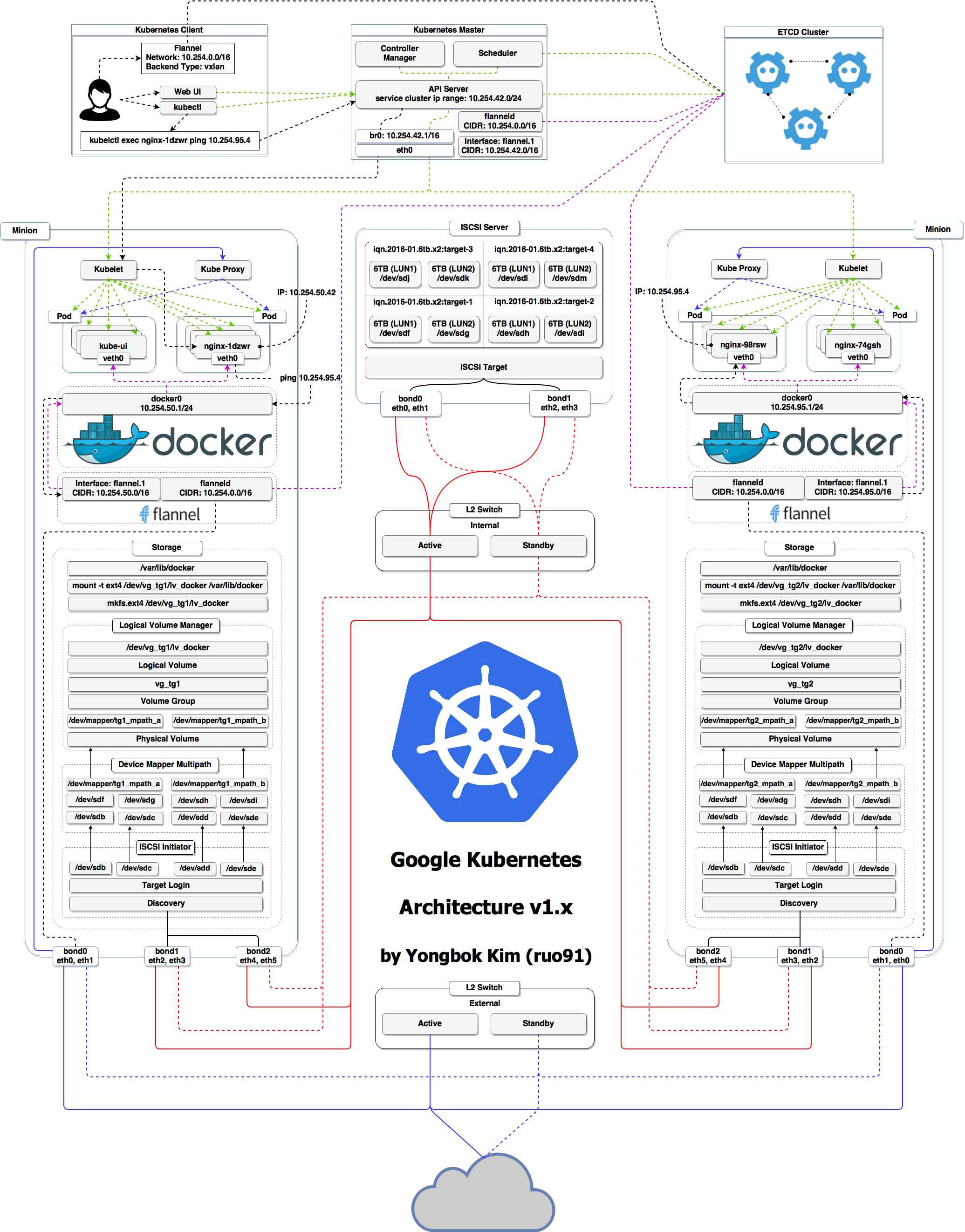

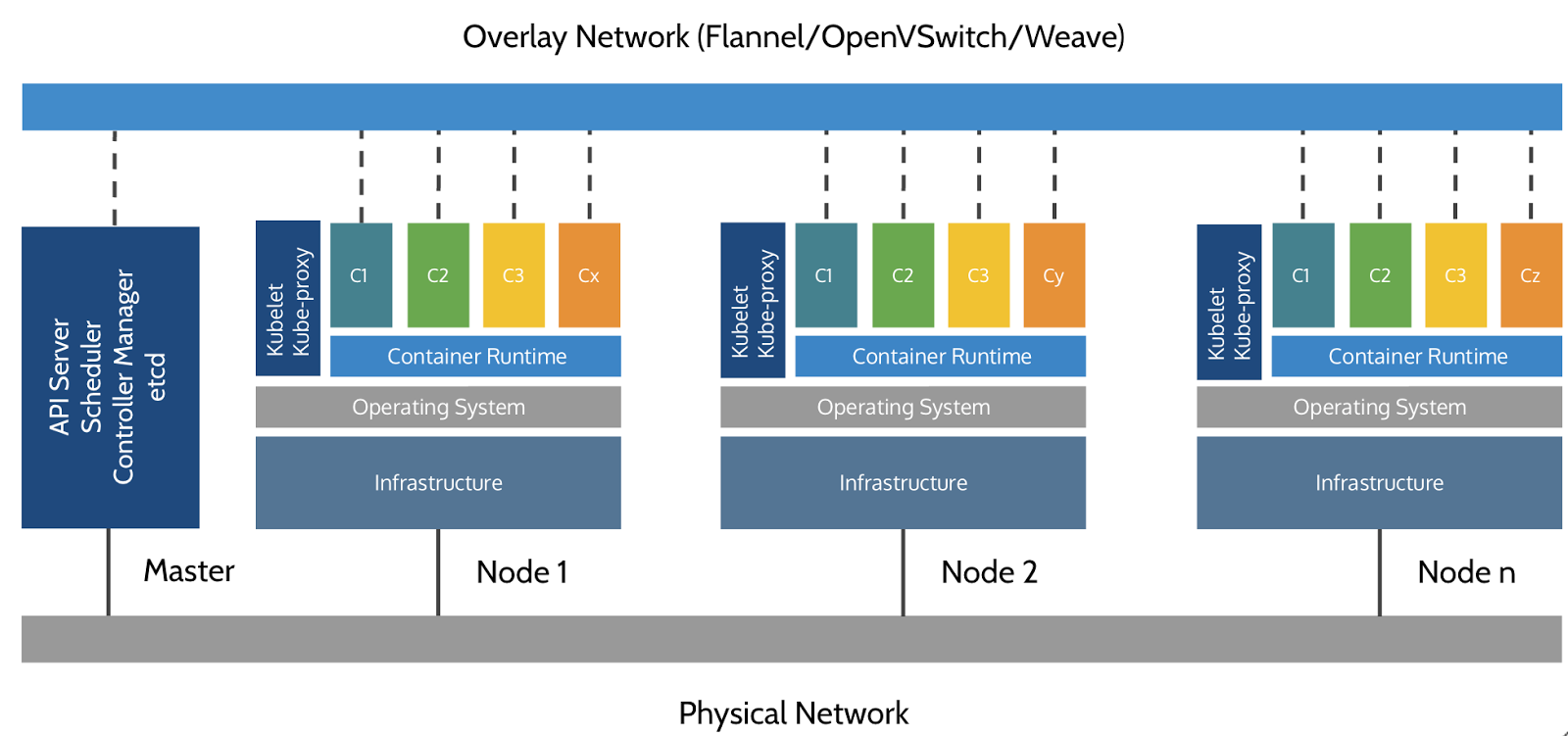

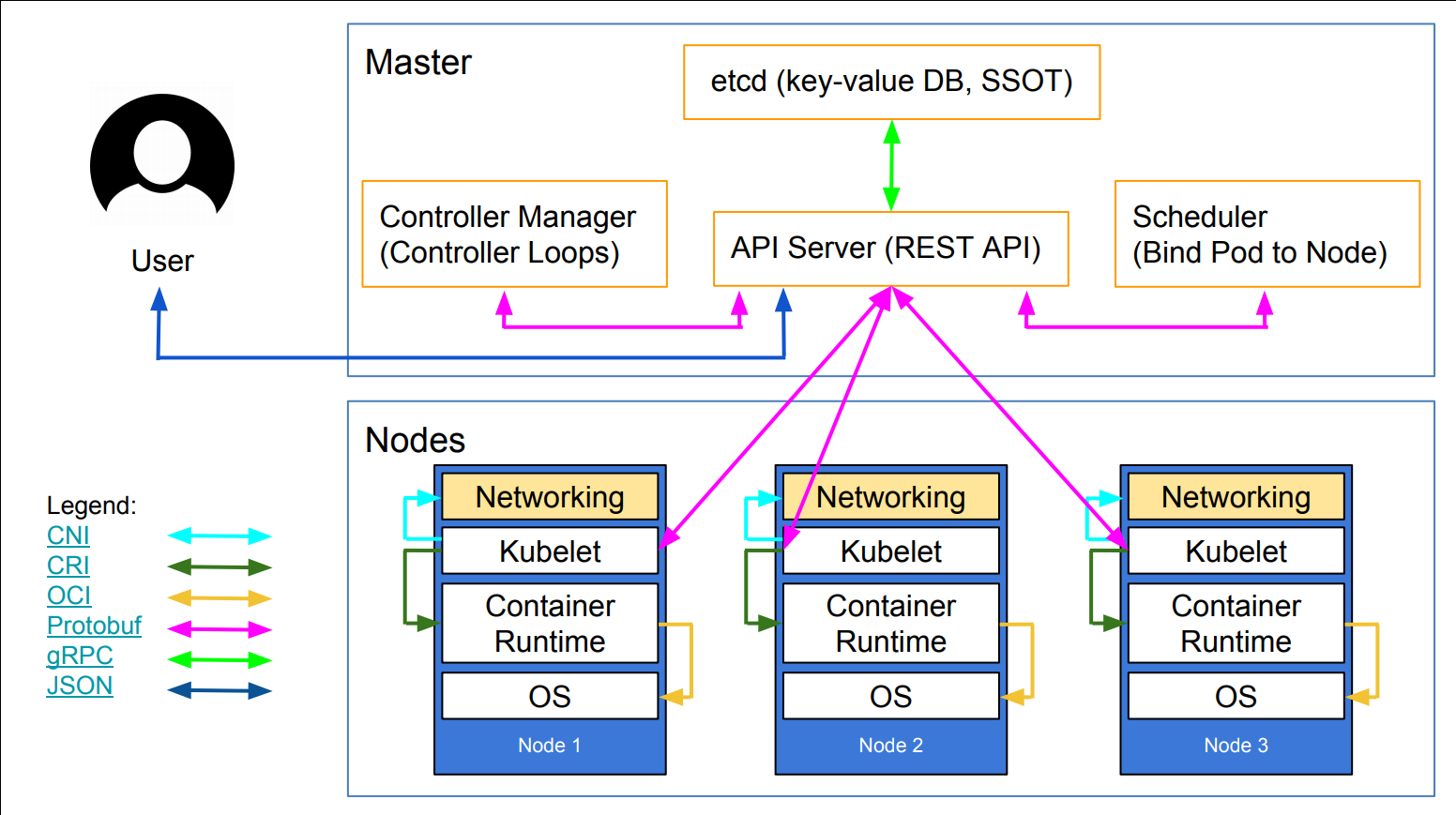

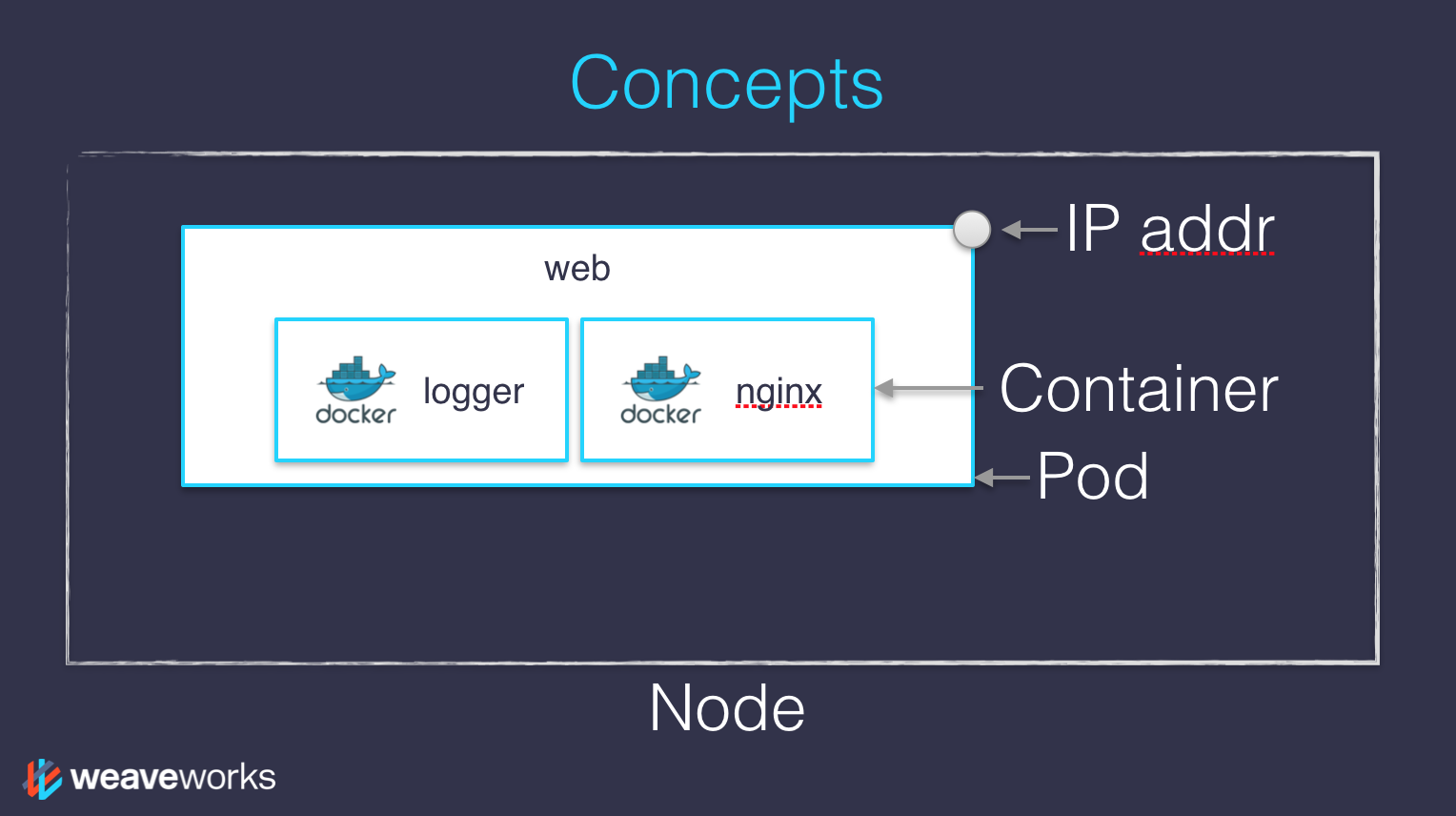

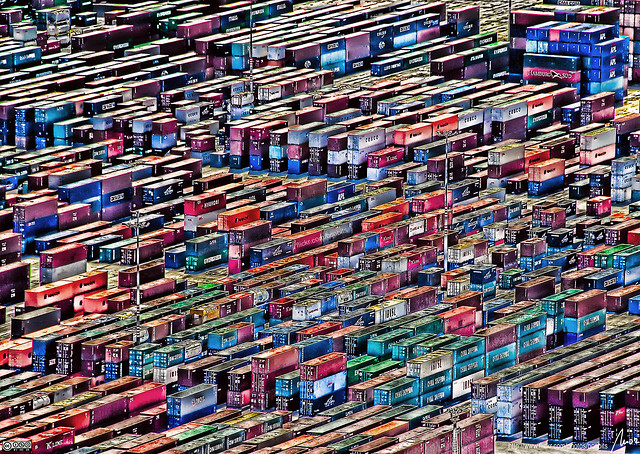

class: title, self-paced

Deploying and Scaling Microservices<br/>with Kubernetes<br/>

.nav[*Self-paced version*]

.debug[
These slides have been built from commit: 1bd528b

[shared/title.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/title.md)]

---

class: title, in-person

Deploying and Scaling Microservices<br/>with Kubernetes<br/><br/><br/>

.footnote[
**Go easy on the WiFi!**<br/>
<!-- *Use the 5G network.* -->
*Don't use your hotspot.*<br/>
*Don't stream videos or download big files during the workshop[.](https://www.youtube.com/watch?v=h16zyxiwDLY)*<br/>
**djalal, Nanterre, Sept. 13**
]

.debug[[shared/title.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/title.md)]

---

## Introductions

- Hello, I am:

  - .emoji[👨🏾🎓] djalal ([@enlamp](https://twitter.com/enlamp), ENLAMP)

- This workshop will run from 9:00 to 17:00.

- The lunch break will be between 12:00 and 13:30.

  (With two coffee breaks, at 10:30 and 15:00!)

- Feel free to interrupt me with questions at any time.
- *Especially when you see full-screen container pictures!*

- Live feedback, questions, requests for help:
  <br/>at https://tinyurl.com/docker-w-djalal

.debug[[logistics.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/logistics.md)]

---

## A brief introduction

- This was initially written by [Jérôme Petazzoni](https://twitter.com/jpetazzo) to support in-person,
  instructor-led workshops and tutorials

- Credit is also due to [multiple contributors](https://github.com/jpetazzo/container.training/graphs/contributors), thank you!

- You can also follow along on your own, at your own pace

- We included as much information as possible in these slides

- We recommend having a mentor to help you ...

- ... Or be comfortable spending some time reading the Kubernetes [documentation](https://kubernetes.io/docs/) ...

- ... And looking for answers on [StackOverflow](http://stackoverflow.com/questions/tagged/kubernetes) and other outlets

.debug[[k8s/intro.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/intro.md)]

---

class: self-paced

## Hands on, you shall practice

- Nobody ever became a Jedi by spending their lives reading Wookieepedia

- Likewise, it will take more than merely *reading* these slides
  to make you an expert

- These slides include *tons* of exercises and examples

- They assume that you have access to a Kubernetes cluster

- If you are attending a workshop or tutorial:
  <br/>you will be given specific instructions to access your cluster

- If you are doing this on your own:
  <br/>the first chapter will give you various options to get your own cluster

.debug[[k8s/intro.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/intro.md)]

---

## About these slides

- All the content is available in a public GitHub repository:

  https://github.com/jpetazzo/container.training

- You can get an up-to-date version of these slides here:

  https://container.training/ (English)
  or https://docker.djal.al/ (French)

<!--
.exercise[
```open https://github.com/jpetazzo/container.training```
```open http://container.training/```
]
-->

--

- Typos? Mistakes? Questions? Feel free to hover over the bottom of the slide...

.footnote[.emoji[👇] Try it! The source code will be displayed, and you can open it on GitHub to view it and fix it.]

<!--
.exercise[
```open https://github.com/jpetazzo/container.training/tree/master/slides/common/about-slides.md```
]
-->

.debug[[shared/about-slides.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/about-slides.md)]

---

class: extra-details

## Extra details

- This slide has a little magnifying glass in the top left corner.

- This magnifying glass indicates slides that provide extra details.

- Feel free to skip them if:

  - you are in a hurry;

  - you are new to this and want to avoid cognitive overload;

  - you want only the most essential information.

- You can always come back to them another time, they will be waiting for you ☺

.debug[[shared/about-slides.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/about-slides.md)]

---

name: toc-chapter-1

## Chapter 1

- [Pre-requirements](#toc-pre-requis)

- [Our sample application](#toc-notre-application-de-dmo)

- [Kubernetes concepts](#toc-concepts-kubernetes)

- [Declarative vs imperative](#toc-dclaratif-vs-impratif)

- [Kubernetes network model](#toc-modle-rseau-de-kubernetes)

.debug[(auto-generated TOC)]

---

name: toc-chapter-2

## Chapter 2

- [First contact with `kubectl`](#toc-premier-contact-avec-kubectl)

- [Setting up Kubernetes](#toc-installer-kubernetes)

- [Running our first containers on Kubernetes](#toc-lancer-nos-premiers-conteneurs-sur-kubernetes)

- [Exposing containers](#toc-exposer-des-conteneurs)

.debug[(auto-generated TOC)]

---

name: toc-chapter-3

## Chapter 3

- [Shipping images with a registry](#toc-shipping-images-with-a-registry)

- [Running our
  application on Kubernetes](#toc-running-our-application-on-kubernetes)

- [Accessing the API with `kubectl proxy`](#toc-accessing-the-api-with-kubectl-proxy)

- [Controlling the cluster remotely](#toc-controlling-the-cluster-remotely)

- [Accessing internal services](#toc-accessing-internal-services)

- [The Kubernetes dashboard](#toc-the-kubernetes-dashboard)

- [Security implications of `kubectl apply`](#toc-security-implications-of-kubectl-apply)

- [Scaling our demo app](#toc-scaling-our-demo-app)

.debug[(auto-generated TOC)]

---

name: toc-chapter-4

## Chapter 4

- [Daemon sets](#toc-daemon-sets)

- [Labels and selectors](#toc-labels-and-selectors)

- [Rolling updates](#toc-rolling-updates)

- [Namespaces](#toc-namespaces)

- [Kustomize](#toc-kustomize)

.debug[(auto-generated TOC)]

---

name: toc-chapter-5

## Chapter 5

- [Healthchecks](#toc-healthchecks)

- [Accessing logs from the CLI](#toc-accessing-logs-from-the-cli)

- [Centralized logging](#toc-centralized-logging)

- [Authentication and authorization](#toc-authentication-and-authorization)

- [The CSR API](#toc-the-csr-api)

- [Pod Security Policies](#toc-pod-security-policies)

.debug[(auto-generated TOC)]

---

name: toc-chapter-6

## Chapter 6

- [Exposing HTTP services with Ingress resources](#toc-exposing-http-services-with-ingress-resources)

- [Collecting metrics with Prometheus](#toc-collecting-metrics-with-prometheus)

.debug[(auto-generated TOC)]

---

name: toc-chapter-7

## Chapter 7

- [Volumes](#toc-volumes)

- [Managing configuration](#toc-managing-configuration)

.debug[(auto-generated TOC)]

---

name: toc-chapter-8

## Chapter 8

- [Stateful sets](#toc-stateful-sets)

- [Running a Consul cluster](#toc-running-a-consul-cluster)

- [Local Persistent Volumes](#toc-local-persistent-volumes)

- [Static pods](#toc-static-pods)

.debug[(auto-generated TOC)]

---

name: toc-chapter-9

## Chapter 9

- [Next steps](#toc-next-steps)

- [Links and resources](#toc-links-and-resources)

.debug[(auto-generated TOC)]
.debug[[shared/toc.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/toc.md)]

---

class: pic

.interstitial[]

---

name: toc-pre-requis
class: title

Pre-requirements

.nav[
[Previous section](#toc-)
|
[Back to table of contents](#toc-chapter-1)
|
[Next section](#toc-notre-application-de-dmo)
]

.debug[(automatically generated title slide)]

---

# Pre-requirements

- Be comfortable with the UNIX command line

  - navigating directories

  - editing files

  - a little bit of bash-fu (environment variables, loops)

- Some Docker knowledge

  - `docker run`, `docker ps`, `docker build`

  - ideally, you know how to write a Dockerfile and build it
    <br/>
    (even if it's a `FROM` line and a couple of `RUN` commands)

- It's totally OK if you are not a Docker expert!

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: title

*Tell me and I forget.*
<br/>
*Teach me and I remember.*
<br/>
*Involve me and I learn.*

Misattributed to Benjamin Franklin

[(More likely inspired by the Chinese Confucian philosopher Xunzi)](https://www.barrypopik.com/index.php/new_york_city/entry/tell_me_and_i_forget_teach_me_and_i_may_remember_involve_me_and_i_will_lear/)

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

## Hands-on sections

- This workshop is entirely hands-on

- We are going to build, ship, and run containers!

- You are invited to reproduce all the demos

- Hands-on sections are clearly identified, like the gray rectangle below

.exercise[

- This is the stuff you're supposed to do!

- Go to http://container.training/ to view these slides

- Join the chat room: In person!
<!-- ```open http://container.training/``` -->

]

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: in-person

## Where are we going to run our containers?

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: in-person, pic

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: in-person

## You get a cluster of cloud VMs

- Each person gets a private cluster of cloud VMs (not shared with anybody else)

- The VMs will remain up for the whole duration of the workshop

- You should have a little card with login+password+IP addresses

- You can automatically SSH from one VM to another

- The nodes have aliases: `node1`, `node2`, etc.

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: in-person

## Why don't we run containers locally?

- Installing this tooling can be hard on some machines

  (32-bit CPU or OS... Laptops without administrator access, etc.)

- *"The whole team downloaded all these container images over the WiFi!
  <br/>... and it all went well"* (literally no-one, ever)

- All you need is a computer (or even a tablet), with:

  - an internet connection

  - a web browser

  - an SSH client

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: in-person

## SSH clients

- On Linux, OS X, FreeBSD...
  you are probably all set

- On Windows, get one of these:

  - [putty](http://www.putty.org/)
  - Microsoft [Win32 OpenSSH](https://github.com/PowerShell/Win32-OpenSSH/wiki/Install-Win32-OpenSSH)
  - [Git BASH](https://git-for-windows.github.io/)
  - [MobaXterm](http://mobaxterm.mobatek.net/)

- On Android, [JuiceSSH](https://juicessh.com/)
  ([Play Store](https://play.google.com/store/apps/details?id=com.sonelli.juicessh))
  works pretty well

- Nice-to-have: [Mosh](https://mosh.org/) instead of SSH, if your internet connection tends to lose packets

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: in-person, extra-details

## What is this Mosh thing?

*You don't have to use Mosh or even know about it to follow along.
<br/>
We're just telling you about it because some of us think it's cool!*

- Mosh is "the mobile shell"

- It is essentially SSH over UDP, with roaming features

- It retransmits packets quickly, so it works great even on lossy connections

  (Like hotel or conference WiFi)

- It has intelligent local echo, so it works great even in high-latency connections

  (Like hotel or conference WiFi)

- It supports transparent roaming when your client IP address changes

  (Like when you hop from hotel to conference WiFi)

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: in-person, extra-details

## Using Mosh

- To install it: `(apt|yum|brew) install mosh`

- It has been pre-installed on the VMs that we are using

- To connect to a remote machine: `mosh user@host`

  (It is going to establish an SSH connection, then hand off to UDP)

- It requires UDP ports to be open

  (By default, it uses a UDP port between 60000 and 61000)

.debug[[shared/prereqs.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/prereqs.md)]

---

class: in-person

## Connecting to our lab environment

.exercise[

- Log into the first VM (`node1`) with your SSH client

<!--
```bash
for N in $(awk '/\Wnode/{print $2}' /etc/hosts); do
  ssh -o StrictHostKeyChecking=no $N true
done
```

```bash
if which kubectl; then
  kubectl get deploy,ds -o name | xargs -rn1 kubectl delete
  kubectl get all -o name | grep -v service/kubernetes | xargs -rn1 kubectl delete --ignore-not-found=true
  kubectl -n kube-system get deploy,svc -o name | grep -v dns | xargs -rn1 kubectl -n kube-system delete
fi
```
-->

- Check that you can SSH to `node2` without a password:
  ```bash
  ssh node2
  ```

- Type `exit` or `^D` to come back to `node1`

<!-- ```bash exit``` -->

]

If anything goes wrong, ask for help!

.debug[[shared/connecting.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/connecting.md)]

---

## Doing or re-doing the workshop on your own?

- Use something like
  [Play-With-Docker](https://play-with-docker.com/) or
  [Play-With-Kubernetes](https://training.play-with-kubernetes.com/)

  Zero setup effort; but environments are short-lived and
  might have limited resources

- Create your own cluster (local or cloud VMs)

  Small setup effort; small cost; flexible environments

- Create a bunch of clusters for you and your friends
  ([instructions](https://github.com/jpetazzo/container.training/tree/master/prepare-vms))

  Bigger setup effort; ideal for group training

.debug[[shared/connecting.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/connecting.md)]

---

class: self-paced

## Get your own Docker nodes

- If you already have some Docker nodes: great!

- If not: let's get some thanks to Play-With-Docker

.exercise[

- Go to http://www.play-with-docker.com/

- Log in

- Create your first node

<!-- ```open http://www.play-with-docker.com/``` -->

]

You will need a Docker ID to use Play-With-Docker.

(Creating a Docker ID is free.)
.debug[[shared/connecting.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/connecting.md)]

---

## We will (mostly) work with node1

*These remarks apply only when using multiple nodes, of course.*

- Unless instructed otherwise, **all commands must be run from the first VM, `node1`**

- We will only check out the code on `node1`

- During normal operations, we do not need access to the other nodes

- If we had to troubleshoot an issue, we would use a combination of:

  - SSH (to access system logs, daemon status, etc.)

  - the Docker API (to check running containers and the state of the container engine)

.debug[[shared/connecting.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/connecting.md)]

---

## Terminals

Once in a while, the instructions will say:
<br/>"Open a new terminal."

There are multiple ways to do this:

- create a new window or tab on your machine, and SSH into the VM;

- use screen or tmux on the VM and open a new window from there.

You are welcome to use the method that you feel the most comfortable with.

.debug[[shared/connecting.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/connecting.md)]

---

## Tmux cheatsheet

[Tmux](https://en.wikipedia.org/wiki/Tmux) is a terminal multiplexer like `screen`.

*You don't have to use it or even know about it to follow along.
<br/>
But some of us like to use it to switch between terminals.
<br/>
It has been preinstalled on your workshop nodes.*

- Ctrl-b c → creates a new window
- Ctrl-b n → go to next window
- Ctrl-b p → go to previous window
- Ctrl-b " → split window top/bottom
- Ctrl-b % → split window left/right
- Ctrl-b Alt-1 → rearrange windows in columns
- Ctrl-b Alt-2 → rearrange windows in rows
- Ctrl-b arrows → navigate to other windows
- Ctrl-b d → detach session
- tmux attach → reattach to session

.debug[[shared/connecting.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/connecting.md)]

---

## Installed versions

- Kubernetes 1.14.3
- Docker Engine 18.09.6
- Docker Compose 1.21.1

<!-- ##VERSION## -->

.exercise[

- Check all installed versions:
  ```bash
  kubectl version
  docker version
  docker-compose -v
  ```

]

.debug[[k8s/versions-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/versions-k8s.md)]

---

class: extra-details

## Kubernetes and Docker compatibility

- Kubernetes 1.13.x is only validated with Docker Engine versions [up to 18.06](https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.13.md#external-dependencies)

- Kubernetes 1.14 is validated with Docker Engine versions [up to 18.09](https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.14.md#external-dependencies)
  <br/>
  (the latest stable version when Kubernetes 1.14 was released)

- Are we living dangerously by running a "too recent" Docker Engine?

--

class: extra-details

- Not at all!

- "Validated" = passes very extensive (and expensive) continuous integration testing

- The Docker API is versioned, and offers strong backward compatibility.
  (If a client "speaks" API v1.25, the Docker Engine will keep behaving the same way)

.debug[[k8s/versions-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/versions-k8s.md)]

---

class: pic

.interstitial[]

---

name: toc-notre-application-de-dmo
class: title

Our sample application

.nav[
[Previous section](#toc-pre-requis)
|
[Back to table of contents](#toc-chapter-1)
|
[Next section](#toc-concepts-kubernetes)
]

.debug[(automatically generated title slide)]

---

# Our sample application

- We will clone the GitHub repository onto our `node1`

- The repository also contains the scripts and tools that we will use throughout the workshop.

.exercise[

<!--
```bash
cd ~
if [ -d container.training ]; then
  mv container.training container.training.$RANDOM
fi
```
-->

- Clone the repository on `node1`:
  ```bash
  git clone https://github.com/jpetazzo/container.training
  ```

]

(You can also fork the repository on GitHub and clone your fork if you prefer.)

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## Downloading and running the application

Let's start it before we dive in, as downloading can take a little time...

.exercise[

- Go to the `dockercoins` directory in the cloned repository:
  ```bash
  cd ~/container.training/dockercoins
  ```

- Use Compose to build and run all containers:
  ```bash
  docker-compose up
  ```

<!--
```longwait units of work done```
-->

]

Compose tells Docker to build all container images (pulling
the corresponding base images), then starts all containers,
and displays the aggregated logs.

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## What is this application?

--

- It is a DockerCoin miner!
  .emoji[💰🐳📦🚢]

--

- No, you can't buy coffee with DockerCoins

--

- How DockerCoins works:

  - generate a few random bytes

  - hash these bytes

  - increment a counter (to keep track of speed)

  - repeat forever!

--

- DockerCoins is *not* a cryptocurrency

  (the only common points are "randomness", "hashing", and "coins" in the name) </lol>

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## DockerCoins in the microservices era

- DockerCoins is made of 5 services:

  - `rng` = a web service generating random bytes

  - `hasher` = a web service computing a hash of the POSTed data

  - `worker` = a background process using `rng` and `hasher`

  - `webui` = a web interface to watch progress

  - `redis` = a data store (holds a counter updated by `worker`)

- These 5 services are visible in the application's Compose file,
  [docker-compose.yml](https://github.com/jpetazzo/container.training/blob/master/dockercoins/docker-compose.yml)

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## How DockerCoins works

- `worker` invokes the `rng` web service to generate a few random bytes

- `worker` invokes the `hasher` web service to hash these bytes

- `worker` repeats these 2 steps in an infinite loop

- every second, `worker` writes to `redis` to indicate how many loops were done

- `webui` queries `redis`, then computes and exposes the "hashing speed" in our browser

*(See the diagram on the next slide!)*

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

class: pic

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## Service discovery in container-land

- How does each service find out the address of the other ones?

--

- We do not hard-code IP addresses in the code.

- We do not hard-code FQDNs in the code, either.

- We just connect to a service name, and container-magic does the rest

  (By container-magic, we mean "a crafty, dynamic, embedded DNS server")

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## Example in `worker/worker.py`

```python
# Excerpt only: the backticks mark the service names highlighted on the slide.
redis = Redis("`redis`")


def get_random_bytes():
    r = requests.get("http://`rng`/32")
    return r.content


def hash_bytes(data):
    r = requests.post("http://`hasher`/",
                      data=data,
                      headers={"Content-Type": "application/octet-stream"})
    # (the rest of the function is elided here; see the full source below)
```

(Full source code available [here](https://github.com/jpetazzo/container.training/blob/8279a3bce9398f7c1a53bdd95187c53eda4e6435/dockercoins/worker/worker.py#L17))

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

class: extra-details

## Links, naming, and service discovery

- Containers can have network aliases (resolved through DNS)

- Compose file version 2+ makes each container reachable through its service name

- Compose file version 1 required a "links" section

- Network aliases are automatically prefixed with a namespace

  - you can have multiple applications declaring a service named `database`

  - containers in the blue app will reach `database` through the IP of the blue database

  - containers in the green app will reach `database` through the IP of the green database

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## Show me the code!
- You can open the GitHub repository with all the materials of this workshop:
  <br/>https://github.com/jpetazzo/container.training

- The application is in the [dockercoins](https://github.com/jpetazzo/container.training/tree/master/dockercoins)
  subdirectory

- The Compose file ([docker-compose.yml](https://github.com/jpetazzo/container.training/blob/master/dockercoins/docker-compose.yml))
  lists all 5 services

- `redis` uses an official image from the Docker Hub

- `hasher`, `rng`, `worker`, `webui` are each built from a Dockerfile

- Each service's Dockerfile and source code are stored in its own directory

  (`hasher` is in the [hasher](https://github.com/jpetazzo/container.training/blob/master/dockercoins/hasher/) directory,
  `rng` is in the [rng](https://github.com/jpetazzo/container.training/blob/master/dockercoins/rng/) directory, etc.)

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

class: extra-details

## Compose file format version

*This is relevant only if you have used Compose before 2016...*

- Compose 1.6 introduced support for a new Compose file format (aka "v2")

- Services are no longer at the top level, but under a `services` section.

- There has to be a `version` key at the top of the file, with the value `"2"` (the string, not the number)

- Containers are placed on a dedicated network, making links unnecessary

- There are other minor differences, but upgrading is easy and fairly straightforward.
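The differences listed above can be sketched in a few lines of Python. This is only an illustration, not an official migration tool: the helper name `upgrade_compose_v1_to_v2` and the sample services are hypothetical, the Compose file is assumed to be already loaded into a dictionary, and `links` are assumed to have been used for name resolution only.

```python
import copy


def upgrade_compose_v1_to_v2(v1_doc):
    """Return a v2-style Compose document built from a v1-style one.

    Hypothetical helper, for illustration only (not part of Compose).
    """
    services = copy.deepcopy(v1_doc)  # leave the caller's data untouched
    for definition in services.values():
        # Assumption: links were only used for name resolution. In v2,
        # services reach each other by service name on a dedicated
        # network, so the section is no longer needed.
        definition.pop("links", None)
    # "version" must be the string "2", not the number 2.
    return {"version": "2", "services": services}


# Made-up v1 document: services sit at the top level.
v1 = {
    "worker": {"build": "worker", "links": ["rng", "hasher", "redis"]},
    "rng": {"build": "rng"},
    "hasher": {"build": "hasher"},
    "redis": {"image": "redis"},
}
v2 = upgrade_compose_v1_to_v2(v1)
print(v2["version"], sorted(v2["services"]))
```

In a real file the same change is made by hand: indent the services under `services:` and add `version: "2"` at the top.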
.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## Our application at work

- On the left-hand side, the "rainbow strip" shows the container names

- On the right-hand side, we see the standard output of our containers

- We can see the `worker` service making requests to `rng` and `hasher`

- For `rng` and `hasher`, we can read their HTTP access logs

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## Connecting to the web UI

- "Logs are exciting and fun!" (No-one, ever)

- The `webui` container exposes a web dashboard; let's have a look.

.exercise[

- With a web browser, connect to `node1` on port 8000

- Remember: the `nodeX` aliases are valid only on the nodes themselves.

- In your browser, you need to enter the IP address of your node.

<!-- ```open http://node1:8000``` -->

]

A drawing area should show up, and after a few seconds, a blue
graph will appear.

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

class: self-paced, extra-details

## If the graph doesn't load

If all you see is a `Page not found` error, it might be because your
Docker Engine is running on a different machine. This can be the case if:

- you are using Docker Toolbox

- you are using a VM (local or remote) created with Docker Machine

- you are controlling a remote Docker Engine

When you run DockerCoins in development mode, the web UI static files
are mapped into the container using a volume. Alas, volumes only work
in a local environment, or when using Docker Desktop.

How to fix this?
Stop the app with `^C`, edit `dockercoins.yml`, comment out the `volumes` section, and try again.

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

class: extra-details

## Why does the speed seem irregular?

- It *looks like* the speed is approximately 4 hashes/second.

- Or more precisely: 4 hashes/second, with regular dips down to zero

- Why?

--

class: extra-details

- The app actually has a constant, steady speed of 3.33 hashes/second.
  <br/>
  (which corresponds to 1 hash every 0.3 seconds, for *reasons*)

- Yes, and?

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

class: extra-details

## The reason why this graph is *not awesome*

- The worker doesn't update the counter after every loop, but at most once per second.

- The speed is computed by the browser, which checks the counter about once per second.

- Between two consecutive updates, the counter will increase either by 4, or by 0 (zero).

- The perceived speed will therefore be 4 - 4 - 0 - 4 - 4 - 0, etc.

- What can we conclude from this?

--

class: extra-details

- "I am utterly unable to write good frontend code" 😀 (Jérôme)

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## Stopping our application

- If we interrupt Compose (with `^C`), it will politely ask the Docker Engine to stop the app

- The Docker Engine will send a `TERM` signal to the containers

- If the containers do not exit quickly enough, the Engine sends a `KILL` signal

.exercise[

- Stop the application by hitting `^C`

<!--
```keys ^C```
-->

]

--

Some containers exit immediately, others take longer.

The containers that do not handle `SIGTERM` end up being killed after 10 seconds.
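To make the `TERM` then `KILL` sequence more concrete, here is a minimal Python sketch of a process that handles `SIGTERM` and leaves its loop cleanly instead of waiting to be killed. The names `GracefulStop` and `run_worker`, and the self-sent signal that stands in for the Docker Engine, are assumptions made for this illustration; they are not taken from DockerCoins.

```python
import os
import signal


class GracefulStop:
    """Remember that SIGTERM was received (hypothetical helper)."""

    def __init__(self):
        self.requested = False
        # Must be called from the main thread of the process.
        signal.signal(signal.SIGTERM, self._on_term)

    def _on_term(self, signum, frame):
        self.requested = True


def run_worker(stop, max_loops=1000):
    """Loop until a stop is requested; return the number of loops done."""
    loops = 0
    while not stop.requested and loops < max_loops:
        loops += 1
        if loops == 3:
            # Stand-in for the Docker Engine sending TERM to this process.
            os.kill(os.getpid(), signal.SIGTERM)
    return loops


stop = GracefulStop()
loops_done = run_worker(stop)
print("stopped cleanly after", loops_done, "loops")
```

A process written this way gets a chance to flush its state and exit before the timeout expires; one that ignores the signal is simply killed.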
If we are really impatient, we can hit `^C` a second time!

.debug[[shared/sampleapp.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/sampleapp.md)]

---

## Clean up

- Before moving on, let's remove all those containers.

.exercise[

- Tell Compose to remove everything:
  ```bash
  docker-compose down
  ```

]

.debug[[shared/composedown.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/composedown.md)]

---

class: pic

.interstitial[]

---

name: toc-concepts-kubernetes
class: title

Kubernetes concepts

.nav[
[Previous section](#toc-notre-application-de-dmo)
|
[Back to table of contents](#toc-chapter-1)
|
[Next section](#toc-dclaratif-vs-impratif)
]

.debug[(automatically generated title slide)]

---

# Kubernetes concepts

- Kubernetes is a container management system

- It runs and manages containerized applications on a cluster

--

- What does that really mean?

.debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)]

---

## Basic things we can ask Kubernetes to do

--

- Start 5 containers using image `atseashop/api:v1.3`

--

- Place an internal load balancer in front of these containers

--

- Start 10 containers using image `atseashop/webfront:v1.3`

--

- Place a public load balancer in front of these containers

--

- It's Black Friday (or Christmas!), traffic spikes: grow our cluster and add containers

--

- New release!
  Replace the containers with the new image `atseashop/webfront:v1.4`

--

- Keep processing requests during the upgrade; update my containers one at a time

.debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)]

---

## Other things that Kubernetes can do for us

- Basic autoscaling

- Blue/green deployment, canary deployment

- Long-running services, but also batch jobs

- Overcommit our cluster and *evict* low-priority jobs

- Run services with *persistent* data (databases, etc.)

- Fine-grained access control, defining *which* action is allowed *for whom* on *which* resources.

- Integrating third-party services (*service catalog*)

- Automating complex tasks (*operators*)

.debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)]

---

## Kubernetes architecture

.debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)]

---

class: pic

.debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)]

---

## Kubernetes architecture

- Ha ha ha ha

- OK, I just wanted to scare you, it's simpler than that ❤️

.debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)]

---

class: pic

.debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)]

---

## Credits

- The first diagram is a Kubernetes cluster with storage backed by multi-path iSCSI

  (Courtesy of [Yongbok Kim](https://www.yongbok.net/blog/))

- The second one is a simplified representation of a Kubernetes cluster

  (Courtesy of [Imesh
Gunaratne](https://medium.com/containermind/a-reference-architecture-for-deploying-wso2-middleware-on-kubernetes-d4dee7601e8e)) .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- ## Architecture de Kubernetes: les _nodes_ - Les _nodes_ qui font tourner nos conteneurs ont aussi une collection de services: - un moteur de conteneurs (typiquement Docker) - kubelet (l'agent de _node_) - kube-proxy (un composant réseau nécessaire mais pas suffisant) - Les _nodes_ étaient précédemment appelées des "minions" (On peut encore rencontrer ce terme dans d'anciens articles ou documentation) .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- ## Architecture Kubernetes: le plan de contrôle - La logique de Kubernetes (ses "méninges") est une collection de services: - Le serveur API (notre point d'entrée pour toute chose!) - des services principaux comme l'ordonnanceur et le contrôleur - `etcd` (une base clé-valeur hautement disponible; la "base de données" de Kubernetes) - Ensemble, ces services forment le plan de contrôle de notre cluster - Le plan de contrôle est aussi appelé le _"master"_ .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- class: pic  .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- class: extra-details ## Plan de contrôle sur des _nodes_ spéciales - Il est commun de réserver une _node_ dédiée au plan de contrôle (Excepté pour les cluster de développement à node unique, comme avec minikube) - Cette _node_ est alors appelée un "_master_" (Oui, c'est ambigu: est-ce que le "master" est une _node_, ou tout le plan de contrôle?) 
- Les applis normales sont interdites de tourner sur cette _node_ (En utilisant un mécanisme appelé ["taints"](https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/)) - Pour de la haute dispo, chaque service du plan de contrôle doit être résilient - Le plan de contrôle est alors répliqué sur de multiples noeuds (On parle alors d'installation "_multi-master_") .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- class: extra-details ## Lancer le plan de contrôle sans conteneurs - Les services du plan de contrôle peuvent tourner avec ou sans conteneurs - Par exemple: puisque `etcd` est un service critique, certains le déploient directement sur un cluster dédié (sans conteneurs) (C'est illustré dans le premier schéma "super compliqué") - Dans certaines offres commerciales Kubernetes (par ex. AKS, GKE, EKS), le plan de contrôle est invisible (On "voit" juste un point d'entrée Kubernetes API) - Dans ce cas, il n'y a pas de _node_ "master" *Pour cette raison, il est plus précis de parler de "plan de contrôle" plutôt que de "master".* .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- class: extra-details ## Docker est-il obligatoire à tout prix? Non! -- - Par défaut, Kubernetes choisit le Docker Engine pour lancer les conteneurs - On pourrait utiliser `rkt` ("Rocket") par CoreOS - Ou exploiter d'autre moteurs via la *Container Runtime Interface* (comme CRI-O, ou containerd) .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- class: extra-details ## Devrait-on utiliser Docker? Oui! 
-- - Dans cet atelier, on lancera d'abord notre appli sur un seul noeud - On devra générer les images et les envoyer à la ronde - On pourrait se débrouiller sans Docker <br/> (et être diagnostiqué du syndrome NIH¹) - Docker est à ce jour le moteur de conteneurs le plus stable <br/> (mais les alternatives mûrissent rapidement) .footnote[¹[Not Invented Here](https://en.wikipedia.org/wiki/Not_invented_here)] .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- class: extra-details ## Devrait-on utiliser Docker? - Sur nos environnements de développement, les pipelines CI ... : *Oui, très certainement* - Sur nos serveurs de production: *Oui (pour aujourd'hui)* *Probablement pas (dans le futur)* .footnote[Pour plus d'infos sur CRI [sur le blog Kubernetes](https://kubernetes.io/blog/2016/12/container-runtime-interface-cri-in-kubernetes)] .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- ## Interagir avec Kubernetes - Le dialogue avec Kubernetes s'effectue via une API RESTful, la plupart du temps. - L'API Kubernetes définit un tas d'objets appelés *resources* - Ces ressources sont organisées par type, ou `Kind` (dans l'API) - Elle permet de déclarer, lire, modifier et supprimer les *resources* - Quelques types de ressources communs: - _node_ (une machine - physique ou virtuelle - de notre cluster) - _pod_ (groupe de conteneurs lancés ensemble sur une _node_) - _service_ (point d'entrée stable du réseau pour se connecter à un ou plusieurs conteneurs) Et bien plus! 
- We can view the complete list with `kubectl api-resources` .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- class: pic  .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- ## Credits - The first diagram is courtesy of Lucas Käldström, in [this presentation](https://speakerdeck.com/luxas/kubeadm-cluster-creation-internals-from-self-hosting-to-upgradability-and-ha) - it's one of the best Kubernetes architecture diagrams available! - The second diagram is courtesy of Weave Works - a *pod* can have multiple containers working together - IP addresses are associated with *pods*, not with individual containers Both diagrams are used with permission from their authors. .debug[[k8s/concepts-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/concepts-k8s.md)] --- class: pic .interstitial[] --- name: toc-dclaratif-vs-impratif class: title Declarative vs imperative .nav[ [Previous section](#toc-concepts-kubernetes) | [Back to table of contents](#toc-chapter-1) | [Next section](#toc-modle-rseau-de-kubernetes) ] .debug[(automatically generated title slide)] --- # Declarative vs imperative - Our container orchestrator puts a very strong emphasis on being *declarative* - Declarative: *I would like a cup of tea.* - Imperative: *Boil some water. Pour it in a teapot. Add tea leaves. Steep for a while. Serve in a cup.* -- - Declarative seems simpler at first... -- - ... 
as long as we know how to brew tea .debug[[shared/declarative.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/declarative.md)] --- ## Declarative vs imperative - What declarative would really be: *I want a cup of tea, obtained by pouring an infusion¹ of tea leaves into a cup.* -- *¹An infusion is obtained by letting the object steep for a few minutes in hot water².* -- *²A hot liquid is obtained by pouring it into an appropriate container³ and setting it on a stove.* -- *³Ah, finally, containers! Something we know about. Let's get to work, shall we?* -- .footnote[Did you know there is an [ISO standard](https://fr.wikipedia.org/wiki/ISO_3103) specifying how to brew tea?] .debug[[shared/declarative.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/declarative.md)] --- ## Declarative vs imperative - Imperative systems: - simpler - if a task is interrupted, we have to restart it from scratch - Declarative systems: - if a task is interrupted (or if we show up to the party half-way through), we can figure out what is missing and do only what is necessary - we need to be able to *observe* the system - ... 
and compute a "diff" between *what is running right now* and *what we want* .debug[[shared/declarative.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/declarative.md)] --- ## Declarative vs imperative in Kubernetes - Virtually everything we run on Kubernetes is declared in a *spec* - All we can do is write a *spec* and push it to the API server (by declaring resources such as *Pod* or *Deployment*) - The API server validates that spec (and rejects it if it is invalid) - Then it stores it in etcd - A *controller* "notices" that spec and acts on it .debug[[k8s/declarative.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/declarative.md)] --- ## Reconciling state - Watch for the `spec` fields in the YAML files later! - The *spec* describes *how we want the thing to run* - Kubernetes will *reconcile* the current state with the *spec* <br>(technically, this is done by a number of *controllers*) - When we want to change a resource, we update its *spec* - Kubernetes will then *converge* that resource .debug[[k8s/declarative.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/declarative.md)] --- class: pic .interstitial[] --- name: toc-modle-rseau-de-kubernetes class: title Kubernetes network model .nav[ [Previous section](#toc-dclaratif-vs-impratif) | [Back to table of contents](#toc-chapter-1) | [Next section](#toc-premier-contact-avec-kubectl) ] .debug[(automatically generated title slide)] --- # Kubernetes network model - In a nutshell: *Our cluster (nodes and pods) is one big flat IP network.* -- - In detail: - all _nodes_ must be able to reach each other, without NAT - all _pods_ must be able to reach each other, without NAT - _pods_ and _nodes_ must be able to reach each other, without NAT - each 
_pod_ knows its own IP address (no NAT) - IP addresses are assigned by the network implementation (the plugin) - Kubernetes doesn't mandate any particular implementation .debug[[k8s/kubenet.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubenet.md)] --- ## Kubernetes network model: the good - Everything can reach everything - No address translation - No port translation - No new protocol - The network implementation can decide how to allocate addresses - IP addresses don't have to be "portable" from one node to another (for example, we can use one subnet per _node_ and a simple topology) - The specification is simple enough to allow many different implementations .debug[[k8s/kubenet.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubenet.md)] --- ## Kubernetes network model: the less good - Everything can reach everything - if we want security, we need to add network policies - the network implementation we choose needs to support them - There are literally dozens of implementations out there (no fewer than 15 are listed in the Kubernetes documentation) - _Pods_ have level 3 (IP) connectivity, but *services* are level 4 (TCP or UDP) (a service maps to a single TCP or UDP port; no port ranges or arbitrary IP packets) - `kube-proxy` is on the data path when we connect to a _pod_ or container, <br/>and it is not particularly fast (it relies on userland proxying or iptables) .debug[[k8s/kubenet.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubenet.md)] --- ## Kubernetes network model: in practice - The nodes we have at our disposal use [Weave](https://github.com/weaveworks/weave) - We don't particularly endorse Weave; it just "Works For Us" - Don't worry about the 
warning about `kube-proxy` performance - Unless you: - routinely saturate 10 Gbps network interfaces - count packet rates in millions per second - run high-traffic VOIP or gaming platforms - do weird things that involve millions of simultaneous connections <br/>(in which case you are already familiar with kernel tuning) - If necessary, there are alternatives to `kube-proxy`, such as [`kube-router`](https://www.kube-router.io) .debug[[k8s/kubenet.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubenet.md)] --- class: extra-details ## The CNI (_Container Network Interface_) - The CNI is a complete [specification](https://github.com/containernetworking/cni/blob/master/SPEC.md#network-configuration) for network _plugins_ - When a new _pod_ is created, Kubernetes delegates the network setup to CNI _plugins_ (this can be a single plugin, or a combination of plugins, each handling one task) - Typically, a CNI _plugin_ will: - allocate an IP address (by calling an IPAM _plugin_) - add a network interface to the _pod_'s network _namespace_ - configure the interface as well as the minimum required routes, etc. - Not all CNI _plugins_ are created equal (e.g. they don't all support network policies, which are required to isolate _pods_) .debug[[k8s/kubenet.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubenet.md)] --- class: extra-details ## Multiple moving parts - The "pod-to-pod network" or "pod network": - provides communication between pods and nodes - is generally implemented with CNI plugins - The "pod-to-service network": - provides internal communication and load balancing - is generally implemented with kube-proxy (or e.g. 
kube-router) - _Network policies_ : - jouent le rôle de firewall et de couche d'isolation - peuvent être livrées avec le "réseau pod" ou fournit par un autre composant .debug[[k8s/kubenet.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubenet.md)] --- class: extra-details ## Encore plus de cibles mouvantes - Le trafic entrant peut être géré par plusieurs composants: - quelque chose comme kube-proxy ou kube-router (pour les services NodePort) - les _load balancers_ (idéalement, connectés au réseau _pod_) - En théorie, il est possible d'utiliser plusieurs réseaux _pods_ en parallèle (avec des "meta-plugins" comme CNI-Genie ou Multus) - Quelques solutions peuvent remplir plusieurs de ces rôles (par ex. kube-router peut être installé pour implémenter le réseau _pod_ et/ou les _network policies_ et/ou remplacer kube-proxy) .debug[[k8s/kubenet.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubenet.md)] --- class: pic .interstitial[] --- name: toc-premier-contact-avec-kubectl class: title Premier contact avec `kubectl` .nav[ [Section préc.](#toc-modle-rseau-de-kubernetes) | [Retour à la table des matières](#toc-chapter-2) | [Section suivante](#toc-installer-kubernetes) ] .debug[(automatically generated title slide)] --- # Premier contact avec `kubectl` - `kubectl` est (presque) le seul outil dont nous aurons besoin pour parler à Kubernetes - C'est un outil en ligne de commande très riche, autour de l'API Kubernetes (Tout ce qu'on peut faire avec `kubectl`, est directement exécutable via l'API) - Sur nos machines, on trouvera un fichier `~/.kube/config` avec: - l'adresse de l'API Kubernetes - le chemin vers nos certificats TLS d'identification - On peut aussi utiliser l'option `--kubeconfig` pour forcer un fichier de config - Ou passer directement `--server`, `--user`, etc. 
- `kubectl` se prononce "Cube Cé Té Elle", "Cube coeuteule", "Cube coeudeule" .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## `kubectl get` - Jetons un oeil aux ressources `Node` avec `kubectl get`! .exercise[ - Examiner la composition de notre cluster: ```bash kubectl get node ``` - Ces commandes sont équivalentes: ```bash kubectl get no kubectl get node kubectl get nodes ``` ] .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## Obtenir un affichage version "machine" - `kubectl get` peut afficher du JSON, YAML ou un format personnalisé .exercise[ - Sortir plus d'info sur les nodes ```bash kubectl get nodes -o wide ``` - Récupérons du YAML: ```bash kubectl get no -o yaml ``` Ce bout de `kind: List` tout à la fin? C'est le type de notre résultat! ] .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## User et abuser de `kubectl` et `jq` - C'est super facile d'afficher ses propres rapports .exercise[ - Montrer la capacité de tous nos noeuds sous forme de flux d'objets JSON: ```bash kubectl get nodes -o json | jq ".items[] | {name:.metadata.name} + .status.capacity" ``` ] .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- class: extra-details ## Qu'est-ce qui tourne là-dessous? 
- `kubectl` has solid introspection facilities - We can list the available resource types by running `kubectl api-resources` <br/> (In Kubernetes 1.10 and earlier, the command was `kubectl get`) - We can view the definition of a resource type with: ```bash kubectl explain type ``` - We can view the definition of a field within a resource, for instance: ```bash kubectl explain node.spec ``` - Or get the full definition of all fields and sub-fields: ```bash kubectl explain node --recursive ``` .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- class: extra-details ## Introspection vs. documentation - We can access the same information by reading the [API documentation](https://kubernetes.io/docs/reference/#api-reference) - The documentation is usually easier to read, but: - it won't show custom types (like Custom Resource Definitions) - we need to make sure we look at the correct version - `kubectl api-resources` and `kubectl explain` perform *introspection* (they query the API server and fetch the exact type definitions) .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## Type names - The most common resources have up to three name forms: - singular (e.g. `node`, `service`, `deployment`) - plural (e.g. `nodes`, `services`, `deployments`) - short (e.g. 
`no`, `svc`, `deploy`) - Certaines ressources n'ont pas de nom court - `Endpoints` n'ont qu'une forme au pluriel (parce que même une seule ressource `Endpoints` est en fait une liste d'_endpoints_) .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## Détailler l'affichage - On peut taper `kubectl get -o yaml` pour un détail complet d'une ressource - Toutefois, le format YAML peut être à la fois trop verbeux et incomplet - Par exemple, `kubectl get node node1 -o yaml` est: - trop verbeux (par ex. la liste des images disponibles sur cette node) - incomplet (car on ne voit pas les pods qui y tournent) - difficile à lire pour un administrateur humain - Pour une vue complète, on peut utiliser `kubectl describe` en alternative. .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## `kubectl describe` - `kubectl describe` requiert un type de ressource et (en option) un nom de ressource - Il est possible de fournir un préfixe de nom de ressource (tous les objets contenant ce nom seront affichés) - `kubectl describe` va récupérer quelques infos de plus sur une ressource .exercise[ - Jeter un oeil aux infos de `node1` avec une de ces commandes: ```bash kubectl describe node/node1 kubectl describe node node1 ``` ] (On devrait voir un tas de pods du plan de contrôle) .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## Services - Un *service* est un point d'entrée stable pour se connecter à "quelque chose" (Dans la proposition initiale, on appelait ça un "portail") .exercise[ - Lister les services sur notre cluster avec une de ces commandes: ```bash kubectl get services kubectl get svc ``` ] -- Il y a déjà un service sur notre cluster: l'API Kubernetes elle-même. 
.debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## services *ClusterIP* - Un service `ClusterIP` est interne, disponible uniquement depuis le cluster - C'est utile pour faire l'introspection depuis l'intérieur de conteneurs. .exercise[ - Essayer de se connecter à l'API: ```bash curl -k https://`10.96.0.1` ``` - `-k` est spécifié pour désactiver la vérification de certificat - Attention à bien remplacer 10.96.0.1 avec l'IP CLUSTER affichée par `kubectl get svc` ] NB :sur Docker for Desktop, l'API n'est accessible que sur `https://localhost:6443/` -- L'erreur que vous voyez était attendue: l'API Kubernetes exige une identification. .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## Lister les conteneurs qui tournent - Les conteneurs existent à travers des *pods*. - Un _pod_ est un groupe de conteneurs: - qui tournent ensemble (sur le même noeud) - qui partagent des ressources (RAM, CPU; mais aussi réseau et volumes) .exercise[ - Lister les _pods_ de notre cluster: ```bash kubectl get pods ``` ] -- *Ce ne sont pas là les _pods_ que nous cherchons.* Mais où sont-ils alors?!? .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## Namespaces - Les espaces de nommage (_namespaces_) nous permettent de cloisonner des ressources. .exercise[ - Lister les _namespaces_ de notre cluster avec une de ces commandes: ```bash kubectl get namespaces kubectl get namespace kubectl get ns ``` ] -- *Vous savez quoi... Ce machin `kube-system` m'a l'air suspect.* *En fait, je suis plutôt sûr de l'avoir vu tout à l'heure, quand on a tapé:* `kubectl describe node node1` .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## Accéder aux _namespaces_ - Par défaut, `kubectl` utilise le _namespace_... 
`default` - We can see resources in all namespaces with `--all-namespaces` .exercise[ - List the _pods_ in all _namespaces_: ```bash kubectl get pods --all-namespaces ``` - Since Kubernetes 1.14, we can also use `-A` as a shorter version: ```bash kubectl get pods -A ``` ] *Here are our system pods!* .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## What are all these control plane _pods_? - `etcd` is our etcd server - `kube-apiserver` is the API server - `kube-controller-manager` and `kube-scheduler` are other control plane components - `coredns` provides DNS-based service discovery ([it replaces kube-dns as of 1.11](https://kubernetes.io/blog/2018/07/10/coredns-ga-for-kubernetes-cluster-dns/)) - `kube-proxy` runs on every _node_ and manages port mappings and the like - `weave` is the per-node component that manages the overlay network - the `READY` column shows how many containers are ready out of the total in each _pod_ (e.g. `1/1`) - the _pods_ whose name ends with `-node1` are the control plane components <br/> they are specifically "pinned" to the master node. 
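The two observations above (the `READY` column and the `-node1` suffix) can be checked mechanically. The following is a minimal sketch, not part of the original workshop: the pod listing is a hypothetical sample laid out like the output of `kubectl get pods -A`, and the function names `not_fully_ready` and `control_plane_pods` are made up for illustration. It assumes that `READY` is printed as `ready/total`.

```bash
#!/usr/bin/env bash
# Hypothetical sample, laid out like the columns of `kubectl get pods -A`.
# Pod names and values are placeholders, not output captured from a real cluster.
sample_listing='NAMESPACE     NAME                        READY   STATUS    RESTARTS   AGE
kube-system   coredns-aaaaaaaaaa-bbbbb    1/1     Running   0          10m
kube-system   etcd-node1                  1/1     Running   0          10m
kube-system   kube-apiserver-node1        1/1     Running   0          10m
kube-system   weave-net-ccccc             1/2     Running   0          10m'

# Print "name ready total" for each pod whose ready count differs
# from its total container count (third column, "ready/total").
not_fully_ready() {
  awk 'NR > 1 { split($3, r, "/"); if (r[1] != r[2]) print $2, r[1], r[2] }'
}

# Print the names of the pods whose name ends with "-node1"
# (the control plane pods pinned to the first node in this workshop).
control_plane_pods() {
  awk 'NR > 1 && $2 ~ /-node1$/ { print $2 }'
}

printf '%s\n' "$sample_listing" | not_fully_ready
printf '%s\n' "$sample_listing" | control_plane_pods
```

On a real cluster, the sample would be replaced by the actual listing, for instance `kubectl get pods -A | control_plane_pods`.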
.debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## Viser un autre _namespace_ - On peut aussi examiner un autre namespace (que `default`) .exercise[ - Lister uniquement les pods du namespace `kube-system`: ```bash kubectl get pods --namespace=kube-system kubectl get pods -n kube-system ``` ] .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- ## _Namespaces_ selon les commandes `kubectl` - On peut combiner `-n`/`--namespace` avec presque toute commande - Exemple: - `kubectl create --namespace=X` pour créer quelque chose dans le _namespace_ X - On peut utiliser `-A`/`--all-namespaces` avec la plupart des commandes qui manipulent plein d'objets à la fois - Exemples: - `kubectl delete` supprime des ressources à travers plusieurs _namespaces_ - `kubectl label` ajoute/supprime des labels à travers plusieurs _namespaces_ .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- class: extra-details ## Qu'en est-il de ce `kube-public`? .exercise[ - Lister les _pods_ dans le _namespace_ `kube-public`: ```bash kubectl -n kube-public get pods ``` ] Rien! 
`kube-public` is created by kubeadm and [used for security bootstrapping](https://kubernetes.io/blog/2017/01/stronger-foundation-for-creating-and-managing-kubernetes-clusters) .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- class: extra-details ## Exploring `kube-public` - The only interesting object in `kube-public` is a `ConfigMap` named `cluster-info` .exercise[ - List the ConfigMaps in the `kube-public` _namespace_: ```bash kubectl -n kube-public get configmaps ``` - Inspect `cluster-info`: ```bash kubectl -n kube-public get configmap cluster-info -o yaml ``` ] Note the `selfLink` URI: `/api/v1/namespaces/kube-public/configmaps/cluster-info` We might need it! .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- class: extra-details ## Accessing `cluster-info` - Earlier, when we queried the API server, we received a `Forbidden` response - But `cluster-info` is readable by everyone (even without authentication) .exercise[ - Retrieve `cluster-info`: ```bash curl -k https://10.96.0.1/api/v1/namespaces/kube-public/configmaps/cluster-info ``` ] - We were able to access `cluster-info` (without authentication) - It contains a `kubeconfig` file .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- class: extra-details ## Retrieving `kubeconfig` - We can easily extract the content of the `kubeconfig` file from this ConfigMap .exercise[ - Display the content of `kubeconfig`: ```bash curl -sk https://10.96.0.1/api/v1/namespaces/kube-public/configmaps/cluster-info \ | jq -r .data.kubeconfig ``` ] - This file contains the canonical address of the API server, and the public key of the CA - This file *does not* contain client keys or tokens - None of this is sensitive information, but it is essential for establishing a secure connection. 
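To make the three points above concrete, here is a small sketch that reads a `kubeconfig` text and checks what it contains. It is an illustration only: the sample text is a hypothetical stand-in shaped like the `kubeconfig` key of `cluster-info` (the address and CA data are placeholders), and the helper names `api_server_address` and `has_client_credentials` are made up for this example.

```bash
#!/usr/bin/env bash
# Hypothetical kubeconfig text, shaped like the `kubeconfig` key of the
# cluster-info ConfigMap. The server address and CA data are placeholders.
sample_kubeconfig='apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: PLACEHOLDER_BASE64_CA
    server: https://10.0.0.10:6443
  name: ""
contexts: []
current-context: ""
kind: Config
preferences: {}
users: []'

# Print the value of the first `server:` field read from standard input.
api_server_address() {
  awk '$1 == "server:" { print $2; exit }'
}

# Succeed if the kubeconfig read from standard input carries client
# credentials (client key, client certificate, or token); fail otherwise.
has_client_credentials() {
  grep -Eq '^[[:space:]]*(client-key|client-certificate|token)(-data)?:'
}

printf '%s\n' "$sample_kubeconfig" | api_server_address

if printf '%s\n' "$sample_kubeconfig" | has_client_credentials; then
  echo "client credentials present"
else
  echo "no client credentials"
fi
```

On a real cluster, the output of the `curl ... | jq -r .data.kubeconfig` pipeline from the exercise could be piped into either helper instead of the sample.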
.debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- class: extra-details ## Qu'en est-il de `kube-node-lease`? - Depuis Kubernetes 1.14, il y a un namespace `kube-node-lease` (ou dès la version 1.13 si la fonction NodeLease était activée) - Ce namespace contient un objet Lease par node - Un *Node lease* est une nouvelle manière d'implémenter les _heartbeat_ de _node_ (c'est-à-dire qu'une node va contacter le _master_ de temps à autre et dire "Je suis vivant!") - Pour plus de détails, voir [KEP-0009] ou la [doc de contrôleur de node] [KEP-0009]: https://github.com/kubernetes/enhancements/blob/master/keps/sig-node/0009-node-heartbeat.md [doc de contrôleur de node]: https://kubernetes.io/docs/concepts/architecture/nodes/#node-controller .debug[[k8s/kubectlget.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlget.md)] --- class: pic .interstitial[] --- name: toc-installer-kubernetes class: title Installer Kubernetes .nav[ [Section préc.](#toc-premier-contact-avec-kubectl) | [Retour à la table des matières](#toc-chapter-2) | [Section suivante](#toc-lancer-nos-premiers-conteneurs-sur-kubernetes) ] .debug[(automatically generated title slide)] --- # Installer Kubernetes - Comment avons-nous installé les clusters Kubernetes à qui on parle? -- <!-- ##VERSION## --> - On est passé par `kubeadm` sur des VMs fraîchement installées avec Ubuntu LTS 1. Installer Docker 2. Installer les paquets Kubernetes 3. Lancer `kubeadm init` sur la première node (c'est ce qui va déployer le plan de contrôle) 4. Installer Weave (la couche réseau _overlay_) <br/> (cette étape consiste en une seule commande `kubectl apply`; voir plus loin) 5. Lancer `kubeadm join` sur les autres _nodes_ (avec le jeton fourni par `kubeadm init`) 6. 
Copy the configuration file generated by `kubeadm init` - See the [VM setup README](https://github.com/jpetazzo/container.training/blob/master/prepare-vms/README.md) for more details. .debug[[k8s/setup-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/setup-k8s.md)] --- ## `kubeadm` drawbacks - Doesn't install Docker or any other container engine - Doesn't install an _overlay_ network - Doesn't set up multi-master (no high availability) -- (At least... not yet! It is, however, [experimental in version 1.12](https://kubernetes.io/docs/setup/independent/high-availability/).) -- "It's still twice as much work as setting up a Swarm cluster 😕" -- Jérôme .debug[[k8s/setup-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/setup-k8s.md)] --- ## Other deployment options - If you are on Azure: [AKS](https://azure.microsoft.com/services/kubernetes-service/) - If you are on Google Cloud: [GKE](https://cloud.google.com/kubernetes-engine/) - If you are on AWS: [EKS](https://aws.amazon.com/eks/), [eksctl](https://eksctl.io/) - Cloud-agnostic (AWS/DO/GCE (beta)/vSphere (alpha)): [kops](https://github.com/kubernetes/kops) - On your local machine: [minikube](https://kubernetes.io/docs/setup/minikube/), [kubespawn](https://github.com/kinvolk/kube-spawn), [Docker Desktop](https://docs.docker.com/docker-for-mac/kubernetes/) - If you have a specific deployment in mind: [kubicorn](https://github.com/kubicorn/kubicorn) Probably the closest thing to a multi-cloud/hybrid solution so far, but still under development. 
.debug[[k8s/setup-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/setup-k8s.md)] --- ## Encore plus d'options de déploiement - Si vous aimez Ansible: [kubespray](https://github.com/kubernetes-incubator/kubespray) - Si vous aimez Terraform: [typhoon](https://github.com/poseidon/typhoon) - Si vous aimez Terraform et Puppet: [tarmak](https://github.com/jetstack/tarmak) - Vous pouvez aussi apprendre à installer chaque composant manuellement, avec l'excellent tutoriel [Kubernetes The Hard Way](https://github.com/kelseyhightower/kubernetes-the-hard-way) *Kubernetes The Hard Way est optimisé pour l'apprentissage, ce qui implique de prendre les détours obligatoires à la compréhension de chaque étape nécessaire pour la construction d'un cluster Kubernetes.* - Il y a aussi nombre d'options commerciales disponibles! - Pour une liste plus complète, veuillez consulter la documentation Kubernetes: <br/> on y trouve un super guide pour [choisir la bonne piste](https://kubernetes.io/docs/setup/#production-environment) .debug[[k8s/setup-k8s.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/setup-k8s.md)] --- class: pic .interstitial[] --- name: toc-lancer-nos-premiers-conteneurs-sur-kubernetes class: title Lancer nos premiers conteneurs sur Kubernetes .nav[ [Section préc.](#toc-installer-kubernetes) | [Retour à la table des matières](#toc-chapter-2) | [Section suivante](#toc-exposer-des-conteneurs) ] .debug[(automatically generated title slide)] --- # Lancer nos premiers conteneurs sur Kubernetes - Commençons par le commencement: on ne lance pas "un" conteneur -- - On va lancer un _pod_, et dans ce _pod_, on fera tourner un seul conteneur -- - Dans ce conteneur, qui est dans le _pod_, nous allons lancer une simple commande `ping` - Puis nous allons démarrer plusieurs exemplaires du _pod_. 
.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Starting a simple pod with `kubectl run`

- We need to specify at least a *name* and the image we want to use

.exercise[

- Let's ping `1.1.1.1`, Cloudflare's [public DNS resolver](https://blog.cloudflare.com/announcing-1111/):
  ```bash
  kubectl run pingpong --image alpine ping 1.1.1.1
  ```

<!-- ```hide kubectl wait deploy/pingpong --for condition=available``` -->

]

--

(Starting with Kubernetes 1.12, we get a message telling us that `kubectl run` is deprecated. Let's ignore it for now.)

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Behind the scenes of `kubectl run`

- Let's look at the resources that were created by `kubectl run`

.exercise[

- List the most common resource types:
  ```bash
  kubectl get all
  ```

]

--

We should see something like the following:

- `deployment.apps/pingpong` (the *deployment* that we just created)
- `replicaset.apps/pingpong-xxxxxxxxxx` (a *replica set* created by that deployment)
- `pod/pingpong-xxxxxxxxxx-yyyyy` (a *pod* created by the replica set)

Note: as of 1.10.1, resource types are displayed in more detail.

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## What are these different things?
- A *deployment* is a high-level construct

  - allows scaling, rolling updates, rollbacks

  - multiple deployments can be used together to implement a [canary deployment](https://kubernetes.io/docs/concepts/cluster-administration/manage-deployment/#canary-deployments)

  - delegates pod management to *replica sets*

- A *replica set* is a low-level construct

  - makes sure that a given number of identical pods are running

  - allows scaling

  - rarely used directly

- A *replication controller* is the (deprecated) predecessor of the replica set

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Our `pingpong` deployment

- `kubectl run` created a *deployment*, `deployment.apps/pingpong`

```
NAME                       DESIRED   CURRENT   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/pingpong   1         1         1            1           10m
```

- That deployment created a *replica set*, `replicaset.apps/pingpong-xxxxxxxxxx`

```
NAME                                  DESIRED   CURRENT   READY   AGE
replicaset.apps/pingpong-7c8bbcd9bc   1         1         1       10m
```

- That replica set created a *pod*, `pod/pingpong-xxxxxxxxxx-yyyyy`

```
NAME                            READY   STATUS    RESTARTS   AGE
pod/pingpong-7c8bbcd9bc-6c9qz   1/1     Running   0          10m
```

- We'll see later how these objects work together for:

  - scaling, high availability, rolling updates

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Viewing container output

- Let's use the `kubectl logs` command

- We will pass either a *pod name*, or a *type/name*

  (E.g. if we specify a deployment or replica set, it will pick the first pod in it)

- Unless specified otherwise, it will only show logs of the first container in the pod

  (Good thing there's only one in ours!)
.exercise[

- View the result of our `ping` command:
  ```bash
  kubectl logs deploy/pingpong
  ```

]

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Streaming logs in real time

- Just like `docker logs`, `kubectl logs` supports convenient options:

  - `-f`/`--follow` to stream logs in real time (à la `tail -f`)

  - `--tail` to indicate how many lines we want to see (from the end)

  - `--since` to show only logs newer than a given duration (e.g. `--since 10m`)

.exercise[

- View the latest logs of our `ping` command:
  ```bash
  kubectl logs deploy/pingpong --tail 1 --follow
  ```

<!--
```wait seq=3```
```keys ^C```
-->

]

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Scaling our application

- We can create additional copies of our container (our pod, to be precise) with `kubectl scale`

.exercise[

- Scale our `pingpong` deployment:
  ```bash
  kubectl scale deploy/pingpong --replicas 3
  ```

- Note that this other command does exactly the same thing:
  ```bash
  kubectl scale deployment pingpong --replicas 3
  ```

]

Note: what if we tried to scale `replicaset.apps/pingpong-xxxxxxxxxx`?

We could! But the *deployment* would notice it right away, and scale it back to the initial level.

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Resilience

- The *deployment* `pingpong` watches its *replica set*

- The *replica set* ensures that the right number of *pods* are running

- What happens if pods disappear unexpectedly?
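
The "right number of pods" logic can be pictured as a comparison between a desired count and an observed count. The following is a conceptual sketch only, not how Kubernetes controllers are actually implemented; the function name `reconcile` and its output strings are made up for illustration.

```bash
#!/usr/bin/env bash
# Conceptual sketch: compare the desired replica count with the number of
# pods currently running, and print the action a controller would take.
# Usage: reconcile DESIRED CURRENT
reconcile() {
  local desired=$1 current=$2
  if [ "$current" -lt "$desired" ]; then
    echo "create $((desired - current)) pod(s)"
  elif [ "$current" -gt "$desired" ]; then
    echo "delete $((current - desired)) pod(s)"
  else
    echo "nothing to do"
  fi
}

reconcile 3 3   # steady state
reconcile 3 2   # one pod disappeared
reconcile 3 5   # two pods too many
```

In this simplified model, the comparison is repeated every time something changes, so a missing pod is replaced without any manual action.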
.exercise[

- In a separate window, list pods, and keep watching them:
  ```bash
  kubectl get pods -w
  ```

<!--
```wait Running```
```keys ^C```
```hide kubectl wait deploy pingpong --for condition=available```
```keys kubectl delete pod ping```
```copypaste pong-..........-.....```
-->

- Destroy a pod:
  ```
  kubectl delete pod pingpong-xxxxxxxxxx-yyyyy
  ```

]

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## What if we wanted something different?

- What if we wanted to start a "one-shot" container that *doesn't* get restarted?

- We could use `kubectl run --restart=OnFailure` or `kubectl run --restart=Never`

- These commands would create *jobs* or *pods* instead of *deployments*

- Under the hood, `kubectl run` invokes "generators" to create resource descriptions

- We could also write these resource descriptions ourselves (typically in YAML),
  <br/>and create them on the cluster with `kubectl apply -f` (as we will see later)

- With `kubectl run --schedule=...`, we can also create *cronjobs*

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## What about that deprecation warning?

- As we saw in the previous slides, `kubectl run` can do many things

- The exact type of resource that it creates is not obvious
- To make things more explicit, it is better to use `kubectl create`:

  - `kubectl create deployment` to create a deployment

  - `kubectl create job` to create a job

  - `kubectl create cronjob` to run a job periodically
    <br/>(since Kubernetes 1.14)

- Eventually, `kubectl run` will be used only to start one-shot pods

  (see https://github.com/kubernetes/kubernetes/pull/68132)

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Various ways of creating resources

- `kubectl run`

  - easy way to get started
  - versatile

- `kubectl create <resource>`

  - explicit, but lacks some features
  - can't create a CronJob before Kubernetes 1.14
  - can't pass command-line arguments to deployments

- `kubectl create -f foo.yaml` or `kubectl apply -f foo.yaml`

  - all features are available
  - requires writing YAML

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Viewing logs of multiple pods

- When we specify a deployment name, only the logs of a single pod are shown

- We can view the logs of multiple pods by specifying a *selector*

- A selector is a logic expression based on *labels*

- Conveniently, when we run `kubectl run somename`, the associated objects get a `run=somename` label

.exercise[

- View the last line of log from all pods with the `run=pingpong` label:
  ```bash
  kubectl logs -l run=pingpong --tail 1
  ```

]

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

### Streaming logs of multiple pods

- Can we stream the logs of all our `pingpong` pods?
.exercise[

- Combine the `-l` and `-f` flags:
  ```bash
  kubectl logs -l run=pingpong --tail 1 -f
  ```

<!--
```wait seq=```
```keys ^C```
-->

]

*Note: combining `-l` and `-f` is only possible since Kubernetes 1.14!*

*Let's try to understand why ...*

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

class: extra-details

### Streaming logs of multiple pods

- Let's see what happens if we try to stream the logs of more than 5 pods

.exercise[

- Scale up our deployment:
  ```bash
  kubectl scale deployment pingpong --replicas=8
  ```

- Stream the logs:
  ```bash
  kubectl logs -l run=pingpong --tail 1 -f
  ```

]

We should see a message like this one:
```
error: you are attempting to follow 8 log streams, but maximum allowed concurency is 5, use --max-log-requests to increase the limit
```

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

class: extra-details

## Why can't we stream the logs of many pods?

- `kubectl` opens one connection to the API server per pod

- For each pod, the API server opens one more connection to the corresponding kubelet

- If there are 1000 pods in our deployment, that's 1000 inbound + 1000 outbound connections on the API server

- This could easily overload the API server

- Before Kubernetes 1.14, it was decided *not* to allow multiple connections

- Starting with Kubernetes 1.14, it is allowed, but capped at 5 connections
  (this can be changed with `--max-log-requests`)

- For more details about the rationale, see [PR #67573](https://github.com/kubernetes/kubernetes/pull/67573)

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Shortcomings of `kubectl logs`

- We don't see which pod sent which log line

- If pods are restarted / replaced, the log stream stops

- If new pods are added, we don't see their logs

- To stream the logs of multiple pods, we need to write a selector

- Some external tools address these shortcomings

  (e.g.: [Stern](https://github.com/wercker/stern))

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

class: extra-details

## `kubectl logs -l ... --tail N`

- If we run this command with Kubernetes 1.12, it shows multiple lines

- This is a regression when `--tail` is used together with `-l`/`--selector`

- It always shows the last 10 lines of output for each container

  (instead of the number of lines specified on the command line)

- The problem was fixed in Kubernetes 1.13

*See [#70554](https://github.com/kubernetes/kubernetes/issues/70554) for details.*

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## Aren't we flooding 1.1.1.1?

- If you're wondering this, good question!

- Don't worry, though:

  *APNIC's research group held the IP addresses 1.1.1.1 and 1.0.0.1. While the addresses were valid, so many people had entered them into various random systems that they were continuously overwhelmed by a flood of garbage traffic. APNIC wanted to study this garbage traffic but any time they'd tried to announce the IPs, the flood would overwhelm any conventional network.*

  (Source: https://blog.cloudflare.com/announcing-1111/)

- It's very unlikely that our concerted pings manage to produce even a modest blip at Cloudflare's NOC!

.debug[[k8s/kubectlrun.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlrun.md)]

---

## 19,000 words

They say, "a picture is worth one thousand words."

The following 19 slides show what happens when we run:

```bash
kubectl run web --image=nginx --replicas=3
```

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.debug[[k8s/deploymentslideshow.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/deploymentslideshow.md)]

---

class: pic

.interstitial[]

---

name: toc-exposer-des-conteneurs
class: title

Exposing containers

.nav[
[Previous section](#toc-lancer-nos-premiers-conteneurs-sur-kubernetes)
|
[Back to table of contents](#toc-chapter-2)
|
[Next section](#toc-shipping-images-with-a-registry)
]

.debug[(automatically generated title
slide)]

---
# Exposing containers

- `kubectl expose` creates a *service* for existing pods

- A *service* is a stable address for a pod (or a group of pods)

- If we want to connect to our pods, we need to create a *service*

- Once the service is created, CoreDNS lets us reach it by name

  (i.e. after creating the service `hello`, the name `hello` will resolve to something)

- There are different types of services, detailed on the following slides:

  `ClusterIP`, `NodePort`, `LoadBalancer`, `ExternalName`

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

## Basic service types

- `ClusterIP` (default type)

  - a virtual IP address is allocated for the service (in an internal, private range)
  - this IP address is reachable only from within the cluster (nodes and pods)
  - our code can connect to the service using the original port number

- `NodePort`

  - a port is allocated for the service (by default, in the 30000-32767 range)
  - that port is made available *on all our nodes* and anybody can connect to it
  - our code must be changed to connect to that new port number

These service types are always available.

Under the hood: `kube-proxy` uses a userland proxy and a bunch of `iptables` rules.

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

## More service types

- `LoadBalancer`

  - an external load balancer is allocated for the service
  - the load balancer is configured accordingly
    <br/>(e.g.: a `NodePort` service is created, and the load balancer sends traffic to that port)
  - available only when the underlying infrastructure provides some kind of "load balancer as a service"
    <br/>(e.g. AWS, Azure, GCE, OpenStack...)
- `ExternalName`

  - the DNS entry managed by CoreDNS is just a `CNAME` record
  - no port, no IP address, nothing else is allocated

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

## Running containers with open ports

- Since `ping` doesn't have anything to connect to, we'll have to run something else

- We could use the official `nginx` image, but ...

  ... we wouldn't be able to tell the backends from each other!

- We are going to use `jpetazzo/httpenv`, a tiny HTTP server written in Go

- `jpetazzo/httpenv` listens on port 8888

- It serves its environment variables in JSON format

- The environment variables will include `HOSTNAME`, which will be the pod name

  (and will therefore be different on each backend)

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

## Creating a deployment for our HTTP server

- We *could* do `kubectl run httpenv --image=jpetazzo/httpenv` ...

- But since `kubectl run` is being deprecated, let's see how to use `kubectl create` instead
.exercise[

- In another window, watch the pods (to see when they are created):
  ```bash
  kubectl get pods -w
  ```

<!-- ```keys ^C``` -->

- Create a deployment for this very lightweight HTTP server:
  ```bash
  kubectl create deployment httpenv --image=jpetazzo/httpenv
  ```

- Scale it to 10 replicas:
  ```bash
  kubectl scale deployment httpenv --replicas=10
  ```

]

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

## Exposing our deployment

- We'll create a default `ClusterIP` service

.exercise[

- Expose the HTTP port of our server:
  ```bash
  kubectl expose deployment httpenv --port 8888
  ```

- Look up which IP addresses were allocated:
  ```bash
  kubectl get service
  ```

]

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

## Services are layer 4 constructs

- We can assign IP addresses to services, but they are still *layer 4*

  (i.e. a service is not an IP address; it's an IP address + protocol + port)

- This is caused by the current implementation of `kube-proxy`

  (it relies on mechanisms that don't support layer 3)

- As a result: you *have to* indicate the port number for your service

- Running services with arbitrary ports (or port ranges) requires hacks

  (e.g. host networking mode)

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

## Testing our service

- We will now send a few HTTP requests to our pods

.exercise[

- Obtain the IP address that was allocated for our service, *programmatically*:
  ```bash
  IP=$(kubectl get svc httpenv -o go-template --template '{{ .spec.clusterIP }}')
  ```

<!-- ```hide kubectl wait deploy httpenv --for condition=available``` -->

- Send a few requests:
  ```bash
  curl http://$IP:8888/
  ```

- Too much output? Filter it with `jq`:
  ```bash
  curl -s http://$IP:8888/ | jq .HOSTNAME
  ```

]

--

Try it a few times! Our requests are load balanced across multiple pods.

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

class: extra-details

## If we don't need a load balancer

- Sometimes, we want to access our services directly:

  - if we want to save a tiny bit of latency (typically less than 1ms)
  - if we need to connect over arbitrary ports (instead of a few fixed ones)
  - if we need to communicate over a protocol other than UDP or TCP
  - if we want to decide how to balance the requests client-side
  - ...

- In that case, we can use a "headless service"

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

class: extra-details

## Headless services

- A headless service is obtained by setting the `clusterIP` field to `None`

  (Either with `--cluster-ip=None`, or by providing a bit of YAML)

- Since there is no virtual IP address, there is no load balancer either

- CoreDNS will return the pods' IP addresses as multiple `A` records

- This gives us an easy way to discover all the replicas of a deployment

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

class: extra-details

## Services and endpoints

- A service has a number of "endpoints"

- Each endpoint is a host + port combination where the service is available

- The endpoints are maintained and updated automatically by Kubernetes

.exercise[

- Check the endpoints that Kubernetes has associated with our `httpenv` service:
  ```bash
  kubectl describe service httpenv
  ```

]

In the output, there will be a line starting with `Endpoints:`.
That line will list a bunch of addresses in `host:port` format.

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

class: extra-details

## Viewing endpoint details

- When we have many endpoints, our display commands truncate the list
  ```bash
  kubectl get endpoints
  ```

- If we want to see the full list, we can use one of the following commands:
  ```bash
  kubectl describe endpoints httpenv
  kubectl get endpoints httpenv -o yaml
  ```

- These commands will show us a list of IP addresses

- These IP addresses should match the addresses of the corresponding pods:
  ```bash
  kubectl get pods -l app=httpenv -o wide
  ```

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

class: extra-details

## `endpoints`, not `endpoint`

- `endpoints` is the only resource that cannot be written in the singular

```bash
$ kubectl get endpoint
error: the server doesn't have a resource type "endpoint"
```

- This is because the type itself is plural (unlike every other resource)

- There is no `endpoint` object: `type Endpoints struct`

- The type doesn't represent a single endpoint, but a list of endpoints

.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

## Exposing services to the outside world

- The default type (ClusterIP) only works for internal traffic

- If we want to accept external traffic, we can use one of these:

  - NodePort (expose a service on a TCP port between 30000 and 32767)

  - LoadBalancer (if our cloud provider supports it)

  - ExternalIP (use one node's external IP address)

  - Ingress (a special mechanism for HTTP services)

*We'll cover NodePorts and Ingresses in more detail later.*
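
As a small illustration of why a `NodePort` service is reached on a different port than the one the application listens on, the sketch below checks whether a port number falls inside the node port range. It assumes the default range of 30000-32767 (clusters can be configured with a different one), and the helper name `in_nodeport_range` is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed if PORT lies in the node port range.
# Assumed default range: 30000-32767; a cluster may be configured differently.
# Usage: in_nodeport_range PORT [MIN] [MAX]
in_nodeport_range() {
  local port=$1 min=${2:-30000} max=${3:-32767}
  [ "$port" -ge "$min" ] && [ "$port" -le "$max" ]
}

for port in 80 8888 30000 31234 32767 40000; do
  if in_nodeport_range "$port"; then
    echo "$port: could be allocated as a node port"
  else
    echo "$port: outside the node port range"
  fi
done
```

Application ports such as 80 or 8888 fall outside that range, which is why clients going through a `NodePort` have to use the allocated port instead.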
.debug[[k8s/kubectlexpose.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlexpose.md)]

---

class: pic

.interstitial[]

---

name: toc-shipping-images-with-a-registry
class: title

Shipping images with a registry

.nav[
[Previous section](#toc-exposer-des-conteneurs)
|
[Back to table of contents](#toc-chapter-3)
|
[Next section](#toc-running-our-application-on-kubernetes)
]

.debug[(automatically generated title slide)]

---
# Shipping images with a registry

- Initially, our app was running on a single node

- We could *build* and *run* in the same place

- Therefore, we did not need to *ship* anything

- Now that we want to run on a cluster, things are different

- The easiest way to ship container images is to use a registry

.debug[[k8s/shippingimages.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/shippingimages.md)]

---

## How Docker registries work (a reminder)

- What happens when we execute `docker run alpine`?

- If the Engine needs to pull the `alpine` image, it expands it into `library/alpine`

- `library/alpine` is expanded into `index.docker.io/library/alpine`

- The Engine communicates with `index.docker.io` to retrieve `library/alpine:latest`

- To use something other than `index.docker.io`, we specify it in the image name

- Examples:
  ```bash
  docker pull gcr.io/google-containers/alpine-with-bash:1.0

  docker build -t registry.mycompany.io:5000/myimage:awesome .
  docker push registry.mycompany.io:5000/myimage:awesome
  ```

.debug[[k8s/shippingimages.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/shippingimages.md)]

---

## Running DockerCoins on Kubernetes

- Create one deployment for each component

  (hasher, redis, rng, webui, worker)

- Expose deployments that need to accept connections

  (hasher, redis, rng, webui)

- For redis, we can use the official redis image

- For the 4 others, we need to build images and push them to some registry

.debug[[k8s/shippingimages.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/shippingimages.md)]

---

## Building and shipping images

- There are *many* options!

- Manually:

  - build locally (with `docker build` or otherwise)

  - push to the registry

- Automatically:

  - build and test locally

  - when ready, commit and push to a code repository

  - the code repository notifies an automated build system

  - that system gets the code, builds it, pushes the image to the registry

.debug[[k8s/shippingimages.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/shippingimages.md)]

---

## Which registry do we want to use?

- There are SaaS products like Docker Hub, Quay ...

- Each major cloud provider has an option as well

  (ACR on Azure, ECR on AWS, GCR on Google Cloud...)

- There are also commercial products to run our own registry

  (Docker EE, Quay...)

- And open source options, too!
- When picking a registry, pay attention to its build system

  (when it has one)

.debug[[k8s/shippingimages.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/shippingimages.md)]

---

## Using images from the Docker Hub

- For everyone's convenience, we took care of building DockerCoins images

- We pushed these images to the Docker Hub, under the [dockercoins](https://hub.docker.com/u/dockercoins) user

- These images are *tagged* with a version number, `v0.1`

- The full image names are therefore:

  - `dockercoins/hasher:v0.1`

  - `dockercoins/rng:v0.1`

  - `dockercoins/webui:v0.1`

  - `dockercoins/worker:v0.1`

.debug[[k8s/buildshiprun-dockerhub.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/buildshiprun-dockerhub.md)]

---

## Setting `$REGISTRY` and `$TAG`

- In the upcoming exercises and labs, we use a couple of environment variables:

  - `$REGISTRY` as a prefix to all image names

  - `$TAG` as the image version tag

- For example, the worker image is `$REGISTRY/worker:$TAG`

- If you copy-paste the commands in these exercises:

  **make sure that you set `$REGISTRY` and `$TAG` first!**

- For example:
  ```
  export REGISTRY=dockercoins TAG=v0.1
  ```

  (this will expand `$REGISTRY/worker:$TAG` to `dockercoins/worker:v0.1`)

.debug[[k8s/buildshiprun-dockerhub.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/buildshiprun-dockerhub.md)]

---

class: pic

.interstitial[]

---

name: toc-running-our-application-on-kubernetes
class: title

Running our application on Kubernetes

.nav[
[Previous section](#toc-shipping-images-with-a-registry)
|
[Back to table of contents](#toc-chapter-3)
|
[Next section](#toc-accessing-the-api-with-kubectl-proxy)
]

.debug[(automatically generated title slide)]

---
# Running our application on Kubernetes

- We can now deploy our code (as well as a redis instance)

.exercise[

- Deploy `redis`:
  ```bash
  kubectl create deployment redis --image=redis
  ```

- Deploy everything else:
  ```bash
  set -u
  for SERVICE in hasher rng webui worker; do
    kubectl create deployment $SERVICE --image=$REGISTRY/$SERVICE:$TAG
  done
  ```

]

.debug[[k8s/ourapponkube.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ourapponkube.md)]

---

## Is this working?

- After waiting for the deployment to complete, let's look at the logs!

  (Hint: use `kubectl get deploy -w` to watch deployment events)

.exercise[

<!--
```hide
kubectl wait deploy/rng --for condition=available
kubectl wait deploy/worker --for condition=available
```
-->

- Look at some logs:
  ```bash
  kubectl logs deploy/rng
  kubectl logs deploy/worker
  ```

]

--

🤔 `rng` is fine ... But not `worker`.

--

💡 Oh right! We forgot to `expose`.

.debug[[k8s/ourapponkube.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ourapponkube.md)]

---

## Connecting containers together

- Three deployments need to be reachable by others: `hasher`, `redis`, `rng`

- `worker` doesn't need to be exposed

- `webui` will be dealt with later

.exercise[

- Expose each deployment, specifying the right port:
  ```bash
  kubectl expose deployment redis --port 6379
  kubectl expose deployment rng --port 80
  kubectl expose deployment hasher --port 80
  ```

]

.debug[[k8s/ourapponkube.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ourapponkube.md)]

---

## Is this working yet?

- The `worker` runs an infinite loop that retries 10 seconds after an error

.exercise[

- Stream the worker's logs:
  ```bash
  kubectl logs deploy/worker --follow
  ```

  (Give it about 10 seconds to recover)

<!--
```wait units of work done, updating hash counter```
```keys ^C```
-->

]

--

We should now see the `worker`, well, working happily.
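
The worker's "retry after a delay" behavior can be sketched in a few lines of shell. This is not the worker's actual code: the function `retry_with_delay` and the stand-in command `flaky` are hypothetical, and unlike the real worker (which loops forever), this version gives up after a maximum number of attempts so that it is safe to run in a terminal.

```bash
#!/usr/bin/env bash
# Sketch of a retry loop, similar in spirit to the worker's error handling.
# Usage: retry_with_delay DELAY_SECONDS MAX_TRIES COMMAND [ARGS...]
retry_with_delay() {
  local delay=$1 max_tries=$2 try=1
  shift 2
  until "$@"; do
    if [ "$try" -ge "$max_tries" ]; then
      echo "giving up after $try attempts" >&2
      return 1
    fi
    echo "attempt $try failed, retrying in ${delay}s" >&2
    sleep "$delay"
    try=$((try + 1))
  done
}

# Stand-in for "connect to a dependency": fails twice, then succeeds,
# the way the worker recovers once the services it needs are exposed.
attempts=0
flaky() { attempts=$((attempts + 1)); [ "$attempts" -ge 3 ]; }

# The worker waits 10 seconds between attempts; 0 keeps this demo quick.
retry_with_delay 0 5 flaky && echo "succeeded after $attempts attempts"
```

A loop like this explains why nothing needs to be restarted after running `kubectl expose`: the next retry finds the services it was missing.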
.debug[[k8s/ourapponkube.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ourapponkube.md)]

---

## Exposing services for external access

- Now we would like to access the Web UI

- We will expose it with a `NodePort`

  (just like we did for the registry)

.exercise[

- Create a `NodePort` service for the Web UI:
  ```bash
  kubectl expose deploy/webui --type=NodePort --port=80
  ```

- Check the port that was allocated:
  ```bash
  kubectl get svc
  ```

]

.debug[[k8s/ourapponkube.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ourapponkube.md)]

---

## Accessing the web UI

- We can now connect to *any node*, on the allocated node port, to view the web UI

.exercise[

- Open the web UI in your browser (http://node-ip-address:3xxxx/)

<!-- ```open http://node1:3xxxx/``` -->

]

--

Yes, this may take a little while to update. *(Narrator: it was DNS.)*

--

*Alright, we're back to where we started, when we were running on a single node!*

.debug[[k8s/ourapponkube.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ourapponkube.md)]

---

class: pic

.interstitial[]

---

name: toc-accessing-the-api-with-kubectl-proxy
class: title

Accessing the API with `kubectl proxy`

.nav[
[Previous section](#toc-running-our-application-on-kubernetes)
|
[Back to table of contents](#toc-chapter-3)
|
[Next section](#toc-controlling-the-cluster-remotely)
]

.debug[(automatically generated title slide)]

---
# Accessing the API with `kubectl proxy`

- The API requires us to authenticate.red[¹]

- There are many authentication methods available, including:

  - TLS client certificates
    <br/>
    (that's what we've used so far)

  - HTTP basic password authentication
    <br/>
    (from a static file; not recommended)

  - various token mechanisms
    <br/>
    (detailed in the [documentation](https://kubernetes.io/docs/reference/access-authn-authz/authentication/#authentication-strategies))

.red[¹]OK, we lied.
If you don't authenticate, you are considered to be user `system:anonymous`, which doesn't have any access rights by default. .debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- ## Accessing the API directly - Let's see what happens if we try to access the API directly with `curl` .exercise[ - Retrieve the ClusterIP allocated to the `kubernetes` service: ```bash kubectl get svc kubernetes ``` - Replace the IP below and try to connect with `curl`: ```bash curl -k https://`10.96.0.1`/ ``` ] The API will tell us that user `system:anonymous` cannot access this path. .debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- ## Authenticating to the API If we wanted to talk to the API, we would need to: - extract our TLS key and certificate information from `~/.kube/config` (the information is in PEM format, encoded in base64) - use that information to present our certificate when connecting (for instance, with `openssl s_client -key ... -cert ... -connect ...`) - figure out exactly which credentials to use (once we start juggling multiple clusters) - change that whole process if we're using another authentication method 🤔 There has to be a better way! .debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- ## Using `kubectl proxy` for authentication - `kubectl proxy` runs a proxy in the foreground - This proxy lets us access the Kubernetes API without authentication (`kubectl proxy` adds our credentials on the fly to the requests) - This proxy lets us access the Kubernetes API over plain HTTP - This is a great tool to learn and experiment with the Kubernetes API - ... 
And for serious uses as well (suitable for one-shot scripts) - For unattended use, it's better to create a [service account](https://kubernetes.io/docs/tasks/configure-pod-container/configure-service-account/) .debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- ## Trying `kubectl proxy` - Let's start `kubectl proxy` and then do a simple request with `curl`! .exercise[ - Start `kubectl proxy` in the background: ```bash kubectl proxy & ``` - Access the API's default route: ```bash curl localhost:8001 ``` <!-- ```wait /version``` ```keys ^J``` --> - Terminate the proxy: ```bash kill %1 ``` ] The output is a list of available API routes. .debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- ## `kubectl proxy` is intended for local use - By default, the proxy listens on port 8001 (But this can be changed, or we can tell `kubectl proxy` to pick a port) - By default, the proxy binds to `127.0.0.1` (Making it unreachable from other machines, for security reasons) - By default, the proxy only accepts connections from: `^localhost$,^127\.0\.0\.1$,^\[::1\]$` - This is great when running `kubectl proxy` locally - Not-so-great when you want to connect to the proxy from a remote machine .debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- ## Running `kubectl proxy` on a remote machine - If we wanted to connect to the proxy from another machine, we would need to: - bind to `INADDR_ANY` instead of `127.0.0.1` - accept connections from any address - This is achieved with: ``` kubectl proxy --port=8888 --address=0.0.0.0 --accept-hosts=.* ``` .warning[Do not do this on a real cluster: it opens full unauthenticated access!] 
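
Rather than opening the proxy to the network, one option is to run it locally only for as long as a request needs it. Below is a minimal sketch, assuming `curl` is installed and the chosen port is free; `api_get_via_proxy` is a hypothetical helper name, and the one-second pause is a crude stand-in for a proper readiness check.

```bash
# Hypothetical helper (not part of kubectl): start a local proxy,
# fetch one API path through it, then stop the proxy.
# Usage: api_get_via_proxy PATH [PORT]
api_get_via_proxy() {
  local path=$1 port=${2:-8001} pid status=0
  # The proxy binds to 127.0.0.1 by default, so it stays local.
  kubectl proxy --port="$port" >/dev/null &
  pid=$!
  sleep 1  # crude: give the proxy a moment to start listening
  curl --silent --fail "http://127.0.0.1:${port}${path}" || status=$?
  kill "$pid" 2>/dev/null || true
  wait "$pid" 2>/dev/null || true
  return "$status"
}
```

For example, `api_get_via_proxy /version` would print the server version as JSON and leave no proxy running afterwards.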
.debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- ## Security considerations - Running `kubectl proxy` openly is a huge security risk - It is slightly better to run the proxy where you need it (and copy credentials, e.g. `~/.kube/config`, to that place) - It is even better to use a limited account with reduced permissions .debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- ## Good to know ... - `kubectl proxy` also gives access to all internal services - Specifically, services are exposed at paths of this form: ``` /api/v1/namespaces/<namespace>/services/<service>/proxy ``` - We can use `kubectl proxy` to access an internal service in a pinch (or, for non-HTTP services, `kubectl port-forward`) - This is not very useful when running `kubectl` directly on the cluster (since we could connect to the services directly anyway) - But it is very powerful as soon as you run `kubectl` from a remote machine .debug[[k8s/kubectlproxy.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kubectlproxy.md)] --- class: pic .interstitial[] --- name: toc-controlling-the-cluster-remotely class: title Controlling the cluster remotely .nav[ [Previous section](#toc-accessing-the-api-with-kubectl-proxy) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-accessing-internal-services) ] .debug[(automatically generated title slide)] --- # Controlling the cluster remotely - All the operations that we do with `kubectl` can be done remotely - In this section, we are going to use `kubectl` from our local machine .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- ## Requirements .warning[The exercises in this chapter should be done *on your local machine*.]
- `kubectl` is officially available on Linux, macOS, Windows (and unofficially anywhere we can build and run Go binaries) - You may skip these exercises if you are following along from: - a tablet or phone - a web-based terminal - an environment where you can't install and run new binaries .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- ## Installing `kubectl` - If you already have `kubectl` on your local machine, you can skip this .exercise[ <!-- ##VERSION## --> - Download the `kubectl` binary from one of these links: [Linux](https://storage.googleapis.com/kubernetes-release/release/v1.14.2/bin/linux/amd64/kubectl) | [macOS](https://storage.googleapis.com/kubernetes-release/release/v1.14.2/bin/darwin/amd64/kubectl) | [Windows](https://storage.googleapis.com/kubernetes-release/release/v1.14.2/bin/windows/amd64/kubectl.exe) - On Linux and macOS, make the binary executable with `chmod +x kubectl` (And remember to run it with `./kubectl` or move it to your `$PATH`) ] Note: if you are following along with a different platform (e.g. Linux on an architecture different from amd64, or with a phone or tablet), installing `kubectl` might be more complicated (or even impossible) so feel free to skip this section. .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- ## Testing `kubectl` - Check that `kubectl` works correctly (before even trying to connect to a remote cluster!) 
.exercise[ - Ask `kubectl` to show its version number: ```bash kubectl version --client ``` ] The output should look like this: ``` Client Version: version.Info{Major:"1", Minor:"14", GitVersion:"v1.14.0", GitCommit:"641856db18352033a0d96dbc99153fa3b27298e5", GitTreeState:"clean", BuildDate:"2019-03-25T15:53:57Z", GoVersion:"go1.12.1", Compiler:"gc", Platform:"linux/amd64"} ``` .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- ## Preserving the existing `~/.kube/config` - If you already have a `~/.kube/config` file, rename it (we are going to overwrite it in the following slides!) - If you never used `kubectl` on your machine before: nothing to do! .exercise[ - Make a copy of `~/.kube/config`; if you are using macOS or Linux, you can do: ```bash cp ~/.kube/config ~/.kube/config.before.training ``` - If you are using Windows, you will need to adapt this command ] .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- ## Copying the configuration file from `node1` - The `~/.kube/config` file that is on `node1` contains all the credentials we need - Let's copy it over! .exercise[ - Copy the file from `node1`; if you are using macOS or Linux, you can do: ``` scp `USER`@`X.X.X.X`:.kube/config ~/.kube/config # Make sure to replace X.X.X.X with the IP address of node1, # and USER with the user name used to log into node1! ``` - If you are using Windows, adapt these instructions to your SSH client ] .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- ## Updating the server address - There is a good chance that we need to update the server address - To know if it is necessary, run `kubectl config view` - Look for the `server:` address: - if it matches the public IP address of `node1`, you're good! 
- if it is anything else (especially a private IP address), update it! - To update the server address, run: ```bash kubectl config set-cluster kubernetes --server=https://`X.X.X.X`:6443 # Make sure to replace X.X.X.X with the IP address of node1! ``` .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- class: extra-details ## What if we get a certificate error? - Generally, the Kubernetes API uses a certificate that is valid for: - `kubernetes` - `kubernetes.default` - `kubernetes.default.svc` - `kubernetes.default.svc.cluster.local` - the ClusterIP address of the `kubernetes` service - the hostname of the node hosting the control plane (e.g. `node1`) - the IP address of the node hosting the control plane - On most clouds, the IP address of the node is an internal IP address - ... And we are going to connect over the external IP address - ... And that external IP address was not used when creating the certificate! .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- class: extra-details ## Working around the certificate error - We need to tell `kubectl` to skip TLS verification (only do this with testing clusters, never in production!) - The following command will do the trick: ```bash kubectl config set-cluster kubernetes --insecure-skip-tls-verify ``` .debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- ## Checking that we can connect to the cluster - We can now run a couple of trivial commands to check that all is well .exercise[ - Check the versions of the local client and remote server: ```bash kubectl version ``` - View the nodes of the cluster: ```bash kubectl get nodes ``` ] We can now utilize the cluster exactly as we did before, except that it's remote. 
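
The server-address check described a few slides back can also be scripted. This is a minimal sketch, assuming the current context of the kubeconfig points at the training cluster and that the API listens on port 6443, as in the `set-cluster` command shown earlier; `server_matches` is a hypothetical helper name.

```bash
# Hypothetical helper: succeed if the current context's API server address
# is https://IP:6443, fail otherwise.
# Usage: server_matches IP
server_matches() {
  local expected="https://$1:6443" actual
  # --minify restricts the output to the current context.
  actual=$(kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}')
  if [ "$actual" = "$expected" ]; then
    echo "server address OK: $actual"
  else
    echo "server is $actual, expected $expected" >&2
    return 1
  fi
}
```

If `server_matches X.X.X.X` fails (with `X.X.X.X` replaced by the public IP address of `node1`), the `kubectl config set-cluster` command from the earlier slide is the fix.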
.debug[[k8s/localkubeconfig.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/localkubeconfig.md)] --- class: pic .interstitial[] --- name: toc-accessing-internal-services class: title Accessing internal services .nav[ [Previous section](#toc-controlling-the-cluster-remotely) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-the-kubernetes-dashboard) ] .debug[(automatically generated title slide)] --- # Accessing internal services - When we are logged in on a cluster node, we can access internal services (by virtue of the Kubernetes network model: all nodes can reach all pods and services) - When we are accessing a remote cluster, things are different (generally, our local machine won't have access to the cluster's internal subnet) - How can we temporarily access a service without exposing it to everyone? -- - `kubectl proxy`: gives us access to the API, which includes a proxy for HTTP resources - `kubectl port-forward`: allows forwarding of TCP ports to arbitrary pods, services, ... .debug[[k8s/accessinternal.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/accessinternal.md)] --- ## Suspension of disbelief The exercises in this section assume that we have set up `kubectl` on our local machine in order to access a remote cluster. We will therefore show how to access services and pods of the remote cluster, from our local machine. You can also run these exercises directly on the cluster (if you haven't installed and set up `kubectl` locally). Running these commands directly on the cluster will be less useful (since you could access services and pods directly), but keep in mind that these commands will work anywhere as long as you have installed and set up `kubectl` to communicate with your cluster.
.debug[[k8s/accessinternal.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/accessinternal.md)] --- ## `kubectl proxy` in theory - Running `kubectl proxy` gives us access to the entire Kubernetes API - The API includes routes to proxy HTTP traffic - These routes look like the following: `/api/v1/namespaces/<namespace>/services/<service>/proxy` - We just add the URI to the end of the request, for instance: `/api/v1/namespaces/<namespace>/services/<service>/proxy/index.html` - We can access `services` and `pods` this way .debug[[k8s/accessinternal.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/accessinternal.md)] --- ## `kubectl proxy` in practice - Let's access the `webui` service through `kubectl proxy` .exercise[ - Run an API proxy in the background: ```bash kubectl proxy & ``` - Access the `webui` service: ```bash curl localhost:8001/api/v1/namespaces/default/services/webui/proxy/index.html ``` - Terminate the proxy: ```bash kill %1 ``` ] .debug[[k8s/accessinternal.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/accessinternal.md)] --- ## `kubectl port-forward` in theory - What if we want to access a TCP service? - We can use `kubectl port-forward` instead - It will create a TCP relay to forward connections to a specific port (of a pod, service, deployment...) - The syntax is: `kubectl port-forward service/name_of_service local_port:remote_port` - If only one port number is specified, it is used for both local and remote ports .debug[[k8s/accessinternal.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/accessinternal.md)] --- ## `kubectl port-forward` in practice - Let's access our remote Redis server .exercise[ - Forward connections from local port 10000 to remote port 6379: ```bash kubectl port-forward svc/redis 10000:6379 & ``` - Connect to the Redis server: ```bash telnet localhost 10000 ``` - Issue a few commands, e.g. 
`INFO server` then `QUIT` <!-- ```wait Connected to localhost``` ```keys INFO server``` ```keys ^J``` ```keys QUIT``` ```keys ^J``` --> - Terminate the port forwarder: ```bash kill %1 ``` ] .debug[[k8s/accessinternal.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/accessinternal.md)] --- class: pic .interstitial[] --- name: toc-the-kubernetes-dashboard class: title The Kubernetes dashboard .nav[ [Previous section](#toc-accessing-internal-services) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-security-implications-of-kubectl-apply) ] .debug[(automatically generated title slide)] --- # The Kubernetes dashboard - Kubernetes resources can also be viewed with a web dashboard - That dashboard is usually exposed over HTTPS (this requires obtaining a proper TLS certificate) - Dashboard users need to authenticate - We are going to take a *dangerous* shortcut .debug[[k8s/dashboard.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/dashboard.md)] --- ## The insecure method - We could (and should) use [Let's Encrypt](https://letsencrypt.org/) ... - ... but we don't want to deal with TLS certificates - We could (and should) learn how authentication and authorization work ... - ... but we will use a guest account with admin access instead .footnote[.warning[Yes, this will open our cluster to all kinds of shenanigans.
Don't do this at home.]] .debug[[k8s/dashboard.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/dashboard.md)] --- ## Running a very insecure dashboard - We are going to deploy that dashboard with *one single command* - This command will create all the necessary resources (the dashboard itself, the HTTP wrapper, the admin/guest account) - All these resources are defined in a YAML file - All we have to do is load that YAML file with `kubectl apply -f` .exercise[ - Create all the dashboard resources, with the following command: ```bash kubectl apply -f ~/container.training/k8s/insecure-dashboard.yaml ``` ] .debug[[k8s/dashboard.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/dashboard.md)] --- ## Connecting to the dashboard .exercise[ - Check which port the dashboard is on: ```bash kubectl get svc dashboard ``` ] You'll want the `3xxxx` port. .exercise[ - Connect to http://oneofournodes:3xxxx/ <!-- ```open http://node1:3xxxx/``` --> ] The dashboard will then ask you which authentication method you want to use. .debug[[k8s/dashboard.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/dashboard.md)] --- ## Dashboard authentication - We have three authentication options at this point: - token (associated with a role that has appropriate permissions) - kubeconfig (e.g. using the `~/.kube/config` file from `node1`) - "skip" (use the dashboard "service account") - Let's use "skip": we're logged in! -- .warning[By the way, we just added a backdoor to our Kubernetes cluster!]
.debug[[k8s/dashboard.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/dashboard.md)] --- ## Running the Kubernetes dashboard securely - The steps that we just showed you are *for educational purposes only!* - If you do that on your production cluster, people [can and will abuse it](https://redlock.io/blog/cryptojacking-tesla) - For an in-depth discussion about securing the dashboard, <br/> check [this excellent post on Heptio's blog](https://blog.heptio.com/on-securing-the-kubernetes-dashboard-16b09b1b7aca) .debug[[k8s/dashboard.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/dashboard.md)] --- class: pic .interstitial[] --- name: toc-security-implications-of-kubectl-apply class: title Security implications of `kubectl apply` .nav[ [Previous section](#toc-the-kubernetes-dashboard) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-scaling-our-demo-app) ] .debug[(automatically generated title slide)] --- # Security implications of `kubectl apply` - When we do `kubectl apply -f <URL>`, we create arbitrary resources - Resources can be evil; imagine a `deployment` that ...
-- - starts bitcoin miners on the whole cluster -- - hides in a non-default namespace -- - bind-mounts our nodes' filesystem -- - inserts SSH keys in the root account (on the node) -- - encrypts our data and ransoms it -- - ☠️☠️☠️ .debug[[k8s/dashboard.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/dashboard.md)] --- ## `kubectl apply` is the new `curl | sh` - `curl | sh` is convenient - It's safe if you use HTTPS URLs from trusted sources -- - `kubectl apply -f` is convenient - It's safe if you use HTTPS URLs from trusted sources - Example: the official setup instructions for most pod networks -- - It introduces new failure modes (for instance, if you try to apply YAML from a link that's no longer valid) .debug[[k8s/dashboard.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/dashboard.md)] --- class: pic .interstitial[] --- name: toc-scaling-our-demo-app class: title Scaling our demo app .nav[ [Previous section](#toc-security-implications-of-kubectl-apply) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-daemon-sets) ] .debug[(automatically generated title slide)] --- # Scaling our demo app - Our ultimate goal is to get more DockerCoins (i.e. increase the number of loops per second shown on the web UI) - Let's look at the architecture again: - The loop is done in the worker; perhaps we could try adding more workers?
.debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- ## Adding another worker - All we have to do is scale the `worker` Deployment .exercise[ - Open two new terminals to check what's going on with pods and deployments: ```bash kubectl get pods -w kubectl get deployments -w ``` <!-- ```wait RESTARTS``` ```keys ^C``` ```wait AVAILABLE``` ```keys ^C``` --> - Now, create more `worker` replicas: ```bash kubectl scale deployment worker --replicas=2 ``` ] After a few seconds, the graph in the web UI should show up. .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- ## Adding more workers - If 2 workers give us 2x speed, what about 3 workers? .exercise[ - Scale the `worker` Deployment further: ```bash kubectl scale deployment worker --replicas=3 ``` ] The graph in the web UI should go up again. (This is looking great! We're gonna be RICH!) .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- ## Adding even more workers - Let's see if 10 workers give us 10x speed! .exercise[ - Scale the `worker` Deployment to a bigger number: ```bash kubectl scale deployment worker --replicas=10 ``` ] -- The graph will peak at 10 hashes/second. (We can add as many workers as we want: we will never go past 10 hashes/second.) .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- class: extra-details ## Didn't we briefly exceed 10 hashes/second? 
- It may *look like it*, because the web UI shows instant speed - The instant speed can briefly exceed 10 hashes/second - The average speed cannot - The instant speed can be biased because of how it's computed .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- class: extra-details ## Why instant speed is misleading - The instant speed is computed client-side by the web UI - The web UI checks the hash counter once per second <br/> (and does a classic (h2-h1)/(t2-t1) speed computation) - The counter is updated once per second by the workers - These timings are not exact <br/> (e.g. the web UI check interval is client-side JavaScript) - Sometimes, between two web UI counter measurements, <br/> the workers are able to update the counter *twice* - During that cycle, the instant speed will appear to be much bigger <br/> (but it will be compensated by lower instant speed before and after) .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- ## Why are we stuck at 10 hashes per second? - If this was high-quality, production code, we would have instrumentation (Datadog, Honeycomb, New Relic, statsd, Sumologic, ...) - It's not! - Perhaps we could benchmark our web services? 
(with tools like `ab`, or even simpler, `httping`) .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- ## Benchmarking our web services - We want to check `hasher` and `rng` - We are going to use `httping` - It's just like `ping`, but using HTTP `GET` requests (it measures how long it takes to perform one `GET` request) - It's used like this: ``` httping [-c count] http://host:port/path ``` - Or even simpler: ``` httping ip.ad.dr.ess ``` - We will use `httping` on the ClusterIP addresses of our services .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- ## Obtaining ClusterIP addresses - We can simply check the output of `kubectl get services` - Or do it programmatically, as in the example below .exercise[ - Retrieve the IP addresses: ```bash HASHER=$(kubectl get svc hasher -o go-template={{.spec.clusterIP}}) RNG=$(kubectl get svc rng -o go-template={{.spec.clusterIP}}) ``` ] Now we can access the IP addresses of our services through `$HASHER` and `$RNG`. .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- ## Checking `hasher` and `rng` response times .exercise[ - Check the response times for both services: ```bash httping -c 3 $HASHER httping -c 3 $RNG ``` ] - `hasher` is fine (it should take a few milliseconds to reply) - `rng` is not (it should take about 700 milliseconds if there are 10 workers) - Something is wrong with `rng`, but ... what? .debug[[k8s/scalingdockercoins.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/scalingdockercoins.md)] --- ## Let's draw hasty conclusions - The bottleneck seems to be `rng` - *What if* we don't have enough entropy and can't generate enough random numbers?
- We need to scale out the `rng` service on multiple machines! Note: this is fiction! We have enough entropy. But we need a pretext to scale out. (In fact, the code of `rng` uses `/dev/urandom`, which never runs out of entropy... <br/> ...and is [just as good as `/dev/random`](https://www.slideshare.net/PacSecJP/filippo-plain-simple-reality-of-entropy).) .debug[[shared/hastyconclusions.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/hastyconclusions.md)] --- class: pic .interstitial[] --- name: toc-daemon-sets class: title Daemon sets .nav[ [Previous section](#toc-scaling-our-demo-app) | [Back to table of contents](#toc-chapter-4) | [Next section](#toc-labels-and-selectors) ] .debug[(automatically generated title slide)] --- # Daemon sets - We want to scale `rng` in a way that is different from how we scaled `worker` - We want one (and exactly one) instance of `rng` per node - What if we just scale up `deploy/rng` to the number of nodes? - nothing guarantees that the `rng` containers will be distributed evenly - if we add nodes later, they will not automatically run a copy of `rng` - if we remove (or reboot) a node, one `rng` container will restart elsewhere - Instead of a `deployment`, we will use a `daemonset` .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Daemon sets in practice - Daemon sets are great for cluster-wide, per-node processes: - `kube-proxy` - `weave` (our overlay network) - monitoring agents - hardware management tools (e.g. SCSI/FC HBA agents) - etc.
- They can also be restricted to run [only on some nodes](https://kubernetes.io/docs/concepts/workloads/controllers/daemonset/#running-pods-on-only-some-nodes) .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Creating a daemon set <!-- ##VERSION## --> - Unfortunately, as of Kubernetes 1.14, the CLI cannot create daemon sets -- - More precisely: it doesn't have a subcommand to create a daemon set -- - But any kind of resource can always be created by providing a YAML description: ```bash kubectl apply -f foo.yaml ``` -- - How do we create the YAML file for our daemon set? -- - option 1: [read the docs](https://kubernetes.io/docs/concepts/workloads/controllers/daemonset/#create-a-daemonset) -- - option 2: `vi` our way out of it .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Creating the YAML file for our daemon set - Let's start with the YAML file for the current `rng` resource .exercise[ - Dump the `rng` resource in YAML: ```bash kubectl get deploy/rng -o yaml >rng.yml ``` - Edit `rng.yml` ] .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## "Casting" a resource to another - What if we just changed the `kind` field? (It can't be that easy, right?) .exercise[ - Change `kind: Deployment` to `kind: DaemonSet` <!-- ```bash vim rng.yml``` ```wait kind: Deployment``` ```keys /Deployment``` ```keys ^J``` ```keys cwDaemonSet``` ```keys ^[``` ] ```keys :wq``` ```keys ^J``` --> - Save, quit - Try to create our new resource: ``` kubectl apply -f rng.yml ``` ] -- We all knew this couldn't be that easy, right! 
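
The same edit can be scripted instead of done by hand in an editor. This is a minimal sketch, assuming the top-level `kind:` line starts at column 0 (as it does when a single resource is dumped with `kubectl get -o yaml`); `cast_kind` is a hypothetical helper name. It only automates the edit: the resulting YAML is no more valid than the hand-edited version.

```bash
# Hypothetical helper: print FILE with its top-level `kind: FROM` line
# rewritten to `kind: TO`. The input file is left untouched.
# Usage: cast_kind FILE FROM TO
cast_kind() {
  local file=$1 from=$2 to=$3
  # Anchoring on ^ and $ avoids touching nested or partial matches.
  sed "s/^kind: ${from}\$/kind: ${to}/" "$file"
}
```

For example, `cast_kind rng.yml Deployment DaemonSet > rng-daemonset.yml` writes the modified copy to a new file (the output file name is only an illustration).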
.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Understanding the problem - The core of the error is: ``` error validating data: [ValidationError(DaemonSet.spec): unknown field "replicas" in io.k8s.api.extensions.v1beta1.DaemonSetSpec, ... ``` -- - *Obviously,* it doesn't make sense to specify a number of replicas for a daemon set -- - Workaround: fix the YAML - remove the `replicas` field - remove the `strategy` field (which defines the rollout mechanism for a deployment) - remove the `progressDeadlineSeconds` field (also used by the rollout mechanism) - remove the `status: {}` line at the end -- - Or, we could also ... .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Use the `--force`, Luke - We could also tell Kubernetes to ignore these errors and try anyway - The `--force` flag's actual name is `--validate=false` .exercise[ - Try to load our YAML file and ignore errors: ```bash kubectl apply -f rng.yml --validate=false ``` ] -- 🎩✨🐇 -- Wait ... Now, can it be *that* easy? .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Checking what we've done - Did we transform our `deployment` into a `daemonset`? .exercise[ - Look at the resources that we have now: ```bash kubectl get all ``` ] -- We have two resources called `rng`: - the *deployment* that was existing before - the *daemon set* that we just created We also have one too many pods. <br/> (The pod corresponding to the *deployment* still exists.) .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## `deploy/rng` and `ds/rng` - You can have different resource types with the same name (i.e. 
a *deployment* and a *daemon set* both named `rng`) - We still have the old `rng` *deployment* ``` NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE deployment.apps/rng 1 1 1 1 18m ``` - But now we have the new `rng` *daemon set* as well ``` NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE daemonset.apps/rng 2 2 2 2 2 <none> 9s ``` .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Too many pods - If we check with `kubectl get pods`, we see: - *one pod* for the deployment (named `rng-xxxxxxxxxx-yyyyy`) - *one pod per node* for the daemon set (named `rng-zzzzz`) ``` NAME READY STATUS RESTARTS AGE rng-54f57d4d49-7pt82 1/1 Running 0 11m rng-b85tm 1/1 Running 0 25s rng-hfbrr 1/1 Running 0 25s [...] ``` -- The daemon set created one pod per node, except on the master node. The master node has [taints](https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/) preventing pods from running there. (To schedule a pod on this node anyway, the pod will require appropriate [tolerations](https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/).) .footnote[(Off by one? We don't run these pods on the node hosting the control plane.)] .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Is this working? - Look at the web UI -- - The graph should now go above 10 hashes per second! -- - It looks like the newly created pods are serving traffic correctly - How and why did this happen? (We didn't do anything special to add them to the `rng` service load balancer!) 
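
One way to confirm that the new pods are behind the `rng` service is to look at its endpoints. This is a minimal sketch, assuming the service lives in the current namespace; `count_endpoints` is a hypothetical helper name.

```bash
# Hypothetical helper: print how many pod IP addresses currently back SERVICE.
# Usage: count_endpoints SERVICE
count_endpoints() {
  local ips
  ips=$(kubectl get endpoints "$1" -o jsonpath='{.subsets[*].addresses[*].ip}')
  # jsonpath prints the addresses separated by spaces; count the words.
  echo "$ips" | wc -w | tr -d ' '
}
```

With one pod from the deployment plus one daemon set pod per worker node, `count_endpoints rng` should print one more than the number of worker nodes.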
.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

class: pic

.interstitial[]

---

name: toc-labels-and-selectors
class: title

Labels and selectors

.nav[
[Previous section](#toc-daemon-sets)
|
[Back to table of contents](#toc-chapter-4)
|
[Next section](#toc-rolling-updates)
]

.debug[(automatically generated title slide)]

---

# Labels and selectors

- The `rng` *service* is load balancing requests to a set of pods

- That set of pods is defined by the *selector* of the `rng` service

.exercise[

- Check the *selector* in the `rng` service definition:
  ```bash
  kubectl describe service rng
  ```

]

- The selector is `app=rng`

- It means "all the pods having the label `app=rng`"

  (They can have additional labels as well, that's OK!)

.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

## Selector evaluation

- We can use selectors with many `kubectl` commands

- For instance, with `kubectl get`, `kubectl logs`, `kubectl delete` ... and more

.exercise[

- Get the list of pods matching selector `app=rng` (both forms are equivalent):
  ```bash
  kubectl get pods -l app=rng
  kubectl get pods --selector app=rng
  ```

]

But ... why do these pods (in particular, the *new* ones) have this `app=rng` label?

.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

## Where do labels come from?

- When we create a deployment with `kubectl create deployment rng`,
  <br/>this deployment gets the label `app=rng`

- The replica sets created by this deployment also get the label `app=rng`

- The pods created by these replica sets also get the label `app=rng`

- When we created the daemon set from the deployment, we re-used the same spec

- Therefore, the pods created by the daemon set get the same labels

.footnote[Note: when we use `kubectl run stuff`, the label is `run=stuff` instead.]
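
The matching rule described in this section can be sketched in plain shell. This is only an illustration of equality-based matching, not how Kubernetes implements it; the helper name `matches_selector` is hypothetical, and the hash value is borrowed from the sample pod names shown earlier.

```bash
# matches_selector LABELS SELECTOR (hypothetical helper, for illustration only)
# Both arguments are comma-separated key=value lists.
# Succeeds if every pair of SELECTOR is present in LABELS;
# extra labels on the pod do not prevent a match.
matches_selector() {
  local labels=",$1," selector="$2" pair
  local IFS=','
  for pair in $selector; do
    case "$labels" in
      *",$pair,"*) ;;      # this requirement is satisfied, check the next one
      *) return 1 ;;       # one requirement is missing: no match
    esac
  done
  return 0
}

matches_selector "app=rng,pod-template-hash=54f57d4d49" "app=rng" && echo "selected"
matches_selector "run=stuff" "app=rng" || echo "not selected"
```

To see the real labels on the cluster, `kubectl get pods -l app=rng --show-labels` prints them next to each pod.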
.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Updating load balancer configuration - We would like to remove a pod from the load balancer - What would happen if we removed that pod, with `kubectl delete pod ...`? -- It would be re-created immediately (by the replica set or the daemon set) -- - What would happen if we removed the `app=rng` label from that pod? -- It would *also* be re-created immediately -- Why?!? .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Selectors for replica sets and daemon sets - The "mission" of a replica set is: "Make sure that there is the right number of pods matching this spec!" - The "mission" of a daemon set is: "Make sure that there is a pod matching this spec on each node!" -- - *In fact,* replica sets and daemon sets do not check pod specifications - They merely have a *selector*, and they look for pods matching that selector - Yes, we can fool them by manually creating pods with the "right" labels - Bottom line: if we remove our `app=rng` label ... ... The pod "disappears" for its parent, which re-creates another pod to replace it .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- class: extra-details ## Isolation of replica sets and daemon sets - Since both the `rng` daemon set and the `rng` replica set use `app=rng` ... ... Why don't they "find" each other's pods? 
-- - *Replica sets* have a more specific selector, visible with `kubectl describe` (It looks like `app=rng,pod-template-hash=abcd1234`) - *Daemon sets* also have a more specific selector, but it's invisible (It looks like `app=rng,controller-revision-hash=abcd1234`) - As a result, each controller only "sees" the pods it manages .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Removing a pod from the load balancer - Currently, the `rng` service is defined by the `app=rng` selector - The only way to remove a pod is to remove or change the `app` label - ... But that will cause another pod to be created instead! - What's the solution? -- - We need to change the selector of the `rng` service! - Let's add another label to that selector (e.g. `enabled=yes`) .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Complex selectors - If a selector specifies multiple labels, they are understood as a logical *AND* (In other words: the pods must match all the labels) - Kubernetes has support for advanced, set-based selectors (But these cannot be used with services, at least not yet!) .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## The plan 1. Add the label `enabled=yes` to all our `rng` pods 2. Update the selector for the `rng` service to also include `enabled=yes` 3. Toggle traffic to a pod by manually adding/removing the `enabled` label 4. Profit! *Note: if we swap steps 1 and 2, it will cause a short service disruption, because there will be a period of time during which the service selector won't match any pod. During that time, requests to the service will time out. 
By doing things in the order above, we guarantee that there won't be any interruption.* .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Adding labels to pods - We want to add the label `enabled=yes` to all pods that have `app=rng` - We could edit each pod one by one with `kubectl edit` ... - ... Or we could use `kubectl label` to label them all - `kubectl label` can use selectors itself .exercise[ - Add `enabled=yes` to all pods that have `app=rng`: ```bash kubectl label pods -l app=rng enabled=yes ``` ] .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## Updating the service selector - We need to edit the service specification - Reminder: in the service definition, we will see `app: rng` in two places - the label of the service itself (we don't need to touch that one) - the selector of the service (that's the one we want to change) .exercise[ - Update the service to add `enabled: yes` to its selector: ```bash kubectl edit service rng ``` <!-- ```wait Please edit the object below``` ```keys /app: rng``` ```keys ^J``` ```keys noenabled: yes``` ```keys ^[``` ] ```keys :wq``` ```keys ^J``` --> ] -- ... And then we get *the weirdest error ever.* Why? .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- ## When the YAML parser is being too smart - YAML parsers try to help us: - `xyz` is the string `"xyz"` - `42` is the integer `42` - `yes` is the boolean value `true` - If we want the string `"42"` or the string `"yes"`, we have to quote them - So we have to use `enabled: "yes"` .footnote[For a good laugh: if we had used "ja", "oui", "si" ... as the value, it would have worked!] 
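
Another way to sidestep the YAML type guessing is to send the change as JSON, where `"yes"` in quotes is always a string. This is a minimal sketch, assuming we prefer `kubectl patch` over an interactive edit; the helper name `selector_patch` is hypothetical.

```bash
# selector_patch KEY VALUE (hypothetical helper)
# Prints a JSON patch that adds KEY=VALUE to a service selector.
# The value is always emitted as a quoted JSON string.
selector_patch() {
  printf '{"spec":{"selector":{"%s":"%s"}}}\n' "$1" "$2"
}

selector_patch enabled yes
# The result could then be applied with:
#   kubectl patch service rng -p "$(selector_patch enabled yes)"
```

Applying this patch has the same effect as adding `enabled: "yes"` to the selector by hand.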
.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

## Updating the service selector, take 2

.exercise[

- Update the service to add `enabled: "yes"` to its selector:
  ```bash
  kubectl edit service rng
  ```

<!--
```wait Please edit the object below```
```keys /app: rng```
```keys ^J```
```keys noenabled: "yes"```
```keys ^[``` ]
```keys :wq```
```keys ^J```
-->

]

This time it should work!

If we did everything correctly, the web UI shouldn't show any change.

.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

## Updating labels

- We want to disable the pod that was created by the deployment

- All we have to do is remove the `enabled` label from that pod

- To identify that pod, we can use its name

- ... Or rely on the fact that it's the only one with a `pod-template-hash` label

- Good to know:

  - `kubectl label ... foo=` doesn't remove a label (it sets it to an empty string)

  - to remove label `foo`, use `kubectl label ... foo-`

  - to change an existing label, we would need to add `--overwrite`

.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

## Removing a pod from the load balancer

.exercise[

- In one window, check the logs of that pod:
  ```bash
  POD=$(kubectl get pod -l app=rng,pod-template-hash -o name)
  kubectl logs --tail 1 --follow $POD
  ```
  (We should see a steady stream of HTTP logs)

- In another window, remove the label from the pod:
  ```bash
  kubectl label pod -l app=rng,pod-template-hash enabled-
  ```
  (The stream of HTTP logs should stop immediately)

]

There might be a slight change in the web UI (since we removed a bit
of capacity from the `rng` service). If we remove more pods,
the effect should be more visible.
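
The label toggling used here is easy to wrap in two small shell functions. This is a sketch under assumptions: the function names are hypothetical, and the pod name in the usage comment is taken from the sample output shown earlier.

```bash
# Hypothetical helpers; they only wrap the kubectl label commands discussed above.

# Remove a pod from the rng load balancer by removing its enabled label.
disable_pod() {
  kubectl label pod "$1" enabled-
}

# Put the pod back in rotation. --overwrite makes this work
# even if the label already exists with another value.
enable_pod() {
  kubectl label pod "$1" enabled=yes --overwrite
}

# Usage (not executed here):
#   disable_pod rng-54f57d4d49-7pt82
#   enable_pod  rng-54f57d4d49-7pt82
```

Running `kubectl get endpoints rng` before and after shows which pod addresses the service currently sends traffic to, which is a quick way to confirm the effect.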
.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- class: extra-details ## Updating the daemon set - If we scale up our cluster by adding new nodes, the daemon set will create more pods - These pods won't have the `enabled=yes` label - If we want these pods to have that label, we need to edit the daemon set spec - We can do that with e.g. `kubectl edit daemonset rng` .debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)] --- class: extra-details ## We've put resources in your resources - Reminder: a daemon set is a resource that creates more resources! - There is a difference between: - the label(s) of a resource (in the `metadata` block in the beginning) - the selector of a resource (in the `spec` block) - the label(s) of the resource(s) created by the first resource (in the `template` block) - We would need to update the selector and the template (metadata labels are not mandatory) - The template must match the selector (i.e. 
the resource will refuse to create resources that it will not select)

.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

## Labels and debugging

- When a pod is misbehaving, we can delete it: another one will be recreated

- But we can also change its labels

- It will be removed from the load balancer (it won't receive traffic anymore)

- Another pod will be recreated immediately

- But the problematic pod is still here, and we can inspect and debug it

- We can even re-add it to the rotation if necessary

  (Very useful to troubleshoot intermittent and elusive bugs)

.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

## Labels and advanced rollout control

- Conversely, we can add pods matching a service's selector

- These pods will then receive requests and serve traffic

- Examples:

  - one-shot pod with all debug flags enabled, to collect logs

  - pods created automatically, but added to rotation in a second step
    <br/>
    (by setting their label accordingly)

- This gives us building blocks for canary and blue/green deployments

.debug[[k8s/daemonset.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/daemonset.md)]

---

class: pic

.interstitial[]

---

name: toc-rolling-updates
class: title

Rolling updates

.nav[
[Previous section](#toc-labels-and-selectors)
|
[Back to table of contents](#toc-chapter-4)
|
[Next section](#toc-namespaces)
]

.debug[(automatically generated title slide)]

---

# Rolling updates

- By default (without rolling updates), when a scaled resource is updated:

  - new pods are created

  - old pods are terminated

  - ...
all at the same time - if something goes wrong, ¯\\\_(ツ)\_/¯ .debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)] --- ## Rolling updates - With rolling updates, when a resource is updated, it happens progressively - Two parameters determine the pace of the rollout: `maxUnavailable` and `maxSurge` - They can be specified in absolute number of pods, or percentage of the `replicas` count - At any given time ... - there will always be at least `replicas`-`maxUnavailable` pods available - there will never be more than `replicas`+`maxSurge` pods in total - there will therefore be up to `maxUnavailable`+`maxSurge` pods being updated - We have the possibility of rolling back to the previous version <br/>(if the update fails or is unsatisfactory in any way) .debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)] --- ## Checking current rollout parameters - Recall how we build custom reports with `kubectl` and `jq`: .exercise[ - Show the rollout plan for our deployments: ```bash kubectl get deploy -o json | jq ".items[] | {name:.metadata.name} + .spec.strategy.rollingUpdate" ``` ] .debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)] --- ## Rolling updates in practice - As of Kubernetes 1.8, we can do rolling updates with: `deployments`, `daemonsets`, `statefulsets` - Editing one of these resources will automatically result in a rolling update - Rolling updates can be monitored with the `kubectl rollout` subcommand .debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)] --- ## Building a new version of the `worker` service .warning[ Only run these commands if you have built and pushed DockerCoins to a local registry. <br/> If you are using images from the Docker Hub (`dockercoins/worker:v0.1`), skip this. 
]

.exercise[

- Go to the `stacks` directory (`~/container.training/stacks`)

- Edit `dockercoins/worker/worker.py`; update the first `sleep` line to sleep 1 second

- Build a new tag and push it to the registry:
  ```bash
  #export REGISTRY=localhost:3xxxx
  export TAG=v0.2
  docker-compose -f dockercoins.yml build
  docker-compose -f dockercoins.yml push
  ```

]

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

## Rolling out the new `worker` service

.exercise[

- Let's monitor what's going on by opening a few terminals and running one of these commands in each:
  ```bash
  kubectl get pods -w
  kubectl get replicasets -w
  kubectl get deployments -w
  ```

<!--
```wait NAME```
```keys ^C```
-->

- Update `worker` either with `kubectl edit`, or by running:
  ```bash
  kubectl set image deploy worker worker=$REGISTRY/worker:$TAG
  ```

]

--

That rollout should be pretty quick. What shows in the web UI?

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

## Give it some time

- At first, it looks like nothing is happening (the graph remains at the same level)

- According to `kubectl get deploy -w`, the `deployment` was updated really quickly

- But `kubectl get pods -w` tells a different story

- The old `pods` are still here, and they stay in `Terminating` state for a while

- Eventually, they are terminated; and then the graph decreases significantly

- This delay is due to the fact that our worker doesn't handle signals

- Kubernetes sends a "polite" shutdown request to the worker, which ignores it

- After a grace period, Kubernetes gets impatient and kills the container

  (The grace period is 30 seconds, but [can be changed](https://kubernetes.io/docs/concepts/workloads/pods/pod/#termination-of-pods) if needed)

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

## Rolling out something invalid

- What happens if we make a mistake?
.exercise[

- Update `worker` by specifying a non-existent image:
  ```bash
  export TAG=v0.3
  kubectl set image deploy worker worker=$REGISTRY/worker:$TAG
  ```

- Check what's going on:
  ```bash
  kubectl rollout status deploy worker
  ```

<!--
```wait Waiting for deployment```
```keys ^C```
-->

]

--

Our rollout is stuck. However, the app is not dead.

(After a minute, it will stabilize to be 20-25% slower.)

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

## What's going on with our rollout?

- Why is our app a bit slower?

- Because `MaxUnavailable=25%`

  ... So the rollout terminated 2 replicas out of 10 available

- Okay, but why do we see 5 new replicas being rolled out?

- Because `MaxSurge=25%`

  ... So in addition to replacing 2 replicas, the rollout is also starting 3 more

- It rounded down the number of MaxUnavailable pods conservatively,
  <br/>
  but the total number of pods being rolled out is allowed to be 25+25=50%

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

class: extra-details

## The nitty-gritty details

- We start with 10 pods running for the `worker` deployment

- Current settings: MaxUnavailable=25% and MaxSurge=25%

- When we start the rollout:

  - two replicas are taken down (as per MaxUnavailable=25%)
  - two others are created (with the new version) to replace them
  - three others are created (with the new version, as per MaxSurge=25%)

- Now we have 8 replicas up and running, and 5 being deployed

- Our rollout is stuck at this point!

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

## Checking the dashboard during the bad rollout

If you didn't deploy the Kubernetes dashboard earlier, just skip this slide.

.exercise[

- Check which port the dashboard is on:
  ```bash
  kubectl -n kube-system get svc socat
  ```

]

Note the `3xxxx` port.
.exercise[

- Connect to http://oneofournodes:3xxxx/

<!-- ```open https://node1:3xxxx/``` -->

]

--

- We have failures in Deployments, Pods, and Replica Sets

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

## Recovering from a bad rollout

- We could push some `v0.3` image

  (the pod retry logic will eventually catch it and the rollout will proceed)

- Or we could invoke a manual rollback

.exercise[

<!--
```keys
^C
```
-->

- Cancel the deployment and wait for the dust to settle:
  ```bash
  kubectl rollout undo deploy worker
  kubectl rollout status deploy worker
  ```

]

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

## Changing rollout parameters

- We want to:

  - revert to `v0.1`
  - be conservative on availability (always have desired number of available workers)
  - go slow on rollout speed (update only one pod at a time)
  - give some time to our workers to "warm up" before starting more

The corresponding changes can be expressed in the following YAML snippet:

.small[
```yaml
spec:
  template:
    spec:
      containers:
      - name: worker
        image: $REGISTRY/worker:v0.1
  strategy:
    rollingUpdate:
      maxUnavailable: 0
      maxSurge: 1
  minReadySeconds: 10
```
]

.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

## Applying changes through a YAML patch

- We could use `kubectl edit deployment worker`

- But we could also use `kubectl patch` with the exact YAML shown before

.exercise[

.small[

- Apply all our changes and wait for them to take effect:
  ```bash
  kubectl patch deployment worker -p "
    spec:
      template:
        spec:
          containers:
          - name: worker
            image: $REGISTRY/worker:v0.1
      strategy:
        rollingUpdate:
          maxUnavailable: 0
          maxSurge: 1
      minReadySeconds: 10
    "
  kubectl rollout status deployment worker
  kubectl get deploy -o json worker |
          jq "{name:.metadata.name} + .spec.strategy.rollingUpdate"
  ```
]

]
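
The pod counts seen during the stuck rollout follow from simple arithmetic: when given as percentages, `maxUnavailable` is rounded down and `maxSurge` is rounded up. The sketch below reproduces that calculation; the helper name `rollout_bounds` is hypothetical and it only handles percentages.

```bash
# rollout_bounds REPLICAS MAX_UNAVAILABLE_PCT MAX_SURGE_PCT (hypothetical helper)
# Prints the minimum number of available pods and the maximum total
# number of pods allowed during a rolling update.
rollout_bounds() {
  local replicas=$1 unavailable_pct=$2 surge_pct=$3
  # maxUnavailable percentages are rounded down ...
  local max_unavailable=$(( replicas * unavailable_pct / 100 ))
  # ... while maxSurge percentages are rounded up
  local max_surge=$(( (replicas * surge_pct + 99) / 100 ))
  echo "min_available=$(( replicas - max_unavailable )) max_total=$(( replicas + max_surge ))"
}

rollout_bounds 10 25 25   # prints: min_available=8 max_total=13
```

With 10 replicas and the default 25% settings, this gives the 8 running pods plus 5 new ones observed earlier. With the absolute values `maxUnavailable: 0` and `maxSurge: 1`, no rounding is involved: there are always at least 10 available pods and never more than 11 in total.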
.debug[[k8s/rollout.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/rollout.md)]

---

class: pic

.interstitial[]

---

name: toc-namespaces
class: title

Namespaces

.nav[
[Previous section](#toc-rolling-updates)
|
[Back to table of contents](#toc-chapter-4)
|
[Next section](#toc-kustomize)
]

.debug[(automatically generated title slide)]

---

# Namespaces

- We would like to deploy another copy of DockerCoins on our cluster

- We could rename all our deployments and services:

  hasher → hasher2, redis → redis2, rng → rng2, etc.

- That would require updating the code

- There has to be a better way!

--

- As hinted by the title of this section, we will use *namespaces*

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Identifying a resource

- We cannot have two resources with the same name

  (or can we...?)

--

- We cannot have two resources *of the same kind* with the same name

  (but it's OK to have an `rng` service, an `rng` deployment, and an `rng` daemon set)

--

- We cannot have two resources of the same kind with the same name *in the same namespace*

  (but it's OK to have e.g.
two `rng` services in different namespaces)

--

- Except for resources that exist at the *cluster scope*

  (these do not belong to a namespace)

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Uniquely identifying a resource

- For *namespaced* resources:

  the tuple *(kind, name, namespace)* needs to be unique

- For resources at the *cluster scope*:

  the tuple *(kind, name)* needs to be unique

.exercise[

- List resource types again, and check the NAMESPACED column:
  ```bash
  kubectl api-resources
  ```

]

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Pre-existing namespaces

- If we deploy a cluster with `kubeadm`, we have three or four namespaces:

  - `default` (for our applications)

  - `kube-system` (for the control plane)

  - `kube-public` (contains one ConfigMap for cluster discovery)

  - `kube-node-lease` (in Kubernetes 1.14 and later; contains Lease objects)

- If we deploy differently, we may have different namespaces

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Creating namespaces

- Let's see two equivalent methods to create a namespace

.exercise[

- We can use `kubectl create namespace`:
  ```bash
  kubectl create namespace blue
  ```

- Or we can construct a very minimal YAML snippet:
  ```bash
  kubectl apply -f- <<EOF
  apiVersion: v1
  kind: Namespace
  metadata:
    name: blue
  EOF
  ```

]

- Some tools like Helm will create namespaces automatically when needed

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Using namespaces

- We can pass a `-n` or `--namespace` flag to most `kubectl` commands:
  ```bash
  kubectl -n blue get svc
  ```

- We can also change our current *context*

- A context is a *(user, cluster, namespace)* tuple

- We can manipulate contexts with the `kubectl config` command
.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)] --- ## Viewing existing contexts - On our training environments, at this point, there should be only one context .exercise[ - View existing contexts to see the cluster name and the current user: ```bash kubectl config get-contexts ``` ] - The current context (the only one!) is tagged with a `*` - What are NAME, CLUSTER, AUTHINFO, and NAMESPACE? .debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)] --- ## What's in a context - NAME is an arbitrary string to identify the context - CLUSTER is a reference to a cluster (i.e. API endpoint URL, and optional certificate) - AUTHINFO is a reference to the authentication information to use (i.e. a TLS client certificate, token, or otherwise) - NAMESPACE is the namespace (empty string = `default`) .debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)] --- ## Switching contexts - We want to use a different namespace - Solution 1: update the current context *This is appropriate if we need to change just one thing (e.g. namespace or authentication).* - Solution 2: create a new context and switch to it *This is appropriate if we need to change multiple things and switch back and forth.* - Let's go with solution 1! 
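
For reference, solution 2 could look like the sketch below. The helper name `new_namespace_context` is hypothetical, and the cluster and user names in the usage comment are assumptions (they match a default `kubeadm` setup); the real values appear in the CLUSTER and AUTHINFO columns of `kubectl config get-contexts`.

```bash
# new_namespace_context NAME CLUSTER USER NAMESPACE (hypothetical helper)
# Creates (or updates) a context named NAME, then switches to it.
new_namespace_context() {
  kubectl config set-context "$1" --cluster="$2" --user="$3" --namespace="$4" &&
  kubectl config use-context "$1"
}

# Usage (not executed here); cluster and user names are assumptions:
#   new_namespace_context blue kubernetes kubernetes-admin blue
# Switching back is then a matter of selecting the original context by name:
#   kubectl config use-context kubernetes-admin@kubernetes
```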
.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Updating a context

- This is done through `kubectl config set-context`

- We can update a context by passing its name, or the current context with `--current`

.exercise[

- Update the current context to use the `blue` namespace:
  ```bash
  kubectl config set-context --current --namespace=blue
  ```

- Check the result:
  ```bash
  kubectl config get-contexts
  ```

]

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Using our new namespace

- Let's check that we are in our new namespace, then deploy a new copy of DockerCoins

.exercise[

- Verify that the new namespace is empty:
  ```bash
  kubectl get all
  ```

]

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Deploying DockerCoins with YAML files

- The GitHub repository `jpetazzo/kubercoins` contains everything we need!

.exercise[

- Clone the kubercoins repository:
  ```bash
  cd ~
  git clone https://github.com/jpetazzo/kubercoins
  ```

- Create all the DockerCoins resources:
  ```bash
  kubectl create -f kubercoins
  ```

]

If the argument after `-f` is a directory, all the files in that directory are processed.

The subdirectories are *not* processed, unless we also add the `-R` flag.

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Viewing the deployed app

- Let's see if this worked correctly!

.exercise[

- Retrieve the port number allocated to the `webui` service:
  ```bash
  kubectl get svc webui
  ```

- Point our browser to http://X.X.X.X:3xxxx

]

If the graph shows up but stays at zero, give it a minute or two!
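
Instead of reading the port from the table, we can ask `kubectl` for just that field. This is a small sketch, assuming the `webui` service exposes a single port of type NodePort; the helper name `webui_url` is hypothetical.

```bash
# webui_url NODE_ADDRESS (hypothetical helper)
# Prints the URL of the webui service in the current namespace,
# using the node port allocated to its first port.
webui_url() {
  local port
  port=$(kubectl get svc webui -o jsonpath='{.spec.ports[0].nodePort}') || return 1
  echo "http://$1:$port"
}

# Usage (not executed here), with the address of any node of the cluster:
#   webui_url X.X.X.X
```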
.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)] --- ## Namespaces and isolation - Namespaces *do not* provide isolation - A pod in the `green` namespace can communicate with a pod in the `blue` namespace - A pod in the `default` namespace can communicate with a pod in the `kube-system` namespace - CoreDNS uses a different subdomain for each namespace - Example: from any pod in the cluster, you can connect to the Kubernetes API with: `https://kubernetes.default.svc.cluster.local:443/` .debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)] --- ## Isolating pods - Actual isolation is implemented with *network policies* - Network policies are resources (like deployments, services, namespaces...) - Network policies specify which flows are allowed: - between pods - from pods to the outside world - and vice-versa .debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)] --- ## Switch back to the default namespace - Let's make sure that we don't run future exercises in the `blue` namespace .exercise[ - Switch back to the original context: ```bash kubectl config set-context --current --namespace= ``` ] Note: we could have used `--namespace=default` for the same result. 
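
To double check which namespace the current context points to, we can read it back from the configuration. In this sketch the helper name `current_namespace` is hypothetical; it relies on the fact mentioned earlier that an empty namespace means `default`.

```bash
# current_namespace (hypothetical helper)
# Prints the namespace of the current context, or "default" if none is set.
current_namespace() {
  local ns
  ns=$(kubectl config view --minify -o jsonpath='{..namespace}')
  echo "${ns:-default}"
}

# Usage (not executed here):
#   current_namespace
```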
.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## Switching namespaces more easily

- We can also use a little helper tool called `kubens`:

  ```bash
  # Switch to namespace foo
  kubens foo
  # Switch back to the previous namespace
  kubens -
  ```

- On our clusters, `kubens` is called `kns` instead

  (so that it's even fewer keystrokes to switch namespaces)

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## `kubens` and `kubectx`

- With `kubens`, we can switch quickly between namespaces

- With `kubectx`, we can switch quickly between contexts

- Both tools are simple shell scripts available from https://github.com/ahmetb/kubectx

- On our clusters, they are installed as `kns` and `kctx`

  (for brevity and to avoid completion clashes between `kubectx` and `kubectl`)

.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

## `kube-ps1`

- It's easy to lose track of our current cluster / context / namespace

- `kube-ps1` makes it easy to track these, by showing them in our shell prompt

- It's a simple shell script available from https://github.com/jonmosco/kube-ps1

- On our clusters, `kube-ps1` is installed and included in `PS1`:
  ```
  [123.45.67.89] `(kubernetes-admin@kubernetes:default)` docker@node1 ~
  ```
  (The highlighted part is `context:namespace`, managed by `kube-ps1`)

- Highly recommended if you work across multiple contexts or namespaces!
.debug[[k8s/namespaces.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/namespaces.md)]

---

class: pic

.interstitial[]

---

name: toc-kustomize
class: title

Kustomize

.nav[
[Previous section](#toc-namespaces)
|
[Back to table of contents](#toc-chapter-4)
|
[Next section](#toc-healthchecks)
]

.debug[(automatically generated title slide)]

---

# Kustomize

- Kustomize lets us transform YAML files representing Kubernetes resources

- The original YAML files are valid resource files

  (e.g. they can be loaded with `kubectl apply -f`)

- They are left untouched by Kustomize

- Kustomize lets us define *overlays* that extend or change the resource files

.debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)]

---

## Differences with Helm

- Helm charts use placeholders `{{ like.this }}`

- Kustomize "bases" are standard Kubernetes YAML

- It is possible to use an existing set of YAML as a Kustomize base

- As a result, writing a Helm chart is more work ...

- ... But Helm charts are also more powerful; e.g.
they can:

  - use flags to conditionally include resources or blocks

  - check if a given Kubernetes API group is supported

  - [and much more](https://helm.sh/docs/chart_template_guide/)

.debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)]

---

## Kustomize concepts

- Kustomize needs a `kustomization.yaml` file

- That file can be a *base* or a *variant*

- If it's a *base*:

  - it lists YAML resource files to use

- If it's a *variant* (or *overlay*):

  - it refers to (at least) one *base*

  - and some *patches*

.debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)]

---

## An easy way to get started with Kustomize

- We are going to use [Replicated Ship](https://www.replicated.com/ship/) to experiment with Kustomize

- The [Replicated Ship CLI](https://github.com/replicatedhq/ship/releases) has been installed on our clusters

- Replicated Ship has multiple workflows; here is what we will do:

  - initialize a Kustomize overlay from a remote GitHub repository

  - customize some values using the web UI provided by Ship

  - look at the resulting files and apply them to the cluster

.debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)]

---

## Getting started with Ship

- We need to run `ship init` in a new directory

- `ship init` requires a URL to a remote repository containing Kubernetes YAML

- It will clone that repository and start a web UI

- Later, it can watch that repository and/or update from it

- We will use the [jpetazzo/kubercoins](https://github.com/jpetazzo/kubercoins) repository

  (it contains all the DockerCoins resources as YAML files)

.debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)]

---

## `ship init`

.exercise[

- Change to a new directory:
  ```bash
  mkdir ~/kustomcoins
  cd ~/kustomcoins
  ```

- Run `ship init` with the kubercoins
repository: ```bash ship init https://github.com/jpetazzo/kubercoins ``` ] .debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)] --- ## Access the web UI - `ship init` tells us to connect on `localhost:8800` - We need to replace `localhost` with the address of our node (since we run on a remote machine) - Follow the steps in the web UI, and change one parameter (e.g. set the number of replicas in the worker Deployment) - Complete the web workflow, and go back to the CLI .debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)] --- ## Inspect the results - Look at the content of our directory - `base` contains the kubercoins repository + a `kustomization.yaml` file - `overlays/ship` contains the Kustomize overlay referencing the base + our patch(es) - `rendered.yaml` is a YAML bundle containing the patched application - `.ship` contains a state file used by Ship .debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)] --- ## Using the results - We can `kubectl apply -f rendered.yaml` (on any version of Kubernetes) - Starting with Kubernetes 1.14, we can apply the overlay directly with: ```bash kubectl apply -k overlays/ship ``` - But let's not do that for now! 
- We will create a new copy of DockerCoins in another namespace .debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)] --- ## Deploy DockerCoins with Kustomize .exercise[ - Create a new namespace: ```bash kubectl create namespace kustomcoins ``` - Deploy DockerCoins: ```bash kubectl apply -f rendered.yaml --namespace=kustomcoins ``` - Or, with Kubernetes 1.14 and later, you can also do this: ```bash kubectl apply -k overlays/ship --namespace=kustomcoins ``` ] .debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)] --- ## Checking our new copy of DockerCoins - We can check the worker logs, or the web UI .exercise[ - Retrieve the NodePort number of the web UI: ```bash kubectl get service webui --namespace=kustomcoins ``` - Open it in a web browser - Look at the worker logs: ```bash kubectl logs deploy/worker --tail=10 --follow --namespace=kustomcoins ``` ] Note: it might take a minute or two for the worker to start. .debug[[k8s/kustomize.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/kustomize.md)] --- class: pic .interstitial[] --- name: toc-healthchecks class: title Healthchecks .nav[ [Previous section](#toc-kustomize) | [Back to table of contents](#toc-chapter-5) | [Next section](#toc-accessing-logs-from-the-cli) ] .debug[(automatically generated title slide)] --- # Healthchecks - Kubernetes provides two kinds of healthchecks: liveness and readiness - Healthchecks are *probes* that apply to *containers* (not to pods) - Each container can have two (optional) probes: - liveness = is this container dead or alive? - readiness = is this container ready to serve traffic? - Different probes are available (HTTP, TCP, program execution) - Let's see the difference and how to use them! 
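
As a preview, here is a minimal sketch of a pod that declares both kinds of probes on the same container. It reuses the `rng` image and port from the DockerCoins app; the pod name `rng-with-probes`, the file name, and the choice of probing the same HTTP endpoint twice are assumptions made for illustration only.

```bash
# Write a pod manifest with one liveness and one readiness probe.
cat > rng-with-probes.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: rng-with-probes
spec:
  containers:
  - name: rng
    image: dockercoins/rng:v0.1
    # Liveness: after repeated failures, the container is killed.
    livenessProbe:
      httpGet:
        path: /
        port: 80
    # Readiness: while failing, the pod receives no service traffic.
    readinessProbe:
      httpGet:
        path: /
        port: 80
EOF

# Then, on a cluster:
# kubectl apply -f rng-with-probes.yaml
```

In a real application, the two probes would often check different things (for instance, a cheap "process is responsive" check for liveness, and a "dependencies are reachable" check for readiness).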
.debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## Liveness probe - Indicates if the container is dead or alive - A dead container cannot come back to life - If the liveness probe fails, the container is killed (to make really sure that it's really dead; no zombies or undeads!) - What happens next depends on the pod's `restartPolicy`: - `Never`: the container is not restarted - `OnFailure` or `Always`: the container is restarted .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## When to use a liveness probe - To indicate failures that can't be recovered - deadlocks (causing all requests to time out) - internal corruption (causing all requests to error) - If the liveness probe fails *N* consecutive times, the container is killed - *N* is the `failureThreshold` (3 by default) .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## Readiness probe - Indicates if the container is ready to serve traffic - If a container becomes "unready" (let's say busy!) 
it might be ready again soon - If the readiness probe fails: - the container is *not* killed - if the pod is a member of a service, it is temporarily removed - it is re-added as soon as the readiness probe passes again .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## When to use a readiness probe - To indicate temporary failures - the application can only service *N* parallel connections - the runtime is busy doing garbage collection or initial data load - The container is marked as "not ready" after `failureThreshold` failed attempts (3 by default) - It is marked again as "ready" after `successThreshold` successful attempts (1 by default) .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## Different types of probes - HTTP request - specify URL of the request (and optional headers) - any status code between 200 and 399 indicates success - TCP connection - the probe succeeds if the TCP port is open - arbitrary exec - a command is executed in the container - exit status of zero indicates success .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## Benefits of using probes - Rolling updates proceed when containers are *actually ready* (as opposed to merely started) - Containers in a broken state get killed and restarted (instead of serving errors or timeouts) - Overloaded backends get removed from load balancer rotation (thus improving response times across the board) .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## Example: HTTP probe Here is a pod template for the `rng` web service of the DockerCoins app: ```yaml apiVersion: v1 kind: Pod metadata: name: rng-with-liveness spec: containers: - name: rng image: dockercoins/rng:v0.1 livenessProbe: httpGet: 
path: / port: 80 initialDelaySeconds: 10 periodSeconds: 1 ``` If the backend serves an error, or takes longer than 1s, 3 times in a row, it gets killed. .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## Example: exec probe Here is a pod template for a Redis server: ```yaml apiVersion: v1 kind: Pod metadata: name: redis-with-liveness spec: containers: - name: redis image: redis livenessProbe: exec: command: ["redis-cli", "ping"] ``` If the Redis process becomes unresponsive, it will be killed. .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- ## Details about liveness and readiness probes - Probes are executed at intervals of `periodSeconds` (default: 10) - The timeout for a probe is set with `timeoutSeconds` (default: 1) - A probe is considered successful after `successThreshold` successes (default: 1) - A probe is considered failing after `failureThreshold` failures (default: 3) - If a probe is not defined, it's as if there was an "always successful" probe .debug[[k8s/healthchecks.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/healthchecks.md)] --- class: pic .interstitial[] --- name: toc-accessing-logs-from-the-cli class: title Accessing logs from the CLI .nav[ [Previous section](#toc-healthchecks) | [Back to table of contents](#toc-chapter-5) | [Next section](#toc-centralized-logging) ] .debug[(automatically generated title slide)] --- # Accessing logs from the CLI - The `kubectl logs` command has limitations: - it cannot stream logs from multiple pods at a time - when showing logs from multiple pods, it mixes them all together - We are going to see how to do it better .debug[[k8s/logs-cli.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-cli.md)] --- ## Doing it manually - We *could* (if we were so inclined) write a program or script 
that would: - take a selector as an argument - enumerate all pods matching that selector (with `kubectl get -l ...`) - fork one `kubectl logs --follow ...` command per container - annotate the logs (the output of each `kubectl logs ...` process) with their origin - preserve ordering by using `kubectl logs --timestamps ...` and merge the output -- - We *could* do it, but thankfully, others did it for us already! .debug[[k8s/logs-cli.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-cli.md)] --- ## Stern [Stern](https://github.com/wercker/stern) is an open source project by [Wercker](http://www.wercker.com/). From the README: *Stern allows you to tail multiple pods on Kubernetes and multiple containers within the pod. Each result is color coded for quicker debugging.* *The query is a regular expression so the pod name can easily be filtered and you don't need to specify the exact id (for instance omitting the deployment id). If a pod is deleted it gets removed from tail and if a new pod is added it automatically gets tailed.* Exactly what we need! 
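
To appreciate what Stern automates, here is a rough sketch of the manual approach described earlier. The function name `tail_selector` is hypothetical, and the sketch simplifies one step: it starts one `kubectl logs` per pod (with `--all-containers`) instead of one per container, and it does not merge the streams in timestamp order.

```bash
# Hypothetical helper (not part of kubectl): follow the logs of all pods
# matching a label selector, prefixing each line with the pod it came from.
tail_selector() {
  local selector="$1" pod
  # "kubectl get pods -o name" prints names in the form "pod/<name>".
  for pod in $(kubectl get pods -l "$selector" -o name); do
    # One background "kubectl logs" per pod; sed annotates each line.
    kubectl logs --follow --timestamps --all-containers "$pod" \
      | sed "s|^|[$pod] |" &
  done
  # Return once every log stream has ended (or on Ctrl-C).
  wait
}

# Example:
# tail_selector app=rng
```

Unlike Stern, this sketch does not notice pods that are created or deleted after it starts.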
.debug[[k8s/logs-cli.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-cli.md)] --- ## Installing Stern - Run `stern` (without arguments) to check if it's installed: ``` $ stern Tail multiple pods and containers from Kubernetes Usage: stern pod-query [flags] ``` - If it is not installed, the easiest method is to download a [binary release](https://github.com/wercker/stern/releases) - The following commands will install Stern on a Linux Intel 64 bit machine: ```bash sudo curl -L -o /usr/local/bin/stern \ https://github.com/wercker/stern/releases/download/1.10.0/stern_linux_amd64 sudo chmod +x /usr/local/bin/stern ``` <!-- ##VERSION## --> .debug[[k8s/logs-cli.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-cli.md)] --- ## Using Stern - There are two ways to specify the pods whose logs we want to see: - `-l` followed by a selector expression (like with many `kubectl` commands) - with a "pod query," i.e. a regex used to match pod names - These two ways can be combined if necessary .exercise[ - View the logs for all the rng containers: ```bash stern rng ``` <!-- ```wait HTTP/1.1``` ```keys ^C``` --> ] .debug[[k8s/logs-cli.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-cli.md)] --- ## Stern convenient options - The `--tail N` flag shows the last `N` lines for each container (Instead of showing the logs since the creation of the container) - The `-t` / `--timestamps` flag shows timestamps - The `--all-namespaces` flag is self-explanatory .exercise[ - View what's up with the `weave` system containers: ```bash stern --tail 1 --timestamps --all-namespaces weave ``` <!-- ```wait weave-npc``` ```keys ^C``` --> ] .debug[[k8s/logs-cli.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-cli.md)] --- ## Using Stern with a selector - When specifying a selector, we can omit the value for a label - This will match all objects having that label 
(regardless of the value) - Everything created with `kubectl run` has a label `run` - We can use that property to view the logs of all the pods created with `kubectl run` - Similarly, everything created with `kubectl create deployment` has a label `app` .exercise[ - View the logs for all the things started with `kubectl create deployment`: ```bash stern -l app ``` <!-- ```wait units of work``` ```keys ^C``` --> ] .debug[[k8s/logs-cli.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-cli.md)] --- class: pic .interstitial[] --- name: toc-centralized-logging class: title Centralized logging .nav[ [Previous section](#toc-accessing-logs-from-the-cli) | [Back to table of contents](#toc-chapter-5) | [Next section](#toc-authentication-and-authorization) ] .debug[(automatically generated title slide)] --- # Centralized logging - Using `kubectl` or `stern` is simple; but it has drawbacks: - when a node goes down, its logs are not available anymore - we can only dump or stream logs; we want to search/index/count... - We want to send all our logs to a single place - We want to parse them (e.g. for HTTP logs) and index them - We want a nice web dashboard -- - We are going to deploy an EFK stack .debug[[k8s/logs-centralized.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-centralized.md)] --- ## What is EFK? 
- EFK consists of three components: - ElasticSearch (to store and index log entries) - Fluentd (to get container logs, process them, and put them in ElasticSearch) - Kibana (to view/search log entries with a nice UI) - The only component that we need to access from outside the cluster will be Kibana .debug[[k8s/logs-centralized.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-centralized.md)] --- ## Deploying EFK on our cluster - We are going to use a YAML file describing all the required resources .exercise[ - Load the YAML file into our cluster: ```bash kubectl apply -f ~/container.training/k8s/efk.yaml ``` ] If we [look at the YAML file](https://github.com/jpetazzo/container.training/blob/master/k8s/efk.yaml), we see that it creates a daemon set, two deployments, two services, and a few roles and role bindings (to give fluentd the required permissions). .debug[[k8s/logs-centralized.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-centralized.md)] --- ## The itinerary of a log line (before Fluentd) - A container writes a line on stdout or stderr - Both are typically piped to the container engine (Docker or otherwise) - The container engine reads the line, and sends it to a logging driver - The timestamp and stream (stdout or stderr) are added to the log line - With the default configuration for Kubernetes, the line is written to a JSON file (`/var/log/containers/pod-name_namespace_container-id.log`) - That file is read when we invoke `kubectl logs`; we can access it directly too .debug[[k8s/logs-centralized.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-centralized.md)] --- ## The itinerary of a log line (with Fluentd) - Fluentd runs on each node (thanks to a daemon set) - It bind-mounts `/var/log/containers` from the host (to access these files) - It continuously scans this directory for new files; reads them; parses them - Each log line becomes a JSON object, 
fully annotated with extra information: <br/>container id, pod name, Kubernetes labels... - These JSON objects are stored in ElasticSearch - ElasticSearch indexes the JSON objects - We can access the logs through Kibana (and perform searches, counts, etc.) .debug[[k8s/logs-centralized.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-centralized.md)] --- ## Accessing Kibana - Kibana offers a web interface that is relatively straightforward - Let's check it out! .exercise[ - Check which `NodePort` was allocated to Kibana: ```bash kubectl get svc kibana ``` - With our web browser, connect to Kibana ] .debug[[k8s/logs-centralized.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-centralized.md)] --- ## Using Kibana *Note: this is not a Kibana workshop! So this section is deliberately very terse.* - The first time you connect to Kibana, you must "configure an index pattern" - Just use the one that is suggested, `@timestamp`.red[*] - Then click "Discover" (in the top-left corner) - You should see container logs - Advice: in the left column, select a few fields to display, e.g.: `kubernetes.host`, `kubernetes.pod_name`, `stream`, `log` .red[*]If you don't see `@timestamp`, it's probably because no logs exist yet. <br/>Wait a bit, and double-check the logging pipeline! .debug[[k8s/logs-centralized.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-centralized.md)] --- ## Caveat emptor We are using EFK because it is relatively straightforward to deploy on Kubernetes, without having to redeploy or reconfigure our cluster. But it doesn't mean that it will always be the best option for your use-case. If you are running Kubernetes in the cloud, you might consider using the cloud provider's logging infrastructure (if it can be integrated with Kubernetes). The deployment method that we will use here has been simplified: there is only one ElasticSearch node. 
In a real deployment, you might use a cluster, both for performance and reliability reasons. But this is outside of the scope of this chapter. The YAML file that we used creates all the resources in the `default` namespace, for simplicity. In a real scenario, you will create the resources in the `kube-system` namespace or in a dedicated namespace. .debug[[k8s/logs-centralized.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/logs-centralized.md)] --- class: pic .interstitial[] --- name: toc-authentication-and-authorization class: title Authentication and authorization .nav[ [Previous section](#toc-centralized-logging) | [Back to table of contents](#toc-chapter-5) | [Next section](#toc-the-csr-api) ] .debug[(automatically generated title slide)] --- # Authentication and authorization *And first, a little refresher!* - Authentication = verifying the identity of a person On a UNIX system, we can authenticate with login+password, SSH keys ... - Authorization = listing what they are allowed to do On a UNIX system, this can include file permissions, sudoer entries ... - Sometimes abbreviated as "authn" and "authz" - In good modular systems, these things are decoupled (so we can e.g. change a password or SSH key without having to reset access rights) .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Authentication in Kubernetes - When the API server receives a request, it tries to authenticate it (it examines headers, certificates... 
anything available) - Many authentication methods are available and can be used simultaneously (we will see them on the next slide) - It's the job of the authentication method to produce: - the user name - the user ID - a list of groups - The API server doesn't interpret these; that'll be the job of *authorizers* .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Authentication methods - TLS client certificates (that's what we've been doing with `kubectl` so far) - Bearer tokens (a secret token in the HTTP headers of the request) - [HTTP basic auth](https://en.wikipedia.org/wiki/Basic_access_authentication) (carrying user and password in an HTTP header) - Authentication proxy (sitting in front of the API and setting trusted headers) .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Anonymous requests - If any authentication method *rejects* a request, it's denied (`401 Unauthorized` HTTP code) - If a request is neither rejected nor accepted by anyone, it's anonymous - the user name is `system:anonymous` - the list of groups is `[system:unauthenticated]` - By default, the anonymous user can't do anything (that's what you get if you just `curl` the Kubernetes API) .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Authentication with TLS certificates - This is enabled in most Kubernetes deployments - The user name is derived from the `CN` in the client certificates - The groups are derived from the `O` fields in the client certificate - From the point of view of the Kubernetes API, users do not exist (i.e. 
they are not stored in etcd or anywhere else) - Users can be created (and added to groups) independently of the API - The Kubernetes API can be set up to use your custom CA to validate client certs .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- class: extra-details ## Viewing our admin certificate - Let's inspect the certificate we've been using all this time! .exercise[ - This command will show the `CN` and `O` fields for our certificate: ```bash kubectl config view \ --raw \ -o json \ | jq -r .users[0].user[\"client-certificate-data\"] \ | openssl base64 -d -A \ | openssl x509 -text \ | grep Subject: ``` ] Let's break down that command together! 😅 .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- class: extra-details ## Breaking down the command - `kubectl config view` shows the Kubernetes user configuration - `--raw` includes certificate information (which shows as REDACTED otherwise) - `-o json` outputs the information in JSON format - `| jq ...` extracts the field with the user certificate (in base64) - `| openssl base64 -d -A` decodes the base64 format (now we have a PEM file) - `| openssl x509 -text` parses the certificate and outputs it as plain text - `| grep Subject:` shows us the line that interests us → We are user `kubernetes-admin`, in group `system:masters`. (We will see later how and why this gives us the permissions that we have.) 
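
To see where such a Subject line comes from, here is a minimal sketch that runs entirely offline: it generates a private key and a certificate signing request (CSR) whose subject carries a user name and a group. The values `alice` and `dev-team` are made up for illustration, and the CSR would still have to be signed by the cluster's CA before the API server would accept the resulting certificate.

```bash
# Work in a scratch directory so nothing is left in the current one.
workdir=$(mktemp -d)

# Generate a private key and a CSR.
# "alice" and "dev-team" are hypothetical values.
openssl req -new -newkey rsa:2048 -nodes \
  -keyout "$workdir/alice.key" -out "$workdir/alice.csr" \
  -subj "/O=dev-team/CN=alice" 2>/dev/null

# Show the subject: CN would become the user name, O the group.
openssl req -in "$workdir/alice.csr" -noout -subject
```

The exact formatting of the subject line depends on the OpenSSL version, but it should contain both `dev-team` and `alice`.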
.debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## User certificates in practice - The Kubernetes API server does not support certificate revocation (see issue [#18982](https://github.com/kubernetes/kubernetes/issues/18982)) - As a result, we don't have an easy way to terminate someone's access (if their key is compromised, or they leave the organization) - Option 1: re-create a new CA and re-issue everyone's certificates <br/> → Maybe OK if we only have a few users; no way otherwise - Option 2: don't use groups; grant permissions to individual users <br/> → Inconvenient if we have many users and teams; error-prone - Option 3: issue short-lived certificates (e.g. 24 hours) and renew them often <br/> → This can be facilitated by e.g. Vault or by the Kubernetes CSR API .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Authentication with tokens - Tokens are passed as HTTP headers: `Authorization: Bearer and-then-here-comes-the-token` - Tokens can be validated through a number of different methods: - static tokens hard-coded in a file on the API server - [bootstrap tokens](https://kubernetes.io/docs/reference/access-authn-authz/bootstrap-tokens/) (special case to create a cluster or join nodes) - [OpenID Connect tokens](https://kubernetes.io/docs/reference/access-authn-authz/authentication/#openid-connect-tokens) (to delegate authentication to compatible OAuth2 providers) - service accounts (these deserve more details, coming right up!) .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Service accounts - A service account is a user that exists in the Kubernetes API (it is visible with e.g. 
`kubectl get serviceaccounts`) - Service accounts can therefore be created / updated dynamically (they don't require hand-editing a file and restarting the API server) - A service account is associated with a set of secrets (the kind that you can view with `kubectl get secrets`) - Service accounts are generally used to grant permissions to applications, services... (as opposed to humans) .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- class: extra-details ## Token authentication in practice - We are going to list existing service accounts - Then we will extract the token for a given service account - And we will use that token to authenticate with the API .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- class: extra-details ## Listing service accounts .exercise[ - The resource name is `serviceaccount` or `sa` for short: ```bash kubectl get sa ``` ] There should be just one service account in the default namespace: `default`. .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- class: extra-details ## Finding the secret .exercise[ - List the secrets for the `default` service account: ```bash kubectl get sa default -o yaml SECRET=$(kubectl get sa default -o json | jq -r .secrets[0].name) ``` ] It should be named `default-token-XXXXX`. 
.debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- class: extra-details ## Extracting the token - The token is stored in the secret, wrapped with base64 encoding .exercise[ - View the secret: ```bash kubectl get secret $SECRET -o yaml ``` - Extract the token and decode it: ```bash TOKEN=$(kubectl get secret $SECRET -o json \ | jq -r .data.token | openssl base64 -d -A) ``` ] .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- class: extra-details ## Using the token - Let's send a request to the API, without and with the token .exercise[ - Find the ClusterIP for the `kubernetes` service: ```bash kubectl get svc kubernetes API=$(kubectl get svc kubernetes -o json | jq -r .spec.clusterIP) ``` - Connect without the token: ```bash curl -k https://$API ``` - Connect with the token: ```bash curl -k -H "Authorization: Bearer $TOKEN" https://$API ``` ] .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- class: extra-details ## Results - In both cases, we will get a "Forbidden" error - Without authentication, the user is `system:anonymous` - With authentication, it is shown as `system:serviceaccount:default:default` - The API "sees" us as a different user - But neither user has any rights, so we can't do nothin' - Let's change that! 
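
The `system:serviceaccount:default:default` name is not arbitrary: service account tokens are JSON Web Tokens (JWT), and the identity appears in the `sub` field of the token payload. The sketch below decodes that payload without verifying the signature; the helper name `jwt_payload` is hypothetical, and it assumes a `base64` command that accepts `-d` (as in GNU coreutils).

```bash
# Hypothetical helper: print the (unverified) JSON payload of a JWT.
jwt_payload() {
  local payload
  # The payload is the second dot-separated field, in URL-safe base64.
  payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # URL-safe base64 drops the trailing '=' padding; restore it.
  while [ $(( ${#payload} % 4 )) -ne 0 ]; do
    payload="${payload}="
  done
  printf '%s' "$payload" | base64 -d
}

# Example, with $TOKEN extracted as shown earlier:
# jwt_payload "$TOKEN"
```

This is only a debugging aid: the API server validates the token signature, which this sketch deliberately skips.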
.debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Authorization in Kubernetes - There are multiple ways to grant permissions in Kubernetes, called [authorizers](https://kubernetes.io/docs/reference/access-authn-authz/authorization/#authorization-modules): - [Node Authorization](https://kubernetes.io/docs/reference/access-authn-authz/node/) (used internally by kubelet; we can ignore it) - [Attribute-based access control](https://kubernetes.io/docs/reference/access-authn-authz/abac/) (powerful but complex and static; ignore it too) - [Webhook](https://kubernetes.io/docs/reference/access-authn-authz/webhook/) (each API request is submitted to an external service for approval) - [Role-based access control](https://kubernetes.io/docs/reference/access-authn-authz/rbac/) (associates permissions with users dynamically) - The one we want is the last one, generally abbreviated as RBAC .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Role-based access control - RBAC lets us specify fine-grained permissions - Permissions are expressed as *rules* - A rule is a combination of: - [verbs](https://kubernetes.io/docs/reference/access-authn-authz/authorization/#determine-the-request-verb) like create, get, list, update, delete... - resources (as in "API resource," like pods, nodes, services...) - resource names (to specify e.g. one specific pod instead of all pods) - in some cases, [subresources](https://kubernetes.io/docs/reference/access-authn-authz/rbac/#referring-to-resources) (e.g. 
logs are subresources of pods) .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## From rules to roles to rolebindings - A *role* is an API object containing a list of *rules* Example: role "external-load-balancer-configurator" can: - [list, get] resources [endpoints, services, pods] - [update] resources [services] - A *rolebinding* associates a role with a user Example: rolebinding "external-load-balancer-configurator": - associates user "external-load-balancer-configurator" - with role "external-load-balancer-configurator" - Yes, there can be users, roles, and rolebindings with the same name - It's a good idea for 1-1-1 bindings; not so much for 1-N ones .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Cluster-scope permissions - API resources Role and RoleBinding are for objects within a namespace - We can also define API resources ClusterRole and ClusterRoleBinding - These are a superset, allowing us to: - specify actions on cluster-wide objects (like nodes) - operate across all namespaces - We can create Role and RoleBinding resources within a namespace - ClusterRole and ClusterRoleBinding resources are global .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Pods and service accounts - A pod can be associated with a service account - by default, it is associated with the `default` service account - as we saw earlier, this service account has no permissions anyway - The associated token is exposed to the pod's filesystem (in `/var/run/secrets/kubernetes.io/serviceaccount/token`) - Standard Kubernetes tooling (like `kubectl`) will look for it there - So Kubernetes tools running in a pod will automatically use the service account 
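
To make this less magical, here is a minimal sketch of what such tooling does when it runs inside a pod: it reads the mounted token and CA certificate, then calls the API server at the address given by the `KUBERNETES_SERVICE_HOST` and `KUBERNETES_SERVICE_PORT` environment variables. The helper name `in_cluster_get` is hypothetical, and `SA_DIR` is made overridable only so that the sketch can be tried outside a pod.

```bash
# Directory where Kubernetes mounts the service account credentials.
SA_DIR="${SA_DIR:-/var/run/secrets/kubernetes.io/serviceaccount}"

# Hypothetical helper: GET an API path as the pod's service account.
in_cluster_get() {
  local token
  token=$(cat "$SA_DIR/token")
  # ca.crt lets curl verify the API server certificate (no need for -k).
  curl --silent --cacert "$SA_DIR/ca.crt" \
    --header "Authorization: Bearer $token" \
    "https://${KUBERNETES_SERVICE_HOST}:${KUBERNETES_SERVICE_PORT}$1"
}

# Example (inside a pod): list pods in the pod's own namespace.
# in_cluster_get "/api/v1/namespaces/$(cat "$SA_DIR/namespace")/pods"
```

Whether the request succeeds then depends only on the permissions granted to the service account.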
.debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## In practice - We are going to create a service account - We will use a default cluster role (`view`) - We will bind together this role and this service account - Then we will run a pod using that service account - In this pod, we will install `kubectl` and check our permissions .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Creating a service account - We will call the new service account `viewer` (note that nothing prevents us from calling it `view`, like the role) .exercise[ - Create the new service account: ```bash kubectl create serviceaccount viewer ``` - List service accounts now: ```bash kubectl get serviceaccounts ``` ] .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Binding a role to the service account - Binding a role = creating a *rolebinding* object - We will call that object `viewercanview` (but again, we could call it `view`) .exercise[ - Create the new role binding: ```bash kubectl create rolebinding viewercanview \ --clusterrole=view \ --serviceaccount=default:viewer ``` ] It's important to note a couple of details in these flags... .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Roles vs Cluster Roles - We used `--clusterrole=view` - What would have happened if we had used `--role=view`? 
- we would have bound the role `view` from the local namespace <br/>(instead of the cluster role `view`) - the command would have worked fine (no error) - but later, our API requests would have been denied - This is a deliberate design decision (we can reference roles that don't exist, and create/update them later) .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Users vs Service Accounts - We used `--serviceaccount=default:viewer` - What would have happened if we had used `--user=default:viewer`? - we would have bound the role to a user instead of a service account - again, the command would have worked fine (no error) - ...but our API requests would have been denied later - What about the `default:` prefix? - that's the namespace of the service account - yes, it could be inferred from context, but... `kubectl` requires it .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Testing - We will run an `alpine` pod and install `kubectl` there .exercise[ - Run a one-time pod: ```bash kubectl run eyepod --rm -ti --restart=Never \ --serviceaccount=viewer \ --image alpine ``` - Install `curl`, then use it to install `kubectl`: ```bash apk add --no-cache curl URLBASE=https://storage.googleapis.com/kubernetes-release/release KUBEVER=$(curl -s $URLBASE/stable.txt) curl -LO $URLBASE/$KUBEVER/bin/linux/amd64/kubectl chmod +x kubectl ``` ] .debug[[k8s/authn-authz.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/authn-authz.md)] --- ## Running `kubectl` in the pod - We'll try to use our `view` permissions, then to create an object .exercise[ - Check that we can, indeed, view things: ```bash ./kubectl get all ``` - But that we can't create things: ``` ./kubectl create deployment testrbac --image=nginx ``` - Exit the container with `exit` or `^D` <!-- ```keys ^D``` --> ] 
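
For reference, the role binding that we just tested can also be written declaratively. The sketch below writes a manifest that should be equivalent to the `kubectl create rolebinding viewercanview` command used earlier; the file name is an arbitrary choice. Note how the two details discussed previously show up: `roleRef` points to a `ClusterRole`, and the subject is a `ServiceAccount` with an explicit namespace.

```bash
# Write the RoleBinding as a manifest.
cat > viewercanview.yaml <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: viewercanview
  namespace: default
subjects:
# The subject is a service account (not a user), with its namespace.
- kind: ServiceAccount
  name: viewer
  namespace: default
roleRef:
  # We reference the cluster role "view", not a namespaced role.
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: view
EOF

# Then, on a cluster:
# kubectl apply -f viewercanview.yaml
```

Because this is a RoleBinding (and not a ClusterRoleBinding), the permissions of the `view` cluster role are granted only within the `default` namespace.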
---

## Testing directly with `kubectl`

- We can also check for permission with `kubectl auth can-i`:
  ```bash
  kubectl auth can-i list nodes
  kubectl auth can-i create pods
  kubectl auth can-i get pod/name-of-pod
  kubectl auth can-i get /url-fragment-of-api-request/
  kubectl auth can-i '*' services
  ```

- And we can check permissions on behalf of other users:
  ```bash
  kubectl auth can-i list nodes \
          --as some-user
  kubectl auth can-i list nodes \
          --as system:serviceaccount:<namespace>:<name-of-service-account>
  ```

---

class: extra-details

## Where does this `view` role come from?

- Kubernetes defines a number of ClusterRoles intended to be bound to users

- `cluster-admin` can do *everything* (think `root` on UNIX)

- `admin` can do *almost everything* (except e.g.
changing resource quotas and limits)

- `edit` is similar to `admin`, but cannot view or edit permissions

- `view` has read-only access to most resources, except permissions and secrets

*In many situations, these roles will be all you need.*

*You can also customize them!*

---

class: extra-details

## Customizing the default roles

- If you need to *add* permissions to these default roles (or others),
  <br/>
  you can do it through the [ClusterRole Aggregation](https://kubernetes.io/docs/reference/access-authn-authz/rbac/#aggregated-clusterroles) mechanism

- This happens by creating a ClusterRole with the following labels:
  ```yaml
  metadata:
    labels:
      rbac.authorization.k8s.io/aggregate-to-admin: "true"
      rbac.authorization.k8s.io/aggregate-to-edit: "true"
      rbac.authorization.k8s.io/aggregate-to-view: "true"
  ```

- The permissions of this ClusterRole will be added to `admin`/`edit`/`view` respectively

- This is particularly useful when using CustomResourceDefinitions

  (since Kubernetes cannot guess which resources are sensitive and which ones aren't)

---

class: extra-details

## Where do our permissions come from?

- When interacting with the Kubernetes API, we are using a client certificate

- We saw previously that this client certificate contained:

  `CN=kubernetes-admin` and `O=system:masters`

- Let's look for these in existing ClusterRoleBindings:
  ```bash
  kubectl get clusterrolebindings -o yaml |
    grep -e kubernetes-admin -e system:masters
  ```

  (`system:masters` should show up, but not `kubernetes-admin`.)

- Where does this match come from?
---

class: extra-details

## The `system:masters` group

- If we eyeball the output of `kubectl get clusterrolebindings -o yaml`, we'll find out!

- It is in the `cluster-admin` binding:
  ```bash
  kubectl describe clusterrolebinding cluster-admin
  ```

- This binding associates `system:masters` with the cluster role `cluster-admin`

- And the `cluster-admin` is, basically, `root`:
  ```bash
  kubectl describe clusterrole cluster-admin
  ```

---

class: extra-details

## Figuring out who can do what

- For auditing purposes, sometimes we want to know who can perform an action

- There is a proof-of-concept tool by Aqua Security which does exactly that:

  https://github.com/aquasecurity/kubectl-who-can

- This is one way to install it:
  ```bash
  docker run --rm -v /usr/local/bin:/go/bin golang \
         go get -v github.com/aquasecurity/kubectl-who-can
  ```

- This is one way to use it:
  ```bash
  kubectl-who-can create pods
  ```

---

name: toc-the-csr-api
class: title

The CSR API

.nav[
[Previous section](#toc-authentication-and-authorization)
|
[Back to table of contents](#toc-chapter-5)
|
[Next section](#toc-pod-security-policies)
]

---

# The CSR API

- The Kubernetes API exposes CSR resources

- We can use these resources to issue TLS certificates

- First, we will go through a quick reminder about TLS certificates

- Then, we will see how to obtain a certificate for a user

- We will use that certificate to authenticate with the cluster

- Finally, we will grant some privileges to that user
---

## Reminder about TLS

- TLS (Transport Layer Security) is a protocol providing:

  - encryption (to prevent eavesdropping)

  - authentication (using public key cryptography)

- When we access an https:// URL, the server authenticates itself

  (it proves its identity to us; as if it were "showing its ID")

- But we can also have mutual TLS authentication (mTLS)

  (client proves its identity to server; server proves its identity to client)

---

## Authentication with certificates

- To authenticate, someone (client or server) needs:

  - a *private key* (that remains known only to them)

  - a *public key* (that they can distribute)

  - a *certificate* (associating the public key with an identity)

- A message encrypted with the private key can only be decrypted with the public key

  (and vice versa)

- If I use someone's public key to encrypt/decrypt their messages,
  <br/>
  I can be certain that I am talking to them / they are talking to me

- The certificate proves that I have the correct public key for them

---

## Certificate generation workflow

This is what I do if I want to obtain a certificate.

1. Create public and private keys.

2. Create a Certificate Signing Request (CSR).

   (The CSR contains the identity that I claim and a public key.)

3. Send that CSR to the Certificate Authority (CA).

4. The CA verifies that I can claim the identity in the CSR.

5. The CA generates my certificate and gives it to me.

The CA (or anyone else) never needs to know my private key.
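
The five steps above can be tried locally with OpenSSL. This is only a sketch: the throwaway CA named `demo-ca`, the file names, and the one-day validity are assumptions made for illustration, and a real CA would check the claimed identity before signing.

```bash
set -eu
# Work in a scratch directory so that nothing is left behind in the current one.
cd "$(mktemp -d)"

# A throwaway CA (hypothetical name "demo-ca"), standing in for a real one.
openssl req -x509 -newkey rsa:2048 -nodes -keyout ca-key.pem \
        -subj "/CN=demo-ca" -days 1 -out ca.pem 2>/dev/null

# Steps 1 and 2: create a key pair, and a CSR claiming an identity.
openssl req -newkey rsa:2048 -nodes -keyout key.pem \
        -subj "/CN=jean.doe/O=devs" -out csr.pem 2>/dev/null

# Steps 3 to 5: the CA receives csr.pem only, checks its signature,
# and issues the certificate.
openssl req -in csr.pem -noout -verify 2>/dev/null
openssl x509 -req -in csr.pem -CA ca.pem -CAkey ca-key.pem \
        -CAcreateserial -days 1 -out cert.pem 2>/dev/null

# Exits with status 0 when the certificate chains back to the CA.
openssl verify -CAfile ca.pem cert.pem
```

Note that the signing step works from `csr.pem` alone: `key.pem` is never handed to the CA.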
---

## The CSR API

- The Kubernetes API has a CertificateSigningRequest resource type

  (we can list them with e.g. `kubectl get csr`)

- We can create a CSR object

  (= upload a CSR to the Kubernetes API)

- Then, using the Kubernetes API, we can approve/deny the request

- If we approve the request, the Kubernetes API generates a certificate

- The certificate gets attached to the CSR object and can be retrieved

---

## Using the CSR API

- We will show how to use the CSR API to obtain user certificates

- This will be a rather complex demo

- ... And yet, we will take a few shortcuts to simplify it

  (but it will illustrate the general idea)

- The demo also won't be automated

  (we would have to write extra code to make it fully functional)

---

## General idea

- We will create a Namespace named "users"

- Each user will get a ServiceAccount in that Namespace

- That ServiceAccount will give read/write access to *one* CSR object

- Users will use that ServiceAccount's token to submit a CSR

- We will approve the CSR (or not)

- Users can then retrieve their certificate from their CSR object

- ...And use that certificate for subsequent interactions

---

## Resource naming

For a user named `jean.doe`, we will have:

- ServiceAccount `jean.doe` in Namespace `users`

- CertificateSigningRequest `users:jean.doe`

- ClusterRole `users:jean.doe` giving read/write access to that CSR

- ClusterRoleBinding `users:jean.doe` binding ClusterRole and ServiceAccount
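
As a rough illustration of the naming scheme above, the resources for `jean.doe` could look like the manifest below. This is a sketch, not necessarily the content of the YAML file used in the exercises; the exact verbs and the scratch file location are assumptions. The separate `create` rule is there because RBAC cannot restrict `create` requests by resource name.

```bash
# Write the sketch to a scratch file (hypothetical location).
manifest="$(mktemp -d)/users-jean.doe.yaml"
cat > "$manifest" <<'EOF'
apiVersion: v1
kind: ServiceAccount
metadata:
  name: jean.doe
  namespace: users
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: users:jean.doe
rules:
# Creation cannot be limited to a given name, hence this broader rule.
- apiGroups: ["certificates.k8s.io"]
  resources: ["certificatesigningrequests"]
  verbs: ["create"]
# Everything else is limited to the one CSR object named after the user.
- apiGroups: ["certificates.k8s.io"]
  resources: ["certificatesigningrequests"]
  resourceNames: ["users:jean.doe"]
  verbs: ["get", "update", "delete"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: users:jean.doe
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: users:jean.doe
subjects:
- kind: ServiceAccount
  name: jean.doe
  namespace: users
EOF
echo "Manifest written to $manifest"
```

It could then be loaded with `kubectl apply -f "$manifest"`, once the `users` Namespace exists.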
---

## Creating the user's resources

.warning[If you want to use a name other than `jean.doe`, update the YAML file!]

.exercise[

- Create the global namespace for all users:
  ```bash
  kubectl create namespace users
  ```

- Create the ServiceAccount, ClusterRole, ClusterRoleBinding for `jean.doe`:
  ```bash
  kubectl apply -f ~/container.training/k8s/users:jean.doe.yaml
  ```

]

---

## Extracting the user's token

- Let's obtain the user's token and give it to them

  (the token will be their password)

.exercise[

- List the user's secrets:
  ```bash
  kubectl --namespace=users describe serviceaccount jean.doe
  ```

- Show the user's token:
  ```bash
  kubectl --namespace=users describe secret `jean.doe-token-xxxxx`
  ```

]

---

## Configure `kubectl` to use the token

- Let's create a new context that will use that token to access the API

.exercise[

- Add a new identity to our kubeconfig file:
  ```bash
  kubectl config set-credentials token:jean.doe --token=...
  ```

- Add a new context using that identity:
  ```bash
  kubectl config set-context jean.doe --user=token:jean.doe --cluster=kubernetes
  ```

]

---

## Access the API with the token

- Let's check that our access rights are set properly

.exercise[

- Try to access any resource:
  ```bash
  kubectl get pods
  ```
  (This should tell us "Forbidden")

- Try to access "our" CertificateSigningRequest:
  ```bash
  kubectl get csr users:jean.doe
  ```
  (This should tell us "NotFound")

]

---

## Create a key and a CSR

- There are many tools to generate TLS keys and CSRs

- Let's use OpenSSL; it's not the best one, but it's installed everywhere

  (many people prefer cfssl, easyrsa, or other tools; that's fine too!)

.exercise[

- Generate the key and certificate signing request:
  ```bash
  openssl req -newkey rsa:2048 -nodes -keyout key.pem \
          -new -subj /CN=jean.doe/O=devs/ -out csr.pem
  ```

]

The command above generates:

- a 2048-bit RSA key, without encryption, stored in key.pem
- a CSR for the name `jean.doe` in group `devs`

---

## Inside the Kubernetes CSR object

- The Kubernetes CSR object is a thin wrapper around the CSR PEM file

- The PEM file needs to be encoded to base64 on a single line

  (we will use `base64 -w0` for that purpose)

- The Kubernetes CSR object also needs to list the right "usages"

  (these are flags indicating how the certificate can be used)

---

## Sending the CSR to Kubernetes

.exercise[

- Generate and create the CSR resource:
  ```bash
  kubectl apply -f - <<EOF
  apiVersion: certificates.k8s.io/v1beta1
  kind: CertificateSigningRequest
  metadata:
    name: users:jean.doe
  spec:
    request: $(base64 -w0 < csr.pem)
    usages:
    - digital signature
    - key encipherment
    - client auth
  EOF
  ```

]

---

## Adjusting certificate expiration

- By default, the CSR API generates certificates valid for 1 year

- We want to generate short-lived certificates, so we will lower that to 1 hour

- For now, this is configured [through an experimental controller manager flag](https://github.com/kubernetes/kubernetes/issues/67324)

.exercise[

- Edit the static pod definition for the controller manager:
  ```bash
  sudo vim /etc/kubernetes/manifests/kube-controller-manager.yaml
  ```

- In the list of flags, add the following line:
  ```bash
  - --experimental-cluster-signing-duration=1h
  ```

]

---

## Verifying and approving the CSR

- Let's inspect the CSR, and if it is valid, approve it

.exercise[

- Switch back to `cluster-admin`:
  ```bash
  kctx -
  ```

- Inspect the CSR:
  ```bash
  kubectl describe csr users:jean.doe
  ```

- Approve it:
  ```bash
  kubectl certificate approve users:jean.doe
  ```

]

---

## Obtaining the certificate

.exercise[

- Switch back to the user's identity:
  ```bash
  kctx -
  ```

- Retrieve the updated CSR object and extract the certificate:
  ```bash
  kubectl get csr users:jean.doe \
          -o jsonpath={.status.certificate} \
          | base64 -d > cert.pem
  ```

- Inspect the certificate:
  ```bash
  openssl x509 -in cert.pem -text -noout
  ```

]

---

## Using the certificate

.exercise[

- Add the key and certificate to kubeconfig:
  ```bash
  kubectl config set-credentials cert:jean.doe --embed-certs \
          --client-certificate=cert.pem \
          --client-key=key.pem
  ```

- Update the user's context to use the key and cert to authenticate:
  ```bash
  kubectl config set-context jean.doe --user cert:jean.doe
  ```

- Confirm that we are seen as `jean.doe` (but don't have permissions):
  ```bash
  kubectl get pods
  ```

]

---

## What's missing?

We have just shown, step by step, a method to issue short-lived certificates for users.

To be usable in real environments, we would need to add:

- a kubectl helper to automatically generate the CSR and obtain the cert

  (and transparently renew the cert when needed)

- a Kubernetes controller to automatically validate and approve CSRs

  (checking that the subject and groups are valid)

- a way for the users to know the groups to add to their CSR

  (e.g.: annotations on their ServiceAccount + read access to the ServiceAccount)

---

## Is this realistic?

- Larger organizations typically integrate with their own directory

- The general principle, however, is the same:

  - users have long-term credentials (password, token, ...)

  - they use these credentials to obtain other, short-lived credentials

- This provides enhanced security:

  - the long-term credentials can use long passphrases, 2FA, HSM...
  - the short-term credentials are more convenient to use

  - we get strong security *and* convenience

- Systems like Vault also have certificate issuance mechanisms

---

name: toc-pod-security-policies
class: title

Pod Security Policies

.nav[
[Previous section](#toc-the-csr-api)
|
[Back to table of contents](#toc-chapter-5)
|
[Next section](#toc-exposing-http-services-with-ingress-resources)
]

---

# Pod Security Policies

- By default, our pods and containers can do *everything*

  (including taking over the entire cluster)

- We are going to show an example of a malicious pod

- Then we will explain how to avoid this with PodSecurityPolicies

- We will enable PodSecurityPolicies on our cluster

- We will create a couple of policies (restricted and permissive)

- Finally we will see how to use them to improve security on our cluster

---

## Setting up a namespace

- For simplicity, let's work in a separate namespace

- Let's create a new namespace called "green"

.exercise[

- Create the "green" namespace:
  ```bash
  kubectl create namespace green
  ```

- Change to that namespace:
  ```bash
  kns green
  ```

]

---

## Creating a basic Deployment

- Just to check that everything works correctly, deploy NGINX

.exercise[

- Create a Deployment using the official NGINX image:
  ```bash
  kubectl create deployment web --image=nginx
  ```

- Confirm that the Deployment, ReplicaSet, and Pod exist, and that the Pod is running:
  ```bash
  kubectl get all
  ```

]
---

## One example of malicious pods

- We will now show an escalation technique in action

- We will deploy a DaemonSet that adds our SSH key to the root account

  (on *each* node of the cluster)

- The Pods of the DaemonSet will do so by mounting `/root` from the host

.exercise[

- Check the file `k8s/hacktheplanet.yaml` with a text editor:
  ```bash
  vim ~/container.training/k8s/hacktheplanet.yaml
  ```

- If you would like, change the SSH key (by changing the GitHub user name)

]

---

## Deploying the malicious pods

- Let's deploy our "exploit"!

.exercise[

- Create the DaemonSet:
  ```bash
  kubectl create -f ~/container.training/k8s/hacktheplanet.yaml
  ```

- Check that the pods are running:
  ```bash
  kubectl get pods
  ```

- Confirm that the SSH key was added to the node's root account:
  ```bash
  sudo cat /root/.ssh/authorized_keys
  ```

]

---

## Cleaning up

- Before setting up our PodSecurityPolicies, clean up that namespace

.exercise[

- Remove the DaemonSet:
  ```bash
  kubectl delete daemonset hacktheplanet
  ```

- Remove the Deployment:
  ```bash
  kubectl delete deployment web
  ```

]

---

## Pod Security Policies in theory

- To use PSPs, we need to activate their specific *admission controller*

- That admission controller will intercept each pod creation attempt

- It will look at:

  - *who/what* is creating the pod

  - which PodSecurityPolicies they can use

  - which PodSecurityPolicies can be used by the Pod's ServiceAccount

- Then it will compare the Pod with each
  PodSecurityPolicy one by one

- If a PodSecurityPolicy accepts all the parameters of the Pod, the Pod is created

- Otherwise, the Pod creation is denied and it won't even show up in `kubectl get pods`

---

## Pod Security Policies fine print

- With RBAC, using a PSP corresponds to the verb `use` on the PSP

  (that makes sense, right?)

- If no PSP is defined, no Pod can be created

  (even by cluster admins)

- Pods that are already running are *not* affected

- If we create a Pod directly, it can use a PSP to which *we* have access

- If the Pod is created by e.g. a ReplicaSet or DaemonSet, it's different:

  - the ReplicaSet / DaemonSet controllers don't have access to *our* policies

  - therefore, we need to give access to the PSP to the Pod's ServiceAccount

---

## Pod Security Policies in practice

- We are going to enable the PodSecurityPolicy admission controller

- At that point, we won't be able to create any more pods (!)
- Then we will create a couple of PodSecurityPolicies

- ...And associated ClusterRoles (giving `use` access to the policies)

- Then we will create RoleBindings to grant these roles to ServiceAccounts

- We will verify that we can't run our "exploit" anymore

---

## Enabling Pod Security Policies

- To enable Pod Security Policies, we need to enable their *admission plugin*

- This is done by adding a flag to the API server

- On clusters deployed with `kubeadm`, the control plane runs in static pods

- These pods are defined in YAML files located in `/etc/kubernetes/manifests`

- Kubelet watches this directory

- Each time a file is added/removed there, kubelet creates/deletes the corresponding pod

- Updating a file causes the pod to be deleted and recreated

---

## Updating the API server flags

- Let's edit the manifest for the API server pod

.exercise[

- Have a look at the static pods:
  ```bash
  ls -l /etc/kubernetes/manifests
  ```

- Edit the one corresponding to the API server:
  ```bash
  sudo vim /etc/kubernetes/manifests/kube-apiserver.yaml
  ```

]

---

## Adding the PSP admission plugin

- There should already be a line with `--enable-admission-plugins=...`

- Let's add `PodSecurityPolicy` on that line

.exercise[

- Locate the line with `--enable-admission-plugins=`

- Add `PodSecurityPolicy`

  It should read: `--enable-admission-plugins=NodeRestriction,PodSecurityPolicy`

- Save, quit

]

---

## Waiting for the API server to restart

- The kubelet detects that the
  file was modified

- It kills the API server pod, and starts a new one

- During that time, the API server is unavailable

.exercise[

- Wait until the API server is available again

]

---

## Check that the admission plugin is active

- Normally, we can't create any Pod at this point

.exercise[

- Try to create a Pod directly:
  ```bash
  kubectl run testpsp1 --image=nginx --restart=Never
  ```

- Try to create a Deployment:
  ```bash
  kubectl run testpsp2 --image=nginx
  ```

- Look at existing resources:
  ```bash
  kubectl get all
  ```

]

We can get hints at what's happening by looking at the ReplicaSet and Events.

---

## Introducing our Pod Security Policies

- We will create two policies:

  - privileged (allows everything)

  - restricted (blocks some unsafe mechanisms)

- For each policy, we also need an associated ClusterRole granting *use*

---

## Creating our Pod Security Policies

- We have a couple of files, each defining a PSP and associated ClusterRole:

  - k8s/psp-privileged.yaml: policy `privileged`, role `psp:privileged`
  - k8s/psp-restricted.yaml: policy `restricted`, role `psp:restricted`

.exercise[

- Create both policies and their associated ClusterRoles:
  ```bash
  kubectl create -f ~/container.training/k8s/psp-restricted.yaml
  kubectl create -f ~/container.training/k8s/psp-privileged.yaml
  ```
]

- The privileged policy comes from [the Kubernetes documentation](https://kubernetes.io/docs/concepts/policy/pod-security-policy/#example-policies)

- The restricted policy is inspired by that same documentation page
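
To give an idea of what such a file pairs together, here is a sketch of a restrictive policy and of the ClusterRole granting `use` on it. It is not the content of `k8s/psp-restricted.yaml`: the list of allowed volumes and the scratch file location are assumptions, and the `policy/v1beta1` API shown here only exists on clusters older than Kubernetes 1.25.

```bash
# Write the sketch to a scratch file (hypothetical location).
psp_manifest="$(mktemp -d)/psp-restricted-sketch.yaml"
cat > "$psp_manifest" <<'EOF'
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: restricted
spec:
  # No privileged containers, no access to host namespaces.
  privileged: false
  hostNetwork: false
  hostIPC: false
  hostPID: false
  # hostPath is deliberately absent from the allowed volume types.
  volumes:
  - configMap
  - emptyDir
  - projected
  - secret
  - downwardAPI
  - persistentVolumeClaim
  # These four strategies are mandatory; RunAsAny is the most lenient rule.
  runAsUser:
    rule: RunAsAny
  seLinux:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: psp:restricted
rules:
# "use" is the verb checked by the PodSecurityPolicy admission plugin.
- apiGroups: ["policy"]
  resources: ["podsecuritypolicies"]
  resourceNames: ["restricted"]
  verbs: ["use"]
EOF
echo "Manifest written to $psp_manifest"
```

The ClusterRole does nothing by itself: it only takes effect once it is bound to a user, group, or ServiceAccount.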
---

## Check that we can create Pods again

- We haven't bound the policy to any user yet

- But `cluster-admin` can implicitly `use` all policies

.exercise[

- Check that we can now create a Pod directly:
  ```bash
  kubectl run testpsp3 --image=nginx --restart=Never
  ```

- Create a Deployment as well:
  ```bash
  kubectl run testpsp4 --image=nginx
  ```

- Confirm that the Deployment is *not* creating any Pods:
  ```bash
  kubectl get all
  ```

]

---

## What's going on?

- We can create Pods directly (thanks to our root-like permissions)

- The Pods corresponding to a Deployment are created by the ReplicaSet controller

- The ReplicaSet controller does *not* have root-like permissions

- We need to either:

  - grant permissions to the ReplicaSet controller

  *or*

  - grant permissions to our Pods' ServiceAccount

- The first option would allow *anyone* to create pods

- The second option will allow us to scope the permissions better

---

## Binding the restricted policy

- Let's bind the role `psp:restricted` to ServiceAccount `green:default`

  (aka the default ServiceAccount in the green Namespace)

- This will allow Pod creation in the green Namespace

  (because these Pods will be using that ServiceAccount automatically)

.exercise[

- Create the following RoleBinding:
  ```bash
  kubectl create rolebinding psp:restricted \
          --clusterrole=psp:restricted \
          --serviceaccount=green:default
  ```

]

---

## Trying it out

- The Deployments that we created earlier will *eventually* recover

  (the
  ReplicaSet controller will retry creating Pods every once in a while)

- If we create a new Deployment now, it should work immediately

.exercise[

- Create a simple Deployment:
  ```bash
  kubectl create deployment testpsp5 --image=nginx
  ```

- Look at the Pods that have been created:
  ```bash
  kubectl get all
  ```

]

---

## Trying to hack the cluster

- Let's create the same DaemonSet we used earlier

.exercise[

- Create a hostile DaemonSet:
  ```bash
  kubectl create -f ~/container.training/k8s/hacktheplanet.yaml
  ```

- Look at the state of the namespace:
  ```bash
  kubectl get all
  ```

]

---

class: extra-details

## What's in our restricted policy?

- The restricted PSP is similar to the one provided in the docs, but:

  - it allows containers to run as root

  - it doesn't drop capabilities

- Many containers run as root by default, and would require additional tweaks

- Many containers use e.g. `chown`, which requires a specific capability

  (that's the case for the NGINX official image, for instance)

- We still block: hostPath, privileged containers, and much more!

---

class: extra-details

## The case of static pods

- If we list the pods in the `kube-system` namespace, `kube-apiserver` is missing

- However, the API server is obviously running

  (otherwise, `kubectl get pods --namespace=kube-system` wouldn't work)

- The API server Pod is created directly by kubelet

  (without going through the PSP admission plugin)

- Then, kubelet creates a "mirror pod" representing that Pod in etcd

- That "mirror pod" creation goes through the PSP admission plugin

- And it gets blocked!
- This can be fixed by binding `psp:privileged` to group `system:nodes`

---

## .warning[Before moving on...]

- Our cluster is currently broken

  (we can't create pods in namespaces kube-system, default, ...)

- We need to either:

  - disable the PSP admission plugin

  - allow use of PSP to relevant users and groups

- For instance, we could:

  - bind `psp:restricted` to the group `system:authenticated`

  - bind `psp:privileged` to the ServiceAccount `kube-system:default`

---

name: toc-exposing-http-services-with-ingress-resources
class: title

Exposing HTTP services with Ingress resources

.nav[
[Previous section](#toc-pod-security-policies)
|
[Back to table of contents](#toc-chapter-6)
|
[Next section](#toc-collecting-metrics-with-prometheus)
]

---

# Exposing HTTP services with Ingress resources

- *Services* give us a way to access a pod or a set of pods

- Services can be exposed to the outside world:

  - with type `NodePort` (by default, on a port between 30000 and 32767)

  - with type `LoadBalancer` (allocating an external load balancer)

- What about HTTP services?

  - how can we expose `webui`, `rng`, `hasher`?

  - the Kubernetes dashboard?

  - a new version of `webui`?

---

## Exposing HTTP services

- If we use `NodePort` services, clients have to specify port numbers

  (i.e. http://xxxxx:31234 instead of just http://xxxxx)

- `LoadBalancer` services are nice, but:

  - they are not available in all environments

  - they often carry an additional cost (e.g.
    they provision an ELB)

  - they require one extra step for DNS integration
    <br/>
    (waiting for the `LoadBalancer` to be provisioned; then adding it to DNS)

- We could build our own reverse proxy

---

## Building a custom reverse proxy

- There are many options available:

  Apache, HAProxy, Hipache, NGINX, Traefik, ...

  (look at [jpetazzo/aiguillage](https://github.com/jpetazzo/aiguillage) for a minimal reverse proxy configuration using NGINX)

- Most of these options require us to update/edit configuration files after each change

- Some of them can pick up virtual hosts and backends from a configuration store

- Wouldn't it be nice if this configuration could be managed with the Kubernetes API?

--

- Enter.red[¹] *Ingress* resources!

.footnote[.red[¹] Pun maybe intended.]

---

## Ingress resources

- Kubernetes API resource (`kubectl get ingress`/`ingresses`/`ing`)

- Designed to expose HTTP services

- Basic features:

  - load balancing
  - SSL termination
  - name-based virtual hosting

- Can also route to different services depending on:

  - URI path (e.g. `/api`→`api-service`, `/static`→`assets-service`)
  - Client headers, including cookies (for A/B testing, canary deployment...)
  - and more!

---

## Principle of operation

- Step 1: deploy an *ingress controller*

  - ingress controller = load balancer + control loop

  - the control loop watches over ingress resources, and configures the LB accordingly

- Step 2: set up DNS

  - associate DNS entries with the load balancer address

- Step 3: create *ingress resources*

  - the ingress controller picks up these resources and configures the LB

- Step 4: profit!
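
To make step 3 more concrete, here is a sketch of an Ingress resource. It assumes a Service named `webui` listening on port 80, a load balancer reachable at `1.2.3.4` (the host name relies on a wildcard DNS service mapping it to that address), and a cluster that serves the `networking.k8s.io/v1` API; older clusters use a different `apiVersion` and backend syntax.

```bash
# Write the sketch to a scratch file (hypothetical location).
ingress_manifest="$(mktemp -d)/webui-ingress.yaml"
cat > "$ingress_manifest" <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: webui
spec:
  rules:
  # Name-based virtual host: requests for this host name...
  - host: webui.1.2.3.4.nip.io
    http:
      paths:
      # ...and any path under / are sent to the webui Service, port 80.
      - path: /
        pathType: Prefix
        backend:
          service:
            name: webui
            port:
              number: 80
EOF
echo "Manifest written to $ingress_manifest"
```

After `kubectl apply -f "$ingress_manifest"`, the ingress controller is the component that notices the new resource and reconfigures its load balancer; nothing happens if no controller is running.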
---

## Ingress in action

- We will deploy the Traefik ingress controller

  - this is an arbitrary choice

  - maybe motivated by the fact that Traefik releases are named after cheeses

- For DNS, we will use [nip.io](http://nip.io/)

  - `*.1.2.3.4.nip.io` resolves to `1.2.3.4`

- We will create ingress resources for various HTTP services

---

## Deploying pods listening on port 80

- We want our ingress load balancer to be available on port 80

- We could do that with a `LoadBalancer` service

  ... but it requires support from the underlying infrastructure

- We could use pods specifying `hostPort: 80`

  ... but with most CNI plugins, this [doesn't work or requires additional setup](https://github.com/kubernetes/kubernetes/issues/23920)

- We could use a `NodePort` service

  ... but that requires [changing the `--service-node-port-range` flag in the API server](https://kubernetes.io/docs/reference/command-line-tools-reference/kube-apiserver/)

- Last resort: the `hostNetwork` mode

---

## Without `hostNetwork`

- Normally, each pod gets its own *network namespace*

  (sometimes called sandbox or network sandbox)

- An IP address is assigned to the pod

- This IP address is routed/connected to the cluster network

- All containers of that pod are sharing that network namespace

  (and therefore using the same IP address)

---

## With `hostNetwork: true`

- No network namespace gets created

- The pod is using the network namespace of the host

- It "sees" (and can use) the interfaces (and IP addresses) of the host

- The pod can receive outside traffic
directly, on any port - Downside: with most network plugins, network policies won't work for that pod - most network policies work at the IP address level - filtering that pod = filtering traffic from the node .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Running Traefik - The [Traefik documentation](https://docs.traefik.io/user-guide/kubernetes/#deploy-trfik-using-a-deployment-or-daemonset) tells us to pick between Deployment and Daemon Set - We are going to use a Daemon Set so that each node can accept connections - We will do two minor changes to the [YAML provided by Traefik](https://github.com/containous/traefik/blob/v1.7/examples/k8s/traefik-ds.yaml): - enable `hostNetwork` - add a *toleration* so that Traefik also runs on `node1` .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Taints and tolerations - A *taint* is an attribute added to a node - It prevents pods from running on the node - ... 
Unless they have a matching *toleration* - When deploying with `kubeadm`: - a taint is placed on the node dedicated to the control plane - the pods running the control plane have a matching toleration .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- class: extra-details ## Checking taints on our nodes .exercise[ - Check our nodes specs: ```bash kubectl get node node1 -o json | jq .spec kubectl get node node2 -o json | jq .spec ``` ] We should see a result only for `node1` (the one with the control plane): ```json "taints": [ { "effect": "NoSchedule", "key": "node-role.kubernetes.io/master" } ] ``` .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- class: extra-details ## Understanding a taint - The `key` can be interpreted as: - a reservation for a special set of pods <br/> (here, this means "this node is reserved for the control plane") - an error condition on the node <br/> (for instance: "disk full," do not start new pods here!) - The `effect` can be: - `NoSchedule` (don't run new pods here) - `PreferNoSchedule` (try not to run new pods here) - `NoExecute` (don't run new pods and evict running pods) .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- class: extra-details ## Checking tolerations on the control plane .exercise[ - Check tolerations for CoreDNS: ```bash kubectl -n kube-system get deployments coredns -o json | jq .spec.template.spec.tolerations ``` ] The result should include: ```json { "effect": "NoSchedule", "key": "node-role.kubernetes.io/master" } ``` It means: "bypass the exact taint that we saw earlier on `node1`." 
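To make the matching rule concrete, here is a small Python sketch of how a toleration can be compared with a taint. It follows the rules described in the Kubernetes documentation (default operator `Equal`, empty `effect` matches any effect, `Exists` with no key matches every taint), but the function name `tolerates` is an assumption for this example and this is not the scheduler's actual code.

```python
def tolerates(toleration, taint):
    """Return True if `toleration` matches `taint` (both plain dicts)."""
    operator = toleration.get("operator", "Equal")
    key = toleration.get("key", "")
    if key == "":
        # An empty key is only valid with Exists, and matches every key.
        if operator != "Exists":
            return False
    elif key != taint["key"]:
        return False
    # An empty effect in the toleration matches every effect.
    effect = toleration.get("effect", "")
    if effect and effect != taint["effect"]:
        return False
    if operator == "Exists":
        return True
    # Equal: values must be identical (a missing value counts as empty).
    return toleration.get("value", "") == taint.get("value", "")


master_taint = {"effect": "NoSchedule", "key": "node-role.kubernetes.io/master"}
coredns_toleration = {"effect": "NoSchedule", "key": "node-role.kubernetes.io/master"}

print(tolerates(coredns_toleration, master_taint))
```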
.debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- class: extra-details ## Special tolerations .exercise[ - Check tolerations on `kube-proxy`: ```bash kubectl -n kube-system get ds kube-proxy -o json | jq .spec.template.spec.tolerations ``` ] The result should include: ```json { "operator": "Exists" } ``` This one is a special case that means "ignore all taints and run anyway." .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Running Traefik on our cluster - We provide a YAML file (`k8s/traefik.yaml`) which is essentially the sum of: - [Traefik's Daemon Set resources](https://github.com/containous/traefik/blob/v1.7/examples/k8s/traefik-ds.yaml) (patched with `hostNetwork` and tolerations) - [Traefik's RBAC rules](https://github.com/containous/traefik/blob/v1.7/examples/k8s/traefik-rbac.yaml) allowing it to watch necessary API objects .exercise[ - Apply the YAML: ```bash kubectl apply -f ~/container.training/k8s/traefik.yaml ``` ] .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Checking that Traefik runs correctly - If Traefik started correctly, we now have a web server listening on each node .exercise[ - Check that Traefik is serving 80/tcp: ```bash curl localhost ``` ] We should get a `404 page not found` error. This is normal: we haven't provided any ingress rule yet. .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Setting up DNS - To make our lives easier, we will use [nip.io](http://nip.io) - Check out `http://cheddar.A.B.C.D.nip.io` (replacing A.B.C.D with the IP address of `node1`) - We should get the same `404 page not found` error (meaning that our DNS is "set up properly", so to speak!) 
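If the wildcard DNS trick feels like magic, this Python sketch mimics what happens for dotted names like the ones we use here. The function name `nip_io_address` is an assumption for this example, and the sketch deliberately ignores the other notations that the real service accepts.

```python
import ipaddress


def nip_io_address(hostname):
    """Extract the IPv4 address embedded in a `*.A.B.C.D.nip.io` name."""
    labels = hostname.rstrip(".").split(".")
    if len(labels) < 6 or labels[-2:] != ["nip", "io"]:
        raise ValueError(f"not a dotted nip.io name: {hostname}")
    # The four labels right before "nip.io" are the address.
    candidate = ".".join(labels[-6:-2])
    # IPv4Address raises a ValueError subclass if this is not a valid address.
    return str(ipaddress.IPv4Address(candidate))


print(nip_io_address("cheddar.10.0.0.7.nip.io"))
```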
.debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Traefik web UI - Traefik provides a web dashboard - With the current install method, it's listening on port 8080 .exercise[ - Go to `http://node1:8080` (replacing `node1` with its IP address) <!-- ```open http://node1:8080``` --> ] .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Setting up host-based routing ingress rules - We are going to use `errm/cheese` images (there are [3 tags available](https://hub.docker.com/r/errm/cheese/tags/): wensleydale, cheddar, stilton) - These images contain a simple static HTTP server sending a picture of cheese - We will run 3 deployments (one for each cheese) - We will create 3 services (one for each deployment) - Then we will create 3 ingress rules (one for each service) - We will route `<name-of-cheese>.A.B.C.D.nip.io` to the corresponding deployment .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Running cheesy web servers .exercise[ - Run all three deployments: ```bash kubectl create deployment cheddar --image=errm/cheese:cheddar kubectl create deployment stilton --image=errm/cheese:stilton kubectl create deployment wensleydale --image=errm/cheese:wensleydale ``` - Create a service for each of them: ```bash kubectl expose deployment cheddar --port=80 kubectl expose deployment stilton --port=80 kubectl expose deployment wensleydale --port=80 ``` ] .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## What does an ingress resource look like? 
Here is a minimal host-based ingress resource: ```yaml apiVersion: extensions/v1beta1 kind: Ingress metadata: name: cheddar spec: rules: - host: cheddar.`A.B.C.D`.nip.io http: paths: - path: / backend: serviceName: cheddar servicePort: 80 ``` (It is in `k8s/ingress.yaml`.) .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Creating our first ingress resources .exercise[ - Edit the file `~/container.training/k8s/ingress.yaml` - Replace A.B.C.D with the IP address of `node1` - Apply the file - Open http://cheddar.A.B.C.D.nip.io ] (An image of a piece of cheese should show up.) .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Creating the other ingress resources .exercise[ - Edit the file `~/container.training/k8s/ingress.yaml` - Replace `cheddar` with `stilton` (in `name`, `host`, `serviceName`) - Apply the file - Check that `stilton.A.B.C.D.nip.io` works correctly - Repeat for `wensleydale` ] .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Using multiple ingress controllers - You can have multiple ingress controllers active simultaneously (e.g. Traefik and NGINX) - You can even have multiple instances of the same controller (e.g. 
one for internal, another for external traffic) - The `kubernetes.io/ingress.class` annotation can be used to tell which one to use - It's OK if multiple ingress controllers configure the same resource (it just means that the service will be accessible through multiple paths) .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Ingress: the good - The traffic flows directly from the ingress load balancer to the backends - it doesn't need to go through the `ClusterIP` - in fact, we don't even need a `ClusterIP` (we can use a headless service) - The load balancer can be outside of Kubernetes (as long as it has access to the cluster subnet) - This allows the use of external (hardware, physical machines...) load balancers - Annotations can encode special features (rate-limiting, A/B testing, session stickiness, etc.) .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- ## Ingress: the bad - The aforementioned "special features" are not standardized yet - Some controllers will support them; some won't - Even relatively common features (stripping a path prefix) can differ: - [traefik.ingress.kubernetes.io/rule-type: PathPrefixStrip](https://docs.traefik.io/user-guide/kubernetes/#path-based-routing) - [ingress.kubernetes.io/rewrite-target: /](https://github.com/kubernetes/contrib/tree/master/ingress/controllers/nginx/examples/rewrite) - This should eventually stabilize (remember that ingresses are currently `apiVersion: extensions/v1beta1`) .debug[[k8s/ingress.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/ingress.md)] --- class: pic .interstitial[] --- name: toc-collecting-metrics-with-prometheus class: title Collecting metrics with Prometheus .nav[ [Previous section](#toc-exposing-http-services-with-ingress-resources) | [Back to table of contents](#toc-chapter-6) | [Next section](#toc-volumes) ]
.debug[(automatically generated title slide)] --- # Collecting metrics with Prometheus - Prometheus is an open-source monitoring system including: - multiple *service discovery* backends to figure out which metrics to collect - a *scraper* to collect these metrics - an efficient *time series database* to store these metrics - a specific query language (PromQL) to query these time series - an *alert manager* to notify us according to metrics values or trends - We are going to use it to collect and query some metrics on our Kubernetes cluster .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Why Prometheus? - We don't endorse Prometheus more or less than any other system - It's relatively well integrated within the cloud-native ecosystem - It can be self-hosted (this is useful for tutorials like this) - It can be used for deployments of varying complexity: - one binary and 10 lines of configuration to get started - all the way to thousands of nodes and millions of metrics .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Exposing metrics to Prometheus - Prometheus obtains metrics and their values by querying *exporters* - An exporter serves metrics over HTTP, in plain text - This is what the *node exporter* looks like: http://demo.robustperception.io:9100/metrics - Prometheus itself exposes its own internal metrics, too: http://demo.robustperception.io:9090/metrics - If you want to expose custom metrics to Prometheus: - serve a text page like these, and you're good to go - libraries are available in various languages to help with quantiles etc. 
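To give an idea of what "serving a text page" means, here is a minimal Python sketch that renders one metric in the Prometheus text exposition format. The function name `render_metric` and the metric `cheese_served_total` are made up for this example, and the sketch does not escape special characters in label values. In practice, a client library (such as the official `prometheus_client` package for Python) takes care of this.

```python
def render_metric(name, help_text, metric_type, samples):
    """Render one metric family as Prometheus text exposition lines.

    `samples` is a list of (labels dict, value) pairs.
    """
    lines = [
        f"# HELP {name} {help_text}",
        f"# TYPE {name} {metric_type}",
    ]
    for labels, value in samples:
        if labels:
            # Labels look like {key="value",other="value"}.
            label_str = ",".join(f'{k}="{v}"' for k, v in sorted(labels.items()))
            lines.append(f"{name}{{{label_str}}} {value}")
        else:
            lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


page = render_metric(
    "cheese_served_total",
    "Number of cheese pictures served.",
    "counter",
    [({"cheese": "cheddar"}, 42), ({"cheese": "stilton"}, 7)],
)
print(page, end="")
```

Serving the resulting string over HTTP (typically on a `/metrics` path) is enough for Prometheus to scrape it.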
.debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## How Prometheus gets these metrics - The *Prometheus server* will *scrape* URLs like these at regular intervals (by default: every minute; can be more/less frequent) - If you're worried about parsing overhead: exporters can also use protobuf - The list of URLs to scrape (the *scrape targets*) is defined in configuration .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Defining scrape targets This is maybe the simplest configuration file for Prometheus: ```yaml scrape_configs: - job_name: 'prometheus' static_configs: - targets: ['localhost:9090'] ``` - In this configuration, Prometheus collects its own internal metrics - A typical configuration file will have multiple `scrape_configs` - In this configuration, the list of targets is fixed - A typical configuration file will use dynamic service discovery .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Service discovery This configuration file will leverage existing DNS `A` records: ```yaml scrape_configs: - ... 
- job_name: 'node' dns_sd_configs: - names: ['api-backends.dc-paris-2.enix.io'] type: 'A' port: 9100 ``` - In this configuration, Prometheus resolves the provided name(s) (here, `api-backends.dc-paris-2.enix.io`) - Each resulting IP address is added as a target on port 9100 .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Dynamic service discovery - In the DNS example, the names are re-resolved at regular intervals - As DNS records are created/updated/removed, scrape targets change as well - Existing data (previously collected metrics) is not deleted - Other service discovery backends work in a similar fashion .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Other service discovery mechanisms - Prometheus can connect to e.g. a cloud API to list instances - Or to the Kubernetes API to list nodes, pods, services ... - Or a service like Consul, ZooKeeper, etcd, to list applications - The resulting configuration files are *way more complex* (but don't worry, we won't need to write them ourselves) .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Time series database - We could wonder, "why do we need a specialized database?" - One metrics data point = metrics ID + timestamp + value - With a classic SQL or NoSQL data store, that's at least 160 bits of data + indexes - Prometheus is way more efficient, without sacrificing performance (it will even be gentler on the I/O subsystem since it needs to write less) - Would you like to know more?
Check this video: [Storage in Prometheus 2.0](https://www.youtube.com/watch?v=C4YV-9CrawA) by [Goutham V](https://twitter.com/putadent) at DC17EU .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Checking if Prometheus is installed - Before trying to install Prometheus, let's check if it's already there .exercise[ - Look for services with a label `app=prometheus` across all namespaces: ```bash kubectl get services --selector=app=prometheus --all-namespaces ``` ] If we see a `NodePort` service called `prometheus-server`, we're good! (We can then skip to "Connecting to the Prometheus web UI".) .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Running Prometheus on our cluster We need to: - Run the Prometheus server in a pod (using e.g. a Deployment to ensure that it keeps running) - Expose the Prometheus server web UI (e.g. with a NodePort) - Run the *node exporter* on each node (with a Daemon Set) - Set up a Service Account so that Prometheus can query the Kubernetes API - Configure the Prometheus server (storing the configuration in a Config Map for easy updates) .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Helm charts to the rescue - To make our lives easier, we are going to use a Helm chart - The Helm chart will take care of all the steps explained above (including some extra features that we don't need, but won't hurt) .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Step 1: install Helm - If we already installed Helm earlier, these commands won't break anything .exercise[ - Install Tiller (Helm's server-side component) on our cluster: ```bash helm init ``` - Give Tiller permission to deploy things on our cluster: ```bash kubectl create clusterrolebinding
add-on-cluster-admin \ --clusterrole=cluster-admin --serviceaccount=kube-system:default ``` ] .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Step 2: install Prometheus - Skip this if we already installed Prometheus earlier (if in doubt, check with `helm list`) .exercise[ - Install Prometheus on our cluster: ```bash helm upgrade prometheus stable/prometheus \ --install \ --namespace kube-system \ --set server.service.type=NodePort \ --set server.service.nodePort=30090 \ --set server.persistentVolume.enabled=false \ --set alertmanager.enabled=false ``` ] Curious about all these flags? They're explained in the next slide. .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- class: extra-details ## Explaining all the Helm flags - `helm upgrade prometheus` → upgrade release "prometheus" to the latest version... (a "release" is a unique name given to an app deployed with Helm) - `stable/prometheus` → ...
of the chart `prometheus` in repo `stable` - `--install` → if the app doesn't exist, create it - `--namespace kube-system` → put it in that specific namespace - And set the following *values* when rendering the chart's templates: - `server.service.type=NodePort` → expose the Prometheus server with a NodePort - `server.service.nodePort=30090` → set the specific NodePort number to use - `server.persistentVolume.enabled=false` → do not use a PersistentVolumeClaim - `alertmanager.enabled=false` → disable the alert manager entirely .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Connecting to the Prometheus web UI - Let's connect to the web UI and see what we can do .exercise[ - Figure out the NodePort that was allocated to the Prometheus server: ```bash kubectl get svc --all-namespaces | grep prometheus-server ``` - With your browser, connect to that port ] .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Querying some metrics - This is easy... 
if you are familiar with PromQL .exercise[ - Click on "Graph", and in "expression", paste the following: ``` sum by (instance) ( irate( container_cpu_usage_seconds_total{ pod_name=~"worker.*" }[5m] ) ) ``` ] - Click on the blue "Execute" button and on the "Graph" tab just below - We see the cumulative CPU usage of worker pods for each node <br/> (if we just deployed Prometheus, there won't be much data to see, though) .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Getting started with PromQL - We can't learn PromQL in just 5 minutes - But we can cover the basics to get an idea of what is possible (and have some keywords and pointers) - We are going to break down the query above (building it one step at a time) .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Graphing one metric across all tags This query will show us CPU usage across all containers: ``` container_cpu_usage_seconds_total ``` - The suffix of the metric name tells us: - the unit (seconds of CPU) - that it's the total used since the container creation - Since it's a "total," it is an increasing quantity (we need to compute the derivative if we want e.g.
CPU % over time) - We see that the metrics retrieved have *tags* attached to them .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Selecting metrics with tags This query will show us only metrics for worker containers: ``` container_cpu_usage_seconds_total{pod_name=~"worker.*"} ``` - The `=~` operator allows regex matching - We select all the pods with a name starting with `worker` (it would be better to use labels to select pods; more on that later) - The result is a smaller set of containers .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Transforming counters into rates This query will show us CPU usage % instead of total seconds used: ``` 100*irate(container_cpu_usage_seconds_total{pod_name=~"worker.*"}[5m]) ``` - The [`irate`](https://prometheus.io/docs/prometheus/latest/querying/functions/#irate) function computes the "per-second instant rate of increase" - `rate` is similar, but averages over the whole range instead of using only the last two data points - both functions account for counter resets: if a counter goes back to zero, we don't get a negative spike - The `[5m]` tells how far to look back if there is a gap in the data - And we multiply by `100` to get CPU % usage .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Aggregation operators This query sums the CPU usage per node: ``` sum by (instance) ( irate(container_cpu_usage_seconds_total{pod_name=~"worker.*"}[5m]) ) ``` - `instance` corresponds to the node on which the container is running - `sum by (instance) (...)` computes the sum for each instance - Note: all the other tags are collapsed (in other words, the resulting graph only shows the `instance` tag) - PromQL supports many more [aggregation operators](https://prometheus.io/docs/prometheus/latest/querying/operators/#aggregation-operators)
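To see what the full query computes, here is a toy Python sketch of the same two steps: an instant rate taken from the last two samples of each counter, then a sum grouped by the `instance` tag. The function names and the sample data are made up for this example, and the counter reset handling is a simplification of what Prometheus does.

```python
from collections import defaultdict


def instant_rate(samples):
    """Per-second rate from the last two (timestamp, value) samples."""
    if len(samples) < 2:
        return None
    (t1, v1), (t2, v2) = samples[-2], samples[-1]
    # If the counter went down, assume it was reset to zero in between.
    increase = v2 - v1 if v2 >= v1 else v2
    return increase / (t2 - t1)


def sum_by_instance(series):
    """Sum the instant rates of all series sharing the same `instance` tag."""
    totals = defaultdict(float)
    for tags, samples in series:
        rate = instant_rate(samples)
        if rate is not None:
            totals[tags["instance"]] += rate
    return dict(totals)


series = [
    ({"instance": "node1", "pod_name": "worker-a"}, [(0, 10.0), (10, 15.0)]),
    ({"instance": "node1", "pod_name": "worker-b"}, [(0, 5.0), (10, 7.5)]),
    # This counter was reset (e.g. the container restarted).
    ({"instance": "node2", "pod_name": "worker-c"}, [(0, 100.0), (10, 5.0)]),
]
print(sum_by_instance(series))
```

Note how the `pod_name` tag disappears from the result: only `instance` is left, exactly like in the graph.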
.debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## What kind of metrics can we collect? - Node metrics (related to physical or virtual machines) - Container metrics (resource usage per container) - Databases, message queues, load balancers, ... (check out this [list of exporters](https://prometheus.io/docs/instrumenting/exporters/)!) - Instrumentation (=deluxe `printf` for our code) - Business metrics (customers served, revenue, ...) .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- class: extra-details ## Node metrics - CPU, RAM, disk usage on the whole node - Total number of processes running, and their states - Number of open files, sockets, and their states - I/O activity (disk, network), per operation or volume - Physical/hardware (when applicable): temperature, fan speed... - ...and much more! .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- class: extra-details ## Container metrics - Similar to node metrics, but not totally identical - RAM breakdown will be different - active vs inactive memory - some memory is *shared* between containers, and specially accounted for - I/O activity is also harder to track - async writes can cause deferred "charges" - some page-ins are also shared between containers For details about container metrics, see: <br/> http://jpetazzo.github.io/2013/10/08/docker-containers-metrics/ .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- class: extra-details ## Application metrics - Arbitrary metrics related to your application and business - System performance: request latency, error rate... - Volume information: number of rows in database, message queue size... - Business data: inventory, items sold, revenue... 
.debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- class: extra-details ## Detecting scrape targets - Prometheus can leverage Kubernetes service discovery (with proper configuration) - Services or pods can be annotated with: - `prometheus.io/scrape: true` to enable scraping - `prometheus.io/port: 9090` to indicate the port number - `prometheus.io/path: /metrics` to indicate the URI (`/metrics` by default) - Prometheus will detect and scrape these (without needing a restart or reload) .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Querying labels - What if we want to get metrics for containers belonging to a pod tagged `worker`? - The cAdvisor exporter does not give us Kubernetes labels - Kubernetes labels are exposed through another exporter - We can see Kubernetes labels through metrics `kube_pod_labels` (each container appears as a time series with constant value of `1`) - Prometheus *kind of* supports "joins" between time series - But only if the names of the tags match exactly .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## Unfortunately ... 
- The cAdvisor exporter uses tag `pod_name` for the name of a pod - The Kubernetes service endpoints exporter uses tag `pod` instead - See [this blog post](https://www.robustperception.io/exposing-the-software-version-to-prometheus) or [this other one](https://www.weave.works/blog/aggregating-pod-resource-cpu-memory-usage-arbitrary-labels-prometheus/) to see how to perform "joins" - Alas, Prometheus cannot "join" time series with different labels (see [Prometheus issue #2204](https://github.com/prometheus/prometheus/issues/2204) for the rationale) - There is a workaround involving relabeling, but it's "not cheap" - see [this comment](https://github.com/prometheus/prometheus/issues/2204#issuecomment-261515520) for an overview - or [this blog post](https://5pi.de/2017/11/09/use-prometheus-vector-matching-to-get-kubernetes-utilization-across-any-pod-label/) for a complete description of the process .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- ## In practice - Grafana is a beautiful (and useful) frontend to display all kinds of graphs - Not everyone needs to know Prometheus, PromQL, Grafana, etc. - But in a team, it is valuable to have at least one person who knows them - That person can set up queries and dashboards for the rest of the team - It's a little bit like knowing how to optimize SQL queries, Dockerfiles... Don't panic if you don't know these tools!
...But make sure at least one person in your team is on it 💯 .debug[[k8s/prometheus.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/prometheus.md)] --- class: pic .interstitial[] --- name: toc-volumes class: title Volumes .nav[ [Previous section](#toc-collecting-metrics-with-prometheus) | [Back to table of contents](#toc-chapter-7) | [Next section](#toc-managing-configuration) ] .debug[(automatically generated title slide)] --- # Volumes - Volumes are special directories that are mounted in containers - Volumes can have many different purposes: - share files and directories between containers running on the same machine - share files and directories between containers and their host - centralize configuration information in Kubernetes and expose it to containers - manage credentials and secrets and expose them securely to containers - store persistent data for stateful services - access storage systems (like Ceph, EBS, NFS, Portworx, and many others) .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- class: extra-details ## Kubernetes volumes vs. Docker volumes - Kubernetes and Docker volumes are very similar (the [Kubernetes documentation](https://kubernetes.io/docs/concepts/storage/volumes/) says otherwise ... <br/> but it refers to Docker 1.7, which was released in 2015!) - Docker volumes allow us to share data between containers running on the same host - Kubernetes volumes allow us to share data between containers in the same pod - Both Docker and Kubernetes volumes enable access to storage systems - Kubernetes volumes are also used to expose configuration and secrets - Docker has specific concepts for configuration and secrets <br/> (but under the hood, the technical implementation is similar) - If you're not familiar with Docker volumes, you can safely ignore this slide!
.debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- ## Volumes ≠ Persistent Volumes - Volumes and Persistent Volumes are related, but very different! - *Volumes*: - appear in Pod specifications (see next slide) - do not exist as API resources (**cannot** do `kubectl get volumes`) - *Persistent Volumes*: - are API resources (**can** do `kubectl get persistentvolumes`) - correspond to concrete volumes (e.g. on a SAN, EBS, etc.) - cannot be associated with a Pod directly; but through a Persistent Volume Claim - won't be discussed further in this section .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- ## A simple volume example ```yaml apiVersion: v1 kind: Pod metadata: name: nginx-with-volume spec: volumes: - name: www containers: - name: nginx image: nginx volumeMounts: - name: www mountPath: /usr/share/nginx/html/ ``` .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- ## A simple volume example, explained - We define a standalone `Pod` named `nginx-with-volume` - In that pod, there is a volume named `www` - No type is specified, so it will default to `emptyDir` (as the name implies, it will be initialized as an empty directory at pod creation) - In that pod, there is also a container named `nginx` - That container mounts the volume `www` to path `/usr/share/nginx/html/` .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- ## A volume shared between two containers .small[ ```yaml apiVersion: v1 kind: Pod metadata: name: nginx-with-volume spec: volumes: - name: www containers: - name: nginx image: nginx volumeMounts: - name: www mountPath: /usr/share/nginx/html/ - name: git image: alpine command: [ "sh", "-c", "apk add --no-cache git && git clone https://github.com/octocat/Spoon-Knife /www" ] volumeMounts: - 
name: www mountPath: /www/ restartPolicy: OnFailure ``` ] .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- ## Sharing a volume, explained - We added another container to the pod - That container mounts the `www` volume on a different path (`/www`) - It uses the `alpine` image - When started, it installs `git` and clones the `octocat/Spoon-Knife` repository (that repository contains a tiny HTML website) - As a result, NGINX now serves this website .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- ## Sharing a volume, in action - Let's try it! .exercise[ - Create the pod by applying the YAML file: ```bash kubectl apply -f ~/container.training/k8s/nginx-with-volume.yaml ``` - Check the IP address that was allocated to our pod: ```bash kubectl get pod nginx-with-volume -o wide IP=$(kubectl get pod nginx-with-volume -o json | jq -r .status.podIP) ``` - Access the web server: ```bash curl $IP ``` ] .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- ## The devil is in the details - The default `restartPolicy` is `Always` - This would cause our `git` container to run again ... and again ... 
and again (with an exponential back-off delay, as explained [in the documentation](https://kubernetes.io/docs/concepts/workloads/pods/pod-lifecycle/#restart-policy)) - That's why we specified `restartPolicy: OnFailure` - There is a short period of time during which the website is not available (because the `git` container hasn't done its job yet) - This could be avoided by using [Init Containers](https://kubernetes.io/docs/concepts/workloads/pods/init-containers/) (we will see a live example in a few sections) .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- ## Volume lifecycle - The lifecycle of a volume is linked to the pod's lifecycle - This means that a volume is created when the pod is created - This is mostly relevant for `emptyDir` volumes (other volumes, like remote storage, are not "created" but rather "attached") - A volume survives across container restarts - A volume is destroyed (or, for remote storage, detached) when the pod is destroyed .debug[[k8s/volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/volumes.md)] --- class: pic .interstitial[] --- name: toc-managing-configuration class: title Managing configuration .nav[ [Previous section](#toc-volumes) | [Back to table of contents](#toc-chapter-7) | [Next section](#toc-stateful-sets) ] .debug[(automatically generated title slide)] --- # Managing configuration - Some applications need to be configured (obviously!) - There are many ways for our code to pick up configuration: - command-line arguments - environment variables - configuration files - configuration servers (getting configuration from a database, an API...) - ... and more (because programmers can be very creative!) - How can we do these things with containers and Kubernetes?
.debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Passing configuration to containers - There are many ways to pass configuration to code running in a container: - baking it into a custom image - command-line arguments - environment variables - injecting configuration files - exposing it over the Kubernetes API - configuration servers - Let's review these different strategies! .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Baking custom images - Put the configuration in the image (it can be in a configuration file, but also `ENV` or `CMD` actions) - It's easy! It's simple! - Unfortunately, it also has downsides: - multiplication of images - different images for dev, staging, prod ... - minor reconfigurations require a whole build/push/pull cycle - Avoid doing it unless you don't have the time to figure out other options .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Command-line arguments - Pass options to `args` array in the container specification - Example ([source](https://github.com/coreos/pods/blob/master/kubernetes.yaml#L29)): ```yaml args: - "--data-dir=/var/lib/etcd" - "--advertise-client-urls=http://127.0.0.1:2379" - "--listen-client-urls=http://127.0.0.1:2379" - "--listen-peer-urls=http://127.0.0.1:2380" - "--name=etcd" ``` - The options can be passed directly to the program that we run ... ... or to a wrapper script that will use them to e.g. 
generate a config file .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Command-line arguments, pros & cons - Works great when options are passed directly to the running program (otherwise, a wrapper script can work around the issue) - Works great when there aren't too many parameters (to avoid a 20-lines `args` array) - Requires documentation and/or understanding of the underlying program ("which parameters and flags do I need, again?") - Well-suited for mandatory parameters (without default values) - Not ideal when we need to pass a real configuration file anyway .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Environment variables - Pass options through the `env` map in the container specification - Example: ```yaml env: - name: ADMIN_PORT value: "8080" - name: ADMIN_AUTH value: Basic - name: ADMIN_CRED value: "admin:0pensesame!" ``` .warning[`value` must be a string! Make sure that numbers and fancy strings are quoted.] 🤔 Why this weird `{name: xxx, value: yyy}` scheme? It will be revealed soon! .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## The downward API - In the previous example, environment variables have fixed values - We can also use a mechanism called the *downward API* - The downward API allows exposing pod or container information - either through special files (we won't show that for now) - or through environment variables - The value of these environment variables is computed when the container is started - Remember: environment variables won't (can't) change after container start - Let's see a few concrete examples! 
.debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Exposing the pod's namespace ```yaml - name: MY_POD_NAMESPACE valueFrom: fieldRef: fieldPath: metadata.namespace ``` - Useful to generate FQDN of services (in some contexts, a short name is not enough) - For instance, the two commands should be equivalent: ``` curl api-backend curl api-backend.$MY_POD_NAMESPACE.svc.cluster.local ``` .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Exposing the pod's IP address ```yaml - name: MY_POD_IP valueFrom: fieldRef: fieldPath: status.podIP ``` - Useful if we need to know our IP address (we could also read it from `eth0`, but this is more solid) .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Exposing the container's resource limits ```yaml - name: MY_MEM_LIMIT valueFrom: resourceFieldRef: containerName: test-container resource: limits.memory ``` - Useful for runtimes where memory is garbage collected - Example: the JVM (the memory available to the JVM should be set with the `-Xmx ` flag) - Best practice: set a memory limit, and pass it to the runtime (see [this blog post](https://very-serio.us/2017/12/05/running-jvms-in-kubernetes/) for a detailed example) .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## More about the downward API - [This documentation page](https://kubernetes.io/docs/tasks/inject-data-application/environment-variable-expose-pod-information/) tells more about these environment variables - And [this one](https://kubernetes.io/docs/tasks/inject-data-application/downward-api-volume-expose-pod-information/) explains the other way to use the downward API (through files that get created in the container filesystem) 
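As an illustration of the memory limit example, here is a minimal sketch of how a start-up script might turn `MY_MEM_LIMIT` into a `-Xmx` flag. With the default divisor, the downward API exposes `limits.memory` in bytes. The 512 MiB fallback, the 75% ratio, and the `HEAP_MB` and `JAVA_OPTS` names are assumptions chosen for this sketch, not recommendations.

```bash
# MY_MEM_LIMIT is expected to be injected by the downward API (in bytes).
# The fallback below (512 MiB) only exists so the sketch runs anywhere.
MY_MEM_LIMIT="${MY_MEM_LIMIT:-536870912}"

# Keep some headroom for non-heap memory: use 75% of the limit (assumption).
HEAP_MB=$(( MY_MEM_LIMIT / 1024 / 1024 * 75 / 100 ))

JAVA_OPTS="-Xmx${HEAP_MB}m"
echo "$JAVA_OPTS"
```

The resulting `JAVA_OPTS` value could then be passed on the `java` command line by the wrapper script.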
.debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Environment variables, pros and cons - Works great when the running program expects these variables - Works great for optional parameters with reasonable defaults (since the container image can provide these defaults) - Sort of auto-documented (we can see which environment variables are defined in the image, and their values) - Can be (ab)used with longer values ... - ... You *can* put an entire Tomcat configuration file in an environment ... - ... But *should* you? (Do it if you really need to, we're not judging! But we'll see better ways.) .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Injecting configuration files - Sometimes, there is no way around it: we need to inject a full config file - Kubernetes provides a mechanism for that purpose: `configmaps` - A configmap is a Kubernetes resource that exists in a namespace - Conceptually, it's a key/value map (values are arbitrary strings) - We can think about them in (at least) two different ways: - as holding entire configuration file(s) - as holding individual configuration parameters *Note: to hold sensitive information, we can use "Secrets", which are another type of resource behaving very much like configmaps. 
We'll cover them just after!* .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Configmaps storing entire files - In this case, each key/value pair corresponds to a configuration file - Key = name of the file - Value = content of the file - There can be one key/value pair, or as many as necessary (for complex apps with multiple configuration files) - Examples: ``` # Create a configmap with a single key, "app.conf" kubectl create configmap my-app-config --from-file=app.conf # Create a configmap with a single key, "app.conf" but another file kubectl create configmap my-app-config --from-file=app.conf=app-prod.conf # Create a configmap with multiple keys (one per file in the config.d directory) kubectl create configmap my-app-config --from-file=config.d/ ``` .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Configmaps storing individual parameters - In this case, each key/value pair corresponds to a parameter - Key = name of the parameter - Value = value of the parameter - Examples: ``` # Create a configmap with two keys kubectl create cm my-app-config \ --from-literal=foreground=red \ --from-literal=background=blue # Create a configmap from a file containing key=val pairs kubectl create cm my-app-config \ --from-env-file=app.conf ``` .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Exposing configmaps to containers - Configmaps can be exposed as plain files in the filesystem of a container - this is achieved by declaring a volume and mounting it in the container - this is particularly effective for configmaps containing whole files - Configmaps can be exposed as environment variables in the container - this is achieved with the downward API - this is particularly effective for configmaps containing individual parameters - 
Let's see how to do both! .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Passing a configuration file with a configmap - We will start a load balancer powered by HAProxy - We will use the [official `haproxy` image](https://hub.docker.com/_/haproxy/) - It expects to find its configuration in `/usr/local/etc/haproxy/haproxy.cfg` - We will provide a simple HAproxy configuration, `k8s/haproxy.cfg` - It listens on port 80, and load balances connections between IBM and Google .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Creating the configmap .exercise[ - Go to the `k8s` directory in the repository: ```bash cd ~/container.training/k8s ``` - Create a configmap named `haproxy` and holding the configuration file: ```bash kubectl create configmap haproxy --from-file=haproxy.cfg ``` - Check what our configmap looks like: ```bash kubectl get configmap haproxy -o yaml ``` ] .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Using the configmap We are going to use the following pod definition: ```yaml apiVersion: v1 kind: Pod metadata: name: haproxy spec: volumes: - name: config configMap: name: haproxy containers: - name: haproxy image: haproxy volumeMounts: - name: config mountPath: /usr/local/etc/haproxy/ ``` .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Using the configmap - The resource definition from the previous slide is in `k8s/haproxy.yaml` .exercise[ - Create the HAProxy pod: ```bash kubectl apply -f ~/container.training/k8s/haproxy.yaml ``` <!-- ```hide kubectl wait pod haproxy --for condition=ready``` --> - Check the IP address allocated to the pod: ```bash kubectl get pod haproxy -o wide IP=$(kubectl get pod haproxy -o json | jq -r 
.status.podIP) ``` ] .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Testing our load balancer - The load balancer will send: - half of the connections to Google - the other half to IBM .exercise[ - Access the load balancer a few times: ```bash curl $IP curl $IP curl $IP ``` ] We should see connections served by Google, and others served by IBM. <br/> (Each server sends us a redirect page. Look at the URL that they send us to!) .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Exposing configmaps with the downward API - We are going to run a Docker registry on a custom port - By default, the registry listens on port 5000 - This can be changed by setting environment variable `REGISTRY_HTTP_ADDR` - We are going to store the port number in a configmap - Then we will expose that configmap as a container environment variable .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Creating the configmap .exercise[ - Our configmap will have a single key, `http.addr`: ```bash kubectl create configmap registry --from-literal=http.addr=0.0.0.0:80 ``` - Check our configmap: ```bash kubectl get configmap registry -o yaml ``` ] .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Using the configmap We are going to use the following pod definition: ```yaml apiVersion: v1 kind: Pod metadata: name: registry spec: containers: - name: registry image: registry env: - name: REGISTRY_HTTP_ADDR valueFrom: configMapKeyRef: name: registry key: http.addr ``` .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Using the configmap - The resource definition from the previous slide is in 
`k8s/registry.yaml` .exercise[ - Create the registry pod: ```bash kubectl apply -f ~/container.training/k8s/registry.yaml ``` <!-- ```hide kubectl wait pod registry --for condition=ready``` --> - Check the IP address allocated to the pod: ```bash kubectl get pod registry -o wide IP=$(kubectl get pod registry -o json | jq -r .status.podIP) ``` - Confirm that the registry is available on port 80: ```bash curl $IP/v2/_catalog ``` ] .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Passwords, tokens, sensitive information - For sensitive information, there is another special resource: *Secrets* - Secrets and Configmaps work almost the same way (we'll expose the differences on the next slide) - The *intent* is different, though: *"You should use secrets for things which are actually secret like API keys, credentials, etc., and use config map for not-secret configuration data."* *"In the future there will likely be some differentiators for secrets like rotation or support for backing the secret API w/ HSMs, etc."* (Source: [the author of both features](https://stackoverflow.com/a/36925553/580281 )) .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- ## Differences between configmaps and secrets - Secrets are base64-encoded when shown with `kubectl get secrets -o yaml` - keep in mind that this is just *encoding*, not *encryption* - it is very easy to [automatically extract and decode secrets](https://medium.com/@mveritym/decoding-kubernetes-secrets-60deed7a96a3) - [Secrets can be encrypted at rest](https://kubernetes.io/docs/tasks/administer-cluster/encrypt-data/) - With RBAC, we can authorize a user to access configmaps, but not secrets (since they are two different kinds of resources) .debug[[k8s/configuration.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/configuration.md)] --- 
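## Encoding is not encryption

- The base64 point from the previous slide can be checked locally, without a cluster

- Here is a minimal sketch; it reuses the `admin:0pensesame!` value from the environment variable example, and the `ENCODED` and `DECODED` names are ours

```bash
# Encode a value the way it would appear in the YAML output of a secret.
ENCODED=$(printf '%s' 'admin:0pensesame!' | base64)
echo "$ENCODED"

# Anyone who can read the encoded value can get the original back.
DECODED=$(printf '%s' "$ENCODED" | base64 --decode)
echo "$DECODED"
```

- No key is involved at any point: this is why access to secrets should be restricted with RBAC

---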
class: pic

.interstitial[]

---

name: toc-stateful-sets
class: title

Stateful sets

.nav[
[Previous section](#toc-managing-configuration)
|
[Back to table of contents](#toc-chapter-8)
|
[Next section](#toc-running-a-consul-cluster)
]

.debug[(automatically generated title slide)]

---

# Stateful sets

- Stateful sets are a type of resource in the Kubernetes API

  (like pods, deployments, services...)

- They offer mechanisms to deploy scaled stateful applications

- At first glance, they look like *deployments*:

  - a stateful set defines a pod spec and a number of replicas *R*

  - it will make sure that *R* copies of the pod are running

  - that number can be changed while the stateful set is running

  - updating the pod spec will cause a rolling update to happen

- But they also have some significant differences

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Stateful sets unique features

- Pods in a stateful set are numbered (from 0 to *R-1*) and ordered

- They are started in order (from 0 to *R-1*)

- A pod is started (or updated) only when the previous one is ready

- They are stopped, and by default updated, in reverse order (from *R-1* to 0)

- Each pod knows its identity (i.e. which number it is in the set)

- Each pod can discover the IP address of the others easily

- The pods can persist data on attached volumes

🤔 Wait a minute ... Can't we already attach volumes to pods and deployments?
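A side note on pod identity: the pods of a stateful set are named after the set, followed by their number (e.g. `consul-0`), and each pod's hostname matches its name. Here is a minimal sketch of how a start-up script could derive that number. The `consul-2` value is a stand-in so that the sketch runs outside of a cluster, and the primary/secondary rule is only an illustration.

```bash
# In a real pod, POD_NAME would be the pod's hostname (e.g. "$(hostname)").
# "consul-2" is a hypothetical stand-in value.
POD_NAME="${POD_NAME:-consul-2}"

# Strip everything up to the last dash to keep only the number.
ORDINAL="${POD_NAME##*-}"

# Illustrative rule: pod number 0 acts as primary, the others as secondary.
if [ "$ORDINAL" -eq 0 ]; then
  ROLE=primary
else
  ROLE=secondary
fi
echo "pod $POD_NAME has number $ORDINAL and acts as $ROLE"
```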
.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Revisiting volumes

- [Volumes](https://kubernetes.io/docs/concepts/storage/volumes/) are used for many purposes:

  - sharing data between containers in a pod

  - exposing configuration information and secrets to containers

  - accessing storage systems

- Let's see examples of that last use case

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Volume types

- There are many [types of volumes](https://kubernetes.io/docs/concepts/storage/volumes/#types-of-volumes) available:

  - public cloud storage (GCEPersistentDisk, AWSElasticBlockStore, AzureDisk...)

  - private cloud storage (Cinder, VsphereVolume...)

  - traditional storage systems (NFS, iSCSI, FC...)

  - distributed storage (Ceph, Glusterfs, Portworx...)

- Using a persistent volume requires:

  - creating the volume out-of-band (outside of the Kubernetes API)

  - referencing the volume in the pod description, with all its parameters

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Using a cloud volume

Here is a pod definition using an AWS EBS volume (that has to be created first):

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: pod-using-my-ebs-volume
spec:
  containers:
  - image: ...
    name: container-using-my-ebs-volume
    volumeMounts:
    - mountPath: /my-ebs
      name: my-ebs-volume
  volumes:
  - name: my-ebs-volume
    awsElasticBlockStore:
      volumeID: vol-049df61146c4d7901
      fsType: ext4
```

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Using an NFS volume

Here is another example using a volume on an NFS server:

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: pod-using-my-nfs-volume
spec:
  containers:
  - image: ...
    name: container-using-my-nfs-volume
    volumeMounts:
    - mountPath: /my-nfs
      name: my-nfs-volume
  volumes:
  - name: my-nfs-volume
    nfs:
      server: 192.168.0.55
      path: "/exports/assets"
```

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Shortcomings of volumes

- Their lifecycle (creation, deletion...) is managed outside of the Kubernetes API

  (we can't just use `kubectl apply/create/delete/...` to manage them)

- If a Deployment uses a volume, all replicas end up using the same volume

- That volume must then support concurrent access

  - some volumes do (e.g. NFS servers support multiple read/write access)

  - some volumes support concurrent reads

  - some volumes support concurrent access for colocated pods

- What we really need is a way for each replica to have its own volume

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Persistent Volume Claims

- To abstract the different types of storage, a pod can use a special volume type

- This type is a *Persistent Volume Claim*

- A Persistent Volume Claim (PVC) is a resource type

  (visible with `kubectl get persistentvolumeclaims` or `kubectl get pvc`)

- A PVC is not a volume; it is a *request for a volume*

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Persistent Volume Claims in practice

- Using a Persistent Volume Claim is a two-step process:

  - creating the claim

  - using the claim in a pod (as if it were any other kind of volume)

- A PVC starts by being Unbound (without an associated volume)

- Once it is associated with a Persistent Volume, it becomes Bound

- A Pod referring to an unbound PVC will not start

  (but as soon as the PVC is bound, the Pod can start)

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Binding PV and PVC

- A Kubernetes controller continuously watches PV and PVC objects

- When it notices an unbound PVC, it tries to find a satisfactory PV

  ("satisfactory" in terms of size and other characteristics; see next slide)

- If no PV fits the PVC, a PV can be created dynamically

  (this requires configuring a *dynamic provisioner*, more on that later)

- Otherwise, the PVC remains unbound indefinitely

  (until we manually create a PV or set up dynamic provisioning)

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## What's in a Persistent Volume Claim?

- At the very least, the claim should indicate:

  - the size of the volume (e.g. "5 GiB")

  - the access mode (e.g. "read-write by a single pod")

- Optionally, it can also specify a Storage Class

- The Storage Class indicates:

  - which storage system to use (e.g. Portworx, EBS...)

  - extra parameters for that storage system

    e.g.: "replicate the data 3 times, and use SSD media"

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## What's a Storage Class?

- A Storage Class is yet another Kubernetes API resource

  (visible with e.g. `kubectl get storageclass` or `kubectl get sc`)

- It indicates which *provisioner* to use

  (which controller will create the actual volume)

- And arbitrary parameters for that provisioner

  (replication levels, type of disk ... anything relevant!)

- Storage Classes are required if we want to use [dynamic provisioning](https://kubernetes.io/docs/concepts/storage/dynamic-provisioning/) (but we can also create volumes manually, and ignore Storage Classes) .debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)] --- ## Defining a Persistent Volume Claim Here is a minimal PVC: ```yaml kind: PersistentVolumeClaim apiVersion: v1 metadata: name: my-claim spec: accessModes: - ReadWriteOnce resources: requests: storage: 1Gi ``` .debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)] --- ## Using a Persistent Volume Claim Here is a Pod definition like the ones shown earlier, but using a PVC: ```yaml apiVersion: v1 kind: Pod metadata: name: pod-using-a-claim spec: containers: - image: ... name: container-using-a-claim volumeMounts: - mountPath: /my-vol name: my-volume volumes: - name: my-volume persistentVolumeClaim: claimName: my-claim ``` .debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)] --- ## Persistent Volume Claims and Stateful sets - The pods in a stateful set can define a `volumeClaimTemplate` - A `volumeClaimTemplate` will dynamically create one Persistent Volume Claim per pod - Each pod will therefore have its own volume - These volumes are numbered (like the pods) - When updating the stateful set (e.g. image upgrade), each pod keeps its volume - When pods get rescheduled (e.g. 
node failure), they keep their volume

  (this requires a storage system that is not node-local)

- These volumes are not automatically deleted

  (when the stateful set is scaled down or deleted)

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Stateful set recap

- A Stateful set manages a number of identical pods

  (like a Deployment)

- These pods are numbered, and started/upgraded/stopped in a specific order

- These pods are aware of their number

  (e.g., #0 can decide to be the primary, and #1 can be secondary)

- These pods can find the IP addresses of the other pods in the set

  (through a *headless service*)

- These pods can each have their own persistent storage

  (Deployments cannot do that)

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

class: pic

.interstitial[]

---

name: toc-running-a-consul-cluster
class: title

Running a Consul cluster

.nav[
[Previous section](#toc-stateful-sets)
|
[Back to table of contents](#toc-chapter-8)
|
[Next section](#toc-local-persistent-volumes)
]

.debug[(automatically generated title slide)]

---

# Running a Consul cluster

- Here is a good use-case for Stateful sets!

- We are going to deploy a Consul cluster with 3 nodes

- Consul is a highly-available key/value store

  (like etcd or Zookeeper)

- One easy way to bootstrap a cluster is to tell each node:

  - the addresses of other nodes

  - how many nodes are expected (to know when quorum is reached)

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Bootstrapping a Consul cluster

*After reading the Consul documentation carefully (and/or asking around),
we figure out the minimal command-line to run our Consul cluster.*

```
consul agent -data-dir=/consul/data -client=0.0.0.0 -server -ui \
       -bootstrap-expect=3 \
       -retry-join=`X.X.X.X` \
       -retry-join=`Y.Y.Y.Y`
```

- Replace X.X.X.X and Y.Y.Y.Y with the addresses of other nodes

- The same command-line can be used on all nodes (convenient!)

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Cloud Auto-join

- Since version 1.4.0, Consul can use the Kubernetes API to find its peers

- This is called [Cloud Auto-join]

- Instead of passing an IP address, we need to pass a parameter like this:

  ```
  consul agent -retry-join "provider=k8s label_selector=\"app=consul\""
  ```

- Consul needs to be able to talk to the Kubernetes API

- We can provide a `kubeconfig` file

- If Consul runs in a pod, it will use the *service account* of the pod

[Cloud Auto-join]: https://www.consul.io/docs/agent/cloud-auto-join.html#kubernetes-k8s-

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Setting up Cloud auto-join

- We need to create a service account for Consul

- We need to create a role that can `list` and `get` pods

- We need to bind that role to the service account

- And of course, we need to make sure that Consul pods use that service account

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Putting it all together

- The file `k8s/consul.yaml` defines the required resources

  (service account, cluster role, cluster role binding, service, stateful set)

- It has a few extra touches:

  - a `podAntiAffinity` prevents two pods from running on the same node

  - a `preStop` hook makes the pod leave the cluster when it is shut down gracefully

This was inspired by this [excellent tutorial](https://github.com/kelseyhightower/consul-on-kubernetes) by Kelsey Hightower.
Some features from the original tutorial (TLS authentication between
nodes and encryption of gossip traffic) were removed for simplicity.

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Running our Consul cluster

- We'll use the provided YAML file

.exercise[

- Create the stateful set and associated service:
  ```bash
  kubectl apply -f ~/container.training/k8s/consul.yaml
  ```

- Check the logs as the pods come up one after another:
  ```bash
  stern consul
  ```

<!--
```wait Synced node info```
```keys ^C```
-->

- Check the health of the cluster:
  ```bash
  kubectl exec consul-0 consul members
  ```

]

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Caveats

- We haven't used a `volumeClaimTemplate` here

- That's because we don't have a storage provider yet

  (except if you're running this on your own and your cluster has one)

- What happens if we lose a pod?

  - a new pod gets rescheduled (with an empty state)

  - the new pod tries to connect to the two others

  - it will be accepted (after 1-2 minutes of instability)

  - and it will retrieve the data from the other pods

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

## Failure modes

- What happens if we lose two pods?

  - manual repair will be required

  - we will need to instruct the remaining one to act solo

  - then rejoin new pods

- What happens if we lose three pods? (aka all of them)

  - we lose all the data (ouch)

- If we run Consul without persistent storage, backups are a good idea!

.debug[[k8s/statefulsets.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/statefulsets.md)]

---

class: pic

.interstitial[]

---

name: toc-local-persistent-volumes
class: title

Local Persistent Volumes

.nav[
[Previous section](#toc-running-a-consul-cluster)
|
[Back to table of contents](#toc-chapter-8)
|
[Next section](#toc-static-pods)
]

.debug[(automatically generated title slide)]

---

# Local Persistent Volumes

- We want to run that Consul cluster *and* actually persist data

- But we don't have a distributed storage system

- We are going to use local volumes instead

  (similar conceptually to `hostPath` volumes)

- We can use local volumes without installing extra plugins

- However, they are tied to a node

- If that node goes down, the volume becomes unavailable

.debug[[k8s/local-persistent-volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/local-persistent-volumes.md)]

---

## With or without dynamic provisioning

- We will deploy a Consul cluster *with* persistence

- That cluster's StatefulSet will create PVCs

- These PVCs will remain unbound¹ until we create local volumes manually

  (we will basically do the job of the dynamic provisioner)

- Then, we will see how to automate that with a dynamic provisioner

.footnote[¹Unbound = without an associated Persistent Volume.]

.debug[[k8s/local-persistent-volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/local-persistent-volumes.md)]

---

## If we have a dynamic provisioner ...

- The labs in this section assume that we *do not* have a dynamic provisioner - If we do have one, we need to disable it .exercise[ - Check if we have a dynamic provisioner: ```bash kubectl get storageclass ``` - If the output contains a line with `(default)`, run this command: ```bash kubectl annotate sc storageclass.kubernetes.io/is-default-class- --all ``` - Check again that it is no longer marked as `(default)` ] .debug[[k8s/local-persistent-volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/local-persistent-volumes.md)] --- ## Work in a separate namespace - To avoid conflicts with existing resources, let's create and use a new namespace .exercise[ - Create a new namespace: ```bash kubectl create namespace orange ``` - Switch to that namespace: ```bash kns orange ``` ] .warning[Make sure to call that namespace `orange`: it is hardcoded in the YAML files.] .debug[[k8s/local-persistent-volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/local-persistent-volumes.md)] --- ## Deploying Consul - We will use a slightly different YAML file - The only differences between that file and the previous one are: - `volumeClaimTemplate` defined in the Stateful Set spec - the corresponding `volumeMounts` in the Pod spec - the namespace `orange` used for discovery of Pods .exercise[ - Apply the persistent Consul YAML file: ```bash kubectl apply -f ~/container.training/k8s/persistent-consul.yaml ``` ] .debug[[k8s/local-persistent-volumes.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/local-persistent-volumes.md)] --- ## Observing the situation - Let's look at Persistent Volume Claims and Pods .exercise[ - Check that we now have an unbound Persistent Volume Claim: ```bash kubectl get pvc ``` - We don't have any Persistent Volume: ```bash kubectl get pv ``` - The Pod `consul-0` is not scheduled yet: ```bash kubectl get pods -o wide ``` ] *Hint: leave these commands running with 
`-w` in different windows.*

---

## Explanations

- In a Stateful Set, the Pods are started one by one

- `consul-1` won't be created until `consul-0` is running

- `consul-0` has a dependency on an unbound Persistent Volume Claim

- The scheduler won't schedule the Pod until the PVC is bound

  (because the PVC might be bound to a volume that is only available on a subset
  of nodes; for instance, EBS volumes are tied to an availability zone)

---

## Creating Persistent Volumes

- Let's create 3 local directories (`/mnt/consul`) on node2, node3, node4

- Then create 3 Persistent Volumes corresponding to these directories

.exercise[

- Create the local directories:
  ```bash
  for NODE in node2 node3 node4; do
    ssh $NODE sudo mkdir -p /mnt/consul
  done
  ```

- Create the PV objects:
  ```bash
  kubectl apply -f ~/container.training/k8s/volumes-for-consul.yaml
  ```

]

---

## Check our Consul cluster

- The PVs that we created will be automatically matched with the PVCs

- Once a PVC is bound, its pod can start normally

- Once the pod `consul-0` has started, `consul-1` can be created, etc.
- Eventually, our Consul cluster is up, and backed by "persistent" volumes

.exercise[

- Check that our Consul cluster has 3 members indeed:
  ```bash
  kubectl exec consul-0 -- consul members
  ```

]

---

## Devil is in the details (1/2)

- The size of the Persistent Volumes is bogus

  (it is used when matching PVs and PVCs together, but there is no actual quota or limit)

---

## Devil is in the details (2/2)

- This specific example worked because we had exactly 1 free PV per node:

  - if we had created multiple PVs per node ...

  - we could have ended up with two PVCs bound to PVs on the same node ...

  - which would have required two pods to be on the same node ...

  - which is forbidden by the anti-affinity constraints in the StatefulSet

- To avoid that, we need to associate the PVs with a Storage Class that has:
  ```yaml
  volumeBindingMode: WaitForFirstConsumer
  ```
  (this means that a PVC will be bound to a PV only after being used by a Pod)

- See [this blog post](https://kubernetes.io/blog/2018/04/13/local-persistent-volumes-beta/) for more details

---

## Bulk provisioning

- It's not practical to manually create directories and PVs for each app

- We *could* pre-provision a number of PVs across our fleet

- We could even automate that with a Daemon Set:

  - creating a number of directories on each node

  - creating the corresponding PV objects

- We also need to recycle volumes

- ...
This can quickly get out of hand

---

## Dynamic provisioning

- We could also write our own provisioner, which would:

  - watch the PVCs across all namespaces

  - when a PVC is created, create a corresponding PV on a node

- Or we could use one of the dynamic provisioners for local persistent volumes

  (for instance the [Rancher local path provisioner](https://github.com/rancher/local-path-provisioner))

---

## Strategies for local persistent volumes

- Remember, when a node goes down, the volumes on that node become unavailable

- High availability will require another layer of replication

  (like what we've just seen with Consul; or primary/secondary; etc)

- Pre-provisioning PVs makes sense for machines with local storage

  (e.g.
cloud instance storage; or storage directly attached to a physical machine)

- Dynamic provisioning makes sense for a large number of applications

  (when we can't or won't dedicate a whole disk to a volume)

- It's possible to mix both (using distinct Storage Classes)

---

name: toc-static-pods
class: title

Static pods

.nav[
[Previous section](#toc-local-persistent-volumes)
|
[Back to table of contents](#toc-chapter-8)
|
[Next section](#toc-next-steps)
]

.debug[(automatically generated title slide)]

---

# Static pods

- Hosting the Kubernetes control plane on Kubernetes has advantages:

  - we can use Kubernetes' replication and scaling features for the control plane

  - we can leverage rolling updates to upgrade the control plane

- However, there is a catch:

  - deploying on Kubernetes requires the API to be available

  - the API won't be available until the control plane is deployed

- How can we get out of that chicken-and-egg problem?

.debug[[k8s/staticpods.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/staticpods.md)]

---

## A possible approach

- Since each component of the control plane can be replicated...

- We could set up the control plane outside of the cluster

- Then, once the cluster is fully operational, create replicas running on the cluster

- Finally, remove the replicas that are running outside of the cluster

*What could possibly go wrong?*

---

## Sawing off the branch you're sitting on

- What if anything goes wrong?
(During the setup or at a later point)

- Worst case scenario, we might need to:

  - set up a new control plane (outside of the cluster)

  - restore a backup from the old control plane

  - move the new control plane to the cluster (again)

- This doesn't sound like a great experience

---

## Static pods to the rescue

- Pods are started by kubelet (an agent running on every node)

- To know which pods it should run, the kubelet queries the API server

- The kubelet can also get a list of *static pods* from:

  - a directory containing one (or multiple) *manifests*, and/or

  - a URL (serving a *manifest*)

- These "manifests" are basically YAML definitions

  (As produced by `kubectl get pod my-little-pod -o yaml`)

---

## Static pods are dynamic

- Kubelet will periodically reload the manifests

- It will start/stop pods accordingly

  (i.e. it is not necessary to restart the kubelet after updating the manifests)

- When connected to the Kubernetes API, the kubelet will create *mirror pods*

- Mirror pods are copies of the static pods

  (so they can be seen with e.g.
`kubectl get pods`)

---

## Bootstrapping a cluster with static pods

- We can run control plane components with these static pods

- They can start without requiring access to the API server

- Once they are up and running, the API becomes available

- These pods are then visible through the API

  (We cannot upgrade them from the API, though)

*This is how kubeadm has initialized our clusters.*

---

## Static pods vs normal pods

- The API only gives us read-only access to static pods

- We can `kubectl delete` a static pod...

  ...But the kubelet will re-mirror it immediately

- Static pods can be selected just like other pods

  (So they can receive service traffic)

- A service can select a mixture of static and other pods

---

## From static pods to normal pods

- Once the control plane is up and running, it can be used to create normal pods

- We can then set up a copy of the control plane in normal pods

- Then the static pods can be removed

- The scheduler and the controller manager use leader election

  (Only one is active at a time; removing an instance is seamless)

- Each instance of the API server adds itself to the `kubernetes` service

- Etcd will typically require more work!

---

## From normal pods back to static pods

- Alright, but what if the control plane is down and we need to fix it?

- We restart it using static pods!
- This can be done automatically with the [Pod Checkpointer]

- The Pod Checkpointer automatically generates manifests of running pods

- The manifests are used to restart these pods if API contact is lost

  (More details in the [Pod Checkpointer] documentation page)

- This technique is used by [bootkube]

[Pod Checkpointer]: https://github.com/kubernetes-incubator/bootkube/blob/master/cmd/checkpoint/README.md
[bootkube]: https://github.com/kubernetes-incubator/bootkube

---

## Where should the control plane run?

*Is it better to run the control plane in static pods, or normal pods?*

- If I'm a *user* of the cluster: I don't care, it makes no difference to me

- What if I'm an *admin*, i.e. the person who installs, upgrades, repairs... the cluster?

- If I'm using a managed Kubernetes cluster (AKS, EKS, GKE...) it's not my problem

  (I'm not the one setting up and managing the control plane)

- If I already picked a tool (kubeadm, kops...) to set up my cluster, the tool decides for me

- What if I haven't picked a tool yet, or if I'm installing from scratch?

  - static pods = easier to set up, easier to troubleshoot, less risk of outage

  - normal pods = easier to upgrade, easier to move (if nodes need to be shut down)

---

## Static pods in action

- On our clusters, the `staticPodPath` is `/etc/kubernetes/manifests`

.exercise[

- Have a look at this directory:
  ```bash
  ls -l /etc/kubernetes/manifests
  ```

]

We should see YAML files corresponding to the pods of the control plane.
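
Where does `staticPodPath` come from? It is a field of the kubelet configuration file. Here is a minimal sketch of the relevant fragment, assuming a kubeadm-provisioned node. On such nodes the file is usually `/var/lib/kubelet/config.yaml`, but that location is an assumption and may differ on our cluster.

```yaml
# Fragment of a kubelet configuration file (all other fields omitted).
# Assumption: the file location depends on how the node was provisioned.
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
# Directory that the kubelet scans for static pod manifests
staticPodPath: /etc/kubernetes/manifests
```

Any pod manifest placed in that directory is picked up by the kubelet on that node, without going through the API server.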
---

class: static-pods-exercise

## Running a static pod

- We are going to add a pod manifest to the directory, and kubelet will run it

.exercise[

- Copy a manifest to the directory:
  ```bash
  sudo cp ~/container.training/k8s/just-a-pod.yaml /etc/kubernetes/manifests
  ```

- Check that it's running:
  ```bash
  kubectl get pods
  ```

]

The output should include a pod named `hello-node1`.

---

class: static-pods-exercise

## Remarks

In the manifest, the pod was named `hello`.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: hello
  namespace: default
spec:
  containers:
  - name: hello
    image: nginx
```

The `-node1` suffix was added automatically by kubelet.

If we delete the pod (with `kubectl delete`), it will be recreated immediately.

To delete the pod, we need to delete (or move) the manifest file.

---

name: toc-next-steps
class: title

Next steps

.nav[
[Previous section](#toc-static-pods)
|
[Back to table of contents](#toc-chapter-9)
|
[Next section](#toc-links-and-resources)
]

---

# Next steps

*Alright, how do I get started and containerize my apps?*

--

Suggested containerization checklist:

.checklist[

- write a Dockerfile for one service in one app

- write Dockerfiles for the other (buildable) services

- write a Compose file for that whole app

- make sure that devs are empowered to run the app in containers

- set up automated builds of container images from the code repo

- set up a CI pipeline using these container images

- set up a CD pipeline (for staging/QA) using these images

]

And *then* it is time to look at orchestration!
.debug[[k8s/whatsnext.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/whatsnext.md)]

---

## Options for our first production cluster

- Get a managed cluster from a major cloud provider (AKS, EKS, GKE...)

  (price: $, difficulty: medium)

- Hire someone to deploy it for us

  (price: $$, difficulty: easy)

- Do it ourselves

  (price: $-$$$, difficulty: hard)

---

## One big cluster vs. multiple small ones

- Yes, it is possible to have prod+dev in a single cluster

  (and implement good isolation and security with RBAC, network policies...)

- But it is not a good idea to do that for our first deployment

- Start with a production cluster + at least a test cluster

- Implement and check RBAC and isolation on the test cluster

  (e.g. deploy multiple test versions side-by-side)

- Make sure that all our devs have usable dev clusters

  (whether it's a local minikube or a full-blown multi-node cluster)

---

## Namespaces

- Namespaces let you run multiple identical stacks side by side

- Two namespaces (e.g. `blue` and `green`) can each have their own `redis` service

- Each of the two `redis` services has its own `ClusterIP`

- CoreDNS creates two entries, mapping to these two `ClusterIP` addresses:

  `redis.blue.svc.cluster.local` and `redis.green.svc.cluster.local`

- Pods in the `blue` namespace get a *search suffix* of `blue.svc.cluster.local`

- As a result, resolving `redis` from a pod in the `blue` namespace yields the "local" `redis`

.warning[This does not provide *isolation*! That would be the job of network policies.]
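
To make this concrete, here is a sketch of two Services with the same name living in two namespaces. It assumes that the `blue` and `green` namespaces already exist and that each contains pods labeled `app: redis`. The label and the port number are illustrative assumptions, not taken from the workshop files.

```yaml
# Two Services named "redis", one per namespace, grouped in a single List.
# Assumptions: namespaces "blue" and "green" exist; pods carry the label app=redis.
apiVersion: v1
kind: List
items:
- apiVersion: v1
  kind: Service
  metadata:
    name: redis
    namespace: blue        # DNS name: redis.blue.svc.cluster.local
  spec:
    selector:
      app: redis           # hypothetical label
    ports:
    - port: 6379           # default Redis port
- apiVersion: v1
  kind: Service
  metadata:
    name: redis
    namespace: green       # DNS name: redis.green.svc.cluster.local
  spec:
    selector:
      app: redis           # hypothetical label
    ports:
    - port: 6379
```

From a pod in `blue`, the short name `redis` reaches the first Service, while `redis.green` (or the full `redis.green.svc.cluster.local`) still reaches the second one. This is why namespaces alone do not isolate workloads.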
---

## Relevant sections

- [Namespaces](kube-selfpaced.yml.html#toc-namespaces)

- [Network Policies](kube-selfpaced.yml.html#toc-network-policies)

- [Role-Based Access Control](kube-selfpaced.yml.html#toc-authentication-and-authorization)

  (covers permissions model, user and service accounts management ...)

---

## Stateful services (databases etc.)

- As a first step, it is wiser to keep stateful services *outside* of the cluster

- Exposing them to pods can be done with multiple solutions:

  - `ExternalName` services
    <br/>
    (`redis.blue.svc.cluster.local` will be a `CNAME` record)

  - `ClusterIP` services with explicit `Endpoints`
    <br/>
    (instead of letting Kubernetes generate the endpoints from a selector)

  - Ambassador services
    <br/>
    (application-level proxies that can provide credentials injection and more)

---

## Stateful services (second take)

- If we want to host stateful services on Kubernetes, we can use:

  - a storage provider

  - persistent volumes, persistent volume claims

  - stateful sets

- Good questions to ask:

  - what's the *operational cost* of running this service ourselves?

  - what do we gain by deploying this stateful service on Kubernetes?
- Relevant sections:
  [Volumes](kube-selfpaced.yml.html#toc-volumes)
  |
  [Stateful Sets](kube-selfpaced.yml.html#toc-stateful-sets)
  |
  [Persistent Volumes](kube-selfpaced.yml.html#toc-highly-available-persistent-volumes)

- Excellent [blog post](http://www.databasesoup.com/2018/07/should-i-run-postgres-on-kubernetes.html) tackling the question:
  “Should I run Postgres on Kubernetes?”

---

## HTTP traffic handling

- *Services* are layer 4 constructs

- HTTP is a layer 7 protocol

- It is handled by *ingresses* (a different resource kind)

- *Ingresses* allow:

  - virtual host routing
  - session stickiness
  - URI mapping
  - and much more!

- [This section](kube-selfpaced.yml.html#toc-exposing-http-services-with-ingress-resources) shows how to expose multiple HTTP apps using [Træfik](https://docs.traefik.io/user-guide/kubernetes/)

---

## Logging

- Logging is delegated to the container engine

- Logs are exposed through the API

- Logs are also accessible through local files (`/var/log/containers`)

- Log shipping to a central platform is usually done through these files

  (e.g.
with an agent bind-mounting the log directory)

- [This section](kube-selfpaced.yml.html#toc-centralized-logging) shows how to do that with [Fluentd](https://docs.fluentd.org/v0.12/articles/kubernetes-fluentd) and the EFK stack

---

## Metrics

- The kubelet embeds [cAdvisor](https://github.com/google/cadvisor), which exposes container metrics

  (cAdvisor might be separated in the future for more flexibility)

- It is a good idea to start with [Prometheus](https://prometheus.io/)

  (even if you end up using something else)

- Starting from Kubernetes 1.8, we can use the [Metrics API](https://kubernetes.io/docs/tasks/debug-application-cluster/core-metrics-pipeline/)

- [Heapster](https://github.com/kubernetes/heapster) was a popular add-on

  (but is being [deprecated](https://github.com/kubernetes/heapster/blob/master/docs/deprecation.md) starting with Kubernetes 1.11)

---

## Managing the configuration of our applications

- Two constructs are particularly useful: secrets and config maps

- They allow us to expose arbitrary information to our containers

- **Avoid** storing configuration in container images

  (There are some exceptions to that rule, but it's generally a Bad Idea)

- **Never** store sensitive information in container images

  (It's the container equivalent of the password on a post-it note on your screen)

- [This section](kube-selfpaced.yml.html#toc-managing-configuration) shows how to manage app config with config maps (among others)

---

## Managing stack deployments

- The best deployment tool will vary, depending on:

  - the size and complexity of your stack(s)
  - how often you change it (i.e.
add/remove components)
  - the size and skills of your team

- A few examples:

  - shell scripts invoking `kubectl`
  - YAML resource descriptions committed to a repo
  - [Helm](https://github.com/kubernetes/helm) (~package manager)
  - [Spinnaker](https://www.spinnaker.io/) (Netflix's CD platform)
  - [Brigade](https://brigade.sh/) (event-driven scripting; no YAML)

---

## Cluster federation

--

Sorry Star Trek fans, this is not the federation you're looking for!

--

(If I add "Your cluster is in another federation" I might get a 3rd fandom wincing!)

---

## Cluster federation

- Kubernetes master operation relies on etcd

- etcd uses the [Raft](https://raft.github.io/) protocol

- Raft recommends low latency between nodes

- What if our cluster spreads to multiple regions?

--

- Break it down into local clusters

- Regroup them in a *cluster federation*

- Synchronize resources across clusters

- Discover resources across clusters

---

## Developer experience

*We've put this last, but it's pretty important!*

- How do you on-board a new developer?

- What do they need to install to get a dev stack?

- How does a code change make it from dev to prod?

- How does someone add a component to a stack?
---

name: toc-links-and-resources
class: title

Links and resources

.nav[
[Previous section](#toc-next-steps)
|
[Back to table of contents](#toc-chapter-9)
]

---

# Links and resources

All things Kubernetes:

- [Kubernetes Community](https://kubernetes.io/community/) - Slack, Google Groups, meetups
- [Kubernetes on StackOverflow](https://stackoverflow.com/questions/tagged/kubernetes)
- [Play With Kubernetes Hands-On Labs](https://medium.com/@marcosnils/introducing-pwk-play-with-k8s-159fcfeb787b)

All things Docker:

- [Docker documentation](http://docs.docker.com/)
- [Docker Hub](https://hub.docker.com)
- [Docker on StackOverflow](https://stackoverflow.com/questions/tagged/docker)
- [Play With Docker Hands-On Labs](http://training.play-with-docker.com/)

Everything else:

- [Local meetups](https://www.meetup.com/)

.footnote[These slides (and future updates) are on → http://container.training/]

.debug[[k8s/links.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/k8s/links.md)]

---

class: title, self-paced

Thank you!

.debug[[shared/thankyou.md](https://github.com/djalal/orchestration-workshop.git/tree/master/slides/shared/thankyou.md)]

---

class: title, in-person

That's all for today!
<br/>
Questions?